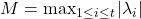

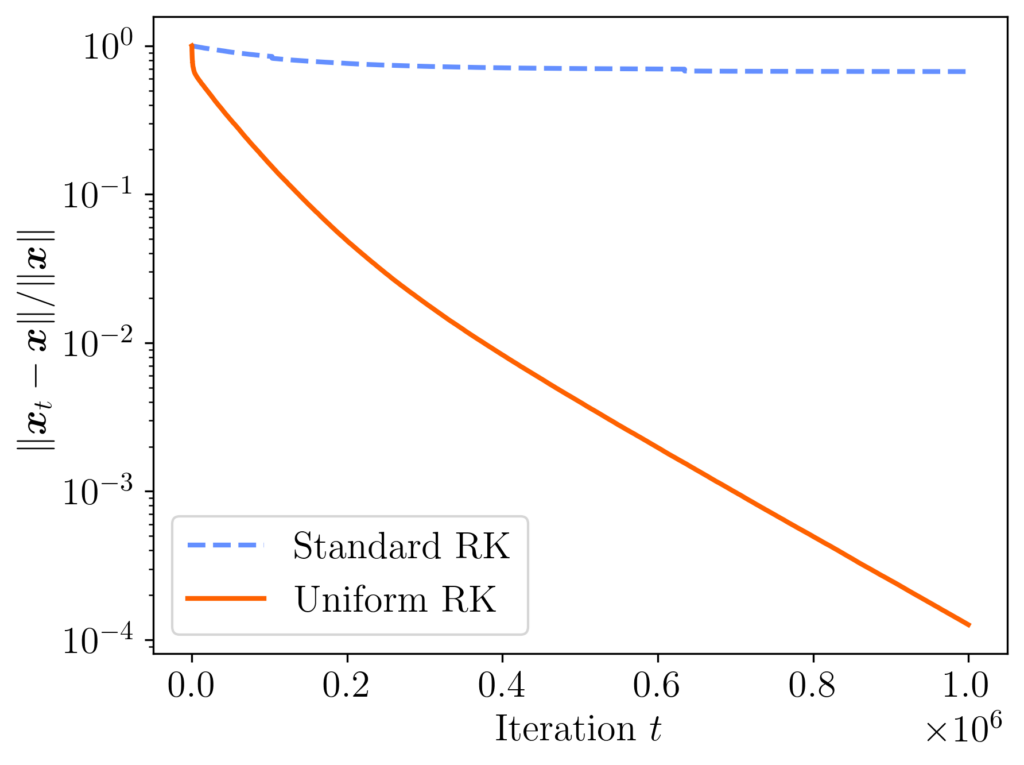

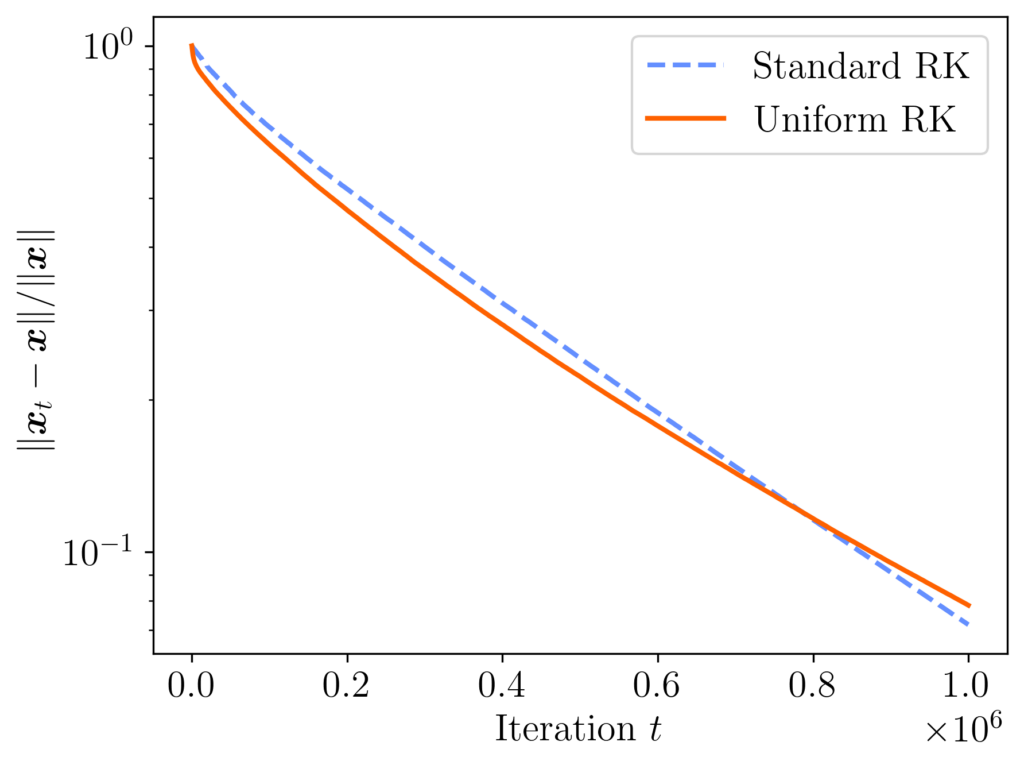

I am excited to share that my paper Does block size matter in randomized block Krylov low-rank approximation? has recently been released on arXiv. In that paper, we study the randomized block Krylov iteration (RBKI) algorithm for low-rank approximation. Existing results show that RBKI is efficient at producing rank- approximations with a large block size of

approximations with a large block size of  or a small block size of

or a small block size of  , but these results give poor results for intermediate block sizes

, but these results give poor results for intermediate block sizes  . But often these intermediate block sizes are the most efficient in practice. In our paper, we close this theoretical gap, showing RBKI is efficient for any block size

. But often these intermediate block sizes are the most efficient in practice. In our paper, we close this theoretical gap, showing RBKI is efficient for any block size  . Check out the paper for details!

. Check out the paper for details!

In our paper, the core technical challenge is understanding the condition number of a random block Krylov matrix of the form

![Rendered by QuickLaTeX.com \[K = \begin{bmatrix} G & AG & \cdots & A^{t-1}G \end{bmatrix},\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-3a6ec37fe54d09f2ee9f6f2c648dafd1_l3.png)

where

is an

real

symmetric positive semidefinite matrix and

is an

random

Gaussian matrix. Proving this result was not easy, and our proof required several ingredients. In this post, I want to talk about just one of them: Gautschi’s bound on the conditioning of

Vandermonde matrices. (Check out Gautschi’s original paper

here.)

Evaluating a Polynomial and Vandermonde Matrices

Let us begin with one the humblest but most important characters in mathematics, the univariate polynomial

![Rendered by QuickLaTeX.com \[p_a(u) = a_1 + a_2 u+ \cdots + a_t u^{t-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-ba898ff67858e9c5a76463593337b542_l3.png)

Here, we have set the degree to be

and have written the polynomial

parametrically in terms of its vector of coefficients

. The polynomials of degree at most

form a

linear space of dimension

, and the monomials

provide a

basis for this space. In this post, we will permit the coefficients

to be complex numbers.

Given a polynomial, we often wish to evaluate it at a set of inputs. Specifically, let  be

be  (distinct) input locations. If we evaluate

(distinct) input locations. If we evaluate  at each number, we obtain a list of (output) values, which we denote by

at each number, we obtain a list of (output) values, which we denote by  of

of  (distinct) values, each of which given by the formula

(distinct) values, each of which given by the formula

![Rendered by QuickLaTeX.com \[p_a(\lambda_i) = \sum_{j=1}^t \lambda_i^{j-1} a_j.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-eaaf53acb1f30e1ced80af4704800e02_l3.png)

Observe that the outputs

are a

nonlinear function of the input values

but a

linear function of the coefficients

. We may call the mapping

the

coefficients-to-values map.

Every linear transformation between vectors can be realized as a matrix–vector product, and the matrix for the coefficients-to-values map is called a Vandermonde matrix  . It is given by the formula

. It is given by the formula

![Rendered by QuickLaTeX.com \[V= \begin{bmatrix} 1 & \lambda_1 & \lambda_1^2 & \cdots & \lambda_1^{t-1} \\ 1 & \lambda_2 & \lambda_2^2 & \cdots & \lambda_2^{t-1} \\ 1 & \lambda_3 & \lambda_3^2 & \cdots & \lambda_3^{t-1} \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 1 & \lambda_s & \lambda_s^2 & \cdots & \lambda_s^{t-1} \end{bmatrix} \in \complex^{s\times t}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-2d100f803f43a4910ba6c03d2edde384_l3.png)

The Vandermonde matrix defines the coefficients-to-values map in the sense that

.

Interpolating by a Polynomial and Inverting a Vandermonde Matrix

Going forward, let us set  so the number of locations

so the number of locations  equals the number of coefficients

equals the number of coefficients  . The Vandermonde matrix maps the vector of coefficients

. The Vandermonde matrix maps the vector of coefficients  to the vector of values

to the vector of values  . Its inverse

. Its inverse  maps a set of values

maps a set of values  to a set of coefficients

to a set of coefficients  defining a polynomial

defining a polynomial  which interpolates the values

which interpolates the values  :

:

![Rendered by QuickLaTeX.com \[p_a(\lambda_i) = f_i \quad \text{for } i =1,\ldots,t .\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-0cab3d96a2006c60b95e665b3c9afc97_l3.png)

More concisely, multiplying the inverse of a Vandermonde matrix solves the problem of

polynomial interpolation.

To solve the polynomial interpolation problem with Vandermonde matrices, we can do the following. Given values  , we first solve the linear system of equations

, we first solve the linear system of equations  , obtaining a vector of coefficients

, obtaining a vector of coefficients  . Then, define the interpolating polynomial

. Then, define the interpolating polynomial

(1) ![Rendered by QuickLaTeX.com \[q(u) = a_1 + a_2 u + \cdots + a_t u^{t-1} \quad \text{with } a = V^{-1} f. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-b44af0dbb2f4e6b3c74a40b23d074f68_l3.png)

The polynomial

interpolates the values

at the locations

,

.

Lagrange Interpolation

Inverting a the Vandermonde matrix is one way to solve the polynomial interpolation problem, but the polynomial interpolation can also be solved directly. To do so, first notice that we can construct a special polynomial  that is zero at the locations

that is zero at the locations  but nonzero at the first location

but nonzero at the first location  . (Remember that we have assumed that

. (Remember that we have assumed that  are distinct.) Further, by rescaling this polynomial to

are distinct.) Further, by rescaling this polynomial to

![Rendered by QuickLaTeX.com \[\ell_1(u) = \frac{(u - \lambda_2)(u - \lambda_3)\cdots(u-\lambda_t)}{(\lambda_1 - \lambda_2)(\lambda_1 - \lambda_3)\cdots(\lambda_1-\lambda_t)},\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-174996c439ec13555388925748a3269e_l3.png)

we obtain a polynomial whose value at

can be set to

. Likewise, for each

, we can define a similar polynomial

(2) ![Rendered by QuickLaTeX.com \[\ell_i(u) = \frac{(u - \lambda_1)\cdots(u-\lambda_{i-1}) (u-\lambda_{i+1})\cdots(u-\lambda_t)}{(\lambda_i - \lambda_1)\cdots(\lambda_i-\lambda_{i-1}) (\lambda_i-\lambda_{i+1})\cdots(\lambda_i-\lambda_t)}, \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-5453dea91bda2e744ca98b5dc0ae9790_l3.png)

which is

at

and zero at

for

. Using the Dirac delta symbol, we may write

![Rendered by QuickLaTeX.com \[\ell_i(\lambda_j) = \delta_{ij} = \begin{cases} 1, & i = j, \\ 0, & i\ne j. \end{cases}\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-64c4effeb0f12225a76059d89ccce692_l3.png)

The polynomials

are called the

Lagrange polynomials of the locations

. Below is an interactive illustration of the second Lagrange polynomial

associated with the points

(with

).

With the Lagrange polynomials in hand, the polynomial interpolation problem is easy. To obtain a polynomial whose values are  , simply multiply each Lagrange polynomial

, simply multiply each Lagrange polynomial  by the value

by the value  and sum up, obtaining

and sum up, obtaining

![Rendered by QuickLaTeX.com \[q(u) = \sum_{i=1}^t f_i \ell_i(u).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-c1c5043718b5df795a883a6a60d26aaf_l3.png)

The polynomial

interpolates the values

. Indeed,

(3) ![Rendered by QuickLaTeX.com \[q(\lambda_j) = \sum_{i=1}^t f_i \ell_i(\lambda_j) = \sum_{i=1}^t f_i \delta_{ij} = f_j. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-90032b377ebe250ef36dccba2186b6ce_l3.png)

The interpolating polynomial computed is shown in the interactive display below:

From Lagrange to Vandermonde via Elementary Symmetric Polynomials

We now have two ways of solving the polynomial interpolation problem, the Vandermonde way (1) and the Lagrange way (3). Ultimately, the difference between these formulas is one of basis: The Vandermonde formula (1) expresses the interpolating polynomial  as a linear combination of monomials

as a linear combination of monomials  and the Lagrange formula (3) expresses

and the Lagrange formula (3) expresses  as a linear combination of the Lagrange polynomials

as a linear combination of the Lagrange polynomials  . To convert between these formulas, we just need to express the Lagrange polynomial basis in the monomial basis.

. To convert between these formulas, we just need to express the Lagrange polynomial basis in the monomial basis.

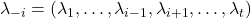

To do so, let us examine the Lagrange polynomials more closely. Consider first the case  , and consider the fourth unnormalized Lagrange polynomial

, and consider the fourth unnormalized Lagrange polynomial

![Rendered by QuickLaTeX.com \[(u - \lambda_1) (u - \lambda_2) (u - \lambda_3).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-d3fe2471c7c0557476992f6f7a1361cf_l3.png)

Expanding this polynomial in the monomials

, we obtain the expression

![Rendered by QuickLaTeX.com \[(u - \lambda_1) (u - \lambda_2) (u - \lambda_3) = u^3 - (\lambda_1 + \lambda_2 + \lambda_3) u^2 + (\lambda_1\lambda_2 + \lambda_1\lambda_3 + \lambda_2\lambda_3)u - \lambda_1\lambda_2\lambda_3.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-ad4d01202c2b9a69e395412829cc7fed_l3.png)

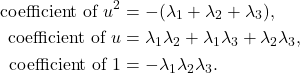

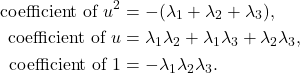

Looking at the coefficients of this polynomial in

, we recognize some pretty distinctive expressions involving the

‘s:

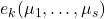

Indeed, these expressions are special. Up to a plus-or-minus sign, they are called the elementary symmetric polynomials of the locations  . Specifically, given numbers

. Specifically, given numbers  , the

, the  th elementary symmetric polynomial

th elementary symmetric polynomial  is defined as the sum of all products

is defined as the sum of all products  of

of  values, i.e.,

values, i.e.,

![Rendered by QuickLaTeX.com \[e_k(\mu_1,\ldots,\mu_{t-1}) = \sum_{i_1 < i_2 < \cdots < i_k} \mu_{i_1}\mu_{i_2}\cdots \mu_{i_k}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-49b00bf8f1a0f6fc399e029516a4682e_l3.png)

The zeroth elementary symmetric polynomial is

by convention.

The elementary symmetric polynomials appear all the time in mathematics. In particular, they are the coefficients of the characteristic polynomial of a matrix and feature heavily in the theory of determinantal point processes. For our purposes, the key observation will be that the elementary symmetric polynomials appear whenever one expands out an expression like  :

:

Lemma 1 (Expanding a product of linear functions). It holds that

![Rendered by QuickLaTeX.com \[(u + \mu_1) (u + \mu_2) \cdots (u+\mu_k) = \sum_{j=0}^k e_{k-j}(\mu_1,\ldots,\mu_k) u^j.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-fe26dd7ba2e215fba4fea5cd28147cb2_l3.png)

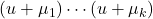

Using this fact, we obtain an expression for the Lagrange polynomials in the monomial basis. Let  denote the list of locations without

denote the list of locations without  . Then the

. Then the  th Lagrange polynomial is given by

th Lagrange polynomial is given by

![Rendered by QuickLaTeX.com \[\ell_i(u) = \frac{\sum_{j=1}^t e_{t-j}(-\lambda_{-i})u^{j-1}}{\prod_{k\ne i} (\lambda_i - \lambda_k)}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-15d6cb602dcf916e14363b25a013870d_l3.png)

Not exactly a beautiful expression, but it will get the job done.

Indeed, we can write the interpolating polynomial as

![Rendered by QuickLaTeX.com \[q(u) = \sum_{i=1}^t f_i\ell_i(u) = \sum_{i=1}^t \sum_{j=1}^t f_i \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)} u^{j-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-17012971c8949a309b08ae230bf7fa94_l3.png)

To make progress, we interchange the order of summation and regroup:

![Rendered by QuickLaTeX.com \[q(u) = \sum_{j=1}^t \sum_{i=1}^t f_i \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)} u^{j-1} = \sum_{j=1}^t \left(\sum_{i=1}^t \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)}\cdot f_i \right) u^{j-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-10960bce48c6a124c253db4c1272f2e2_l3.png)

We see that the coefficients of the interpolating polynomial (in the monomial basis) are

![Rendered by QuickLaTeX.com \[a_j = \sum_{i=1}^t \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)}\cdot f_i.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-2bb3d1280c99ca124084f031ed343679_l3.png)

But we also know that the coefficients are given by

. Therefore, we conclude that the entries of the inverse-Vandermonde matrix

are

(4) ![Rendered by QuickLaTeX.com \[(V^{-1})_{ji} = \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-c3d618b9ee7195d040d1875f76fb1d67_l3.png)

Vandermonde Matrices are Merely Exponentially Ill-Conditioned

Vandermonde matrices are notoriously ill-conditioned, meaning that small changes to the values  can cause large changes to the coefficients

can cause large changes to the coefficients  . On its face, this might seem like the problem of polynomial interpolation itself is ill-conditioned, but this is too hasty a conclusion. After all, it is only the mapping from values

. On its face, this might seem like the problem of polynomial interpolation itself is ill-conditioned, but this is too hasty a conclusion. After all, it is only the mapping from values  to coefficients

to coefficients  in the monomial basis that is ill-conditioned. Fortunately, there are much better, more numerically stable bases for representing a polynomial like the Chebyshev polynomials.

in the monomial basis that is ill-conditioned. Fortunately, there are much better, more numerically stable bases for representing a polynomial like the Chebyshev polynomials.

But these more stable methods of polynomial interpolation and approximation are not the subject of this post: Here, our task is to will be to characterize just how ill-conditioned the computation of  is. To characterize this ill-conditioning, we will utilize the condition number of the matrix

is. To characterize this ill-conditioning, we will utilize the condition number of the matrix  . Given a norm

. Given a norm  , the condition number of

, the condition number of  is defined to be

is defined to be

![Rendered by QuickLaTeX.com \[\kappa_{\uinorm{\cdot}}(V) = \uinorm{V} \uinorm{\smash{V^{-1}}}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-cf4da36f5f2ce121d2d2512423310221_l3.png)

For this post, we will focus on the case where

is chosen to be the (

operator)

–

norm, defined as

![Rendered by QuickLaTeX.com \[\norm{A}_1= \max_{x \ne 0} \frac{\norm{Ax}_1}{\norm{x}_1} \quad \text{where } \norm{x}_1 = \sum_i |x_i|.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-beb68f2e11bc5c6e30b06fec3b886ff3_l3.png)

The

-norm

has a simple characterization: It is the maximum sum of the absolute values of the entries in any column

(5) ![Rendered by QuickLaTeX.com \[\norm{A}_\infty = \max_j \sum_i |A_{ij}|. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-936a7309e99d6c03a128b3a7771c88df_l3.png)

We shall denote the

-norm condition number

.

Bounding  is straightforward. Indeed, setting

is straightforward. Indeed, setting  and using (5), we compute

and using (5), we compute

![Rendered by QuickLaTeX.com \[\norm{V}_1= \max_{1\le j \le t} \sum_{i=1}^t |\lambda_i|^{j-1} \le \max_{1\le j \le t} tM^{j-1} = t\max\{1,M^{t-1}\}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-ec5f488cb8233df69dd22a00e9bae784_l3.png)

An even weaker, but still useful, bound is

![Rendered by QuickLaTeX.com \[\norm{V}_1\le t(1+M)^{t-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-8cecac332b3fcbe899304e963ec1fe47_l3.png)

The harder task is bounding  . Fortunately, we have already done most of the hard work needed to bound this quantity. Using our expression (4) for the entries of

. Fortunately, we have already done most of the hard work needed to bound this quantity. Using our expression (4) for the entries of  and using the formula (5) for the

and using the formula (5) for the  -norm, we have

-norm, we have

![Rendered by QuickLaTeX.com \[\norm{\smash{V^{-1}}}_1= \max_{1\le j \le t} \sum_{i=1}^t |(V^{-1})_{ij}| = \max_{1\le j \le t}\frac{ \sum_{i=1}^t |e_{t-i}(-\lambda_{-j})|}{\prod_{k\ne j} |\lambda_j- \lambda_k|}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-b431915bf4dd9e0a3087cd91f5e3533a_l3.png)

To bound this expression, we make use of the following “triangle inequality” for elementary symmetric polynomials

![Rendered by QuickLaTeX.com \[|e_k(\mu_1,\ldots,\mu_s)| \le e_k(|\mu_1|,\ldots,|\mu_s|).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-73a7d10b6aadf0271cb538d648049f13_l3.png)

Using this bound and defining

, we obtain

![Rendered by QuickLaTeX.com \[\norm{\smash{V^{-1}}}_1\le \max_{1\le j \le t}\frac{ \sum_{i=1}^t e_{t-i}(|\lambda_{-j}|)}{\prod_{k\ne j} |\lambda_j- \lambda_k|}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-34c4b502a43c91513781e51c25190ad1_l3.png)

We now use the Lemma 1 with

to obtain the expression

(6) ![Rendered by QuickLaTeX.com \[\norm{\smash{V^{-1}}}_1\le \max_{1\le j \le t}\frac{ \prod_{k\ne j} (1 + |\lambda_k|)}{\prod_{k\ne j} |\lambda_j- \lambda_k|}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-9fee19675094e57026eaf8571e060317_l3.png)

Equation (6) is

Gautschi’s bound on the norm of the inverse of a Vandermonde matrix, the result we were working towards proving.

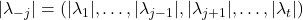

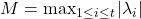

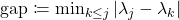

Often, it is helpful to simply Gautschi’s bound a bit. Setting  as above, the numerator is bounded as

as above, the numerator is bounded as  . To bound the denominator, let

. To bound the denominator, let  be the smallest distance between two locations. Using

be the smallest distance between two locations. Using  and

and  , we can weaken the bound (6) to obtain

, we can weaken the bound (6) to obtain

![Rendered by QuickLaTeX.com \[\norm{\smash{V^{-1}}}_1\le \left(\frac{1+M}{\mathrm{gap}}\right)^{t-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-4df225e8dea0e69df68907fc81ab32e6_l3.png)

Combining this with our bound on

from above, we obtain a bound on the condition number

![Rendered by QuickLaTeX.com \[\kappa_1(V) \le t \left( \frac{(1+M)^2}{\mathrm{gap}} \right)^{t-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-a88bac288484194059179a7e854bc368_l3.png)

We record these results:

Theorem 2 (Gautschi’s bound, simplified). Introduce  and

and  . Then

. Then

![Rendered by QuickLaTeX.com \[\norm{\smash{V^{-1}}}_1\le \left(\frac{1+M}{\mathrm{gap}}\right)^{t-1}\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-933010efafd6e0336cdf5b4071c2c90b_l3.png)

and ![Rendered by QuickLaTeX.com \[\kappa_1(V) \le t \left( \frac{(1+M)^2}{\mathrm{gap}} \right)^{t-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-a88bac288484194059179a7e854bc368_l3.png)

Gautschi’s bound suggests that Vandermonde matrices can be very ill-conditioned, which is disappointing. But Gautschi’s bound also shows that Vandermonde matrices are merely exponentially ill-conditioned—that is, they are not worse than exponentially conditioned. The fact that Vandermonde matrices are only exponentially ill-conditioned plays a crucial role in our analysis of randomized block Krylov iteration.

Gautschi’s Bound as a Robust Version of the Fundamental Theorem of Algebra

The fundamental theorem of algebra states that a degree- polynomial has precisely

polynomial has precisely  roots. Consequently, at

roots. Consequently, at  locations, it must be nonzero at least one. But how nonzero must the polynomial be at that one location? How large must it be? On this subject, the fundamental theorem of algebra is moot. However, Gautschi’s bound provides an answer.

locations, it must be nonzero at least one. But how nonzero must the polynomial be at that one location? How large must it be? On this subject, the fundamental theorem of algebra is moot. However, Gautschi’s bound provides an answer.

To answer this question, we ask: What is the minimum possible size  of the values

of the values  ? Well, if we set all the coefficients

? Well, if we set all the coefficients  to zero, then

to zero, then  as well. So to avoid this trivial case, we should enforce a normalization condition on the coefficient vector

as well. So to avoid this trivial case, we should enforce a normalization condition on the coefficient vector  , say

, say  . With this setting, we are ready to compute. Begin by observing that

. With this setting, we are ready to compute. Begin by observing that

![Rendered by QuickLaTeX.com \[\min_{\norm{a}_1 = 1} \norm{Va}_1 = \min_{a\ne 0} \frac{\norm{Va}_1}{\norm{a}_1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-3a21773d318eeb447cc3deec7d2ec9e1_l3.png)

Now, we make the change of variables

, obtaining

![Rendered by QuickLaTeX.com \[\min_{\norm{a}_1 = 1} \norm{Va}_1 = \min_{a\ne 0} \frac{\norm{Va}_1}{\norm{a}_1} = \min_{f\ne 0} \frac{\norm{f}_1}{\norm{\smash{V^{-1}f}}_1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-e223271ff5f99accd84933f4936864bd_l3.png)

Now, take the inverse of both sides to obtain

![Rendered by QuickLaTeX.com \[\left(\min_{\norm{a}_1 = 1} \norm{Va}_1\right)^{-1} = \max_{f\ne 0} \frac{\norm{\smash{V^{-1}f}}_1}{\norm{f}_1} = \norm{\smash{V^{-1}}}_1.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-745fdb308eb43cd71eb1fda9a7b16762_l3.png)

Ergo, we conclude

![Rendered by QuickLaTeX.com \[\min_{\norm{a}_1 = 1} \norm{Va}_1 = \norm{\smash{V^{-1}}}_1^{-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-145e10d104c261b8eba6886d9e5a88d0_l3.png)

Indeed, this relation holds for all operator norms:

Proposition 3 (Minimum stretch). For vector norm  and its induced operator norm

and its induced operator norm  , it holds that for any square invertible matrix

, it holds that for any square invertible matrix  that

that

![Rendered by QuickLaTeX.com \[\min_{\uinorm{v} = 1} \uinorm{Av} = \min_{v\ne 0} \frac{\uinorm{Av}}{\uinorm{v}} = \uinorm{\smash{A^{-1}}}^{-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-c07d1dd4195814db2ab892e8587fb9b4_l3.png)

Using this result, we obtain the lower bound  on the values

on the values  of a polynomial with coefficients

of a polynomial with coefficients  . Combining with Gautschi’s bound gives the following robust version of the fundamental theorem of algebra:

. Combining with Gautschi’s bound gives the following robust version of the fundamental theorem of algebra:

Theorem 4 (Robust fundamental theorem of algebra). Fix a polynomial  and locations

and locations  . Define

. Define  and

and  . Then

. Then

![Rendered by QuickLaTeX.com \[|p(\lambda_1)| + \cdots + |p(\lambda_t)| \ge \left(\frac{\mathrm{gap}}{1+M}\right)^{t-1} (|a_1| + \cdots + |a_t|).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-f011798a0af29d677a21bf1d0fb00152_l3.png)

Thus, at  locations, a degree-

locations, a degree- polynomial must be nonzero at least one point. In fact, the sum of the values at these

polynomial must be nonzero at least one point. In fact, the sum of the values at these  locations must be no worse than exponentially small in

locations must be no worse than exponentially small in  .

.

![]() be random vectors and let

be random vectors and let ![]() denote their tensor product. Assume the vectors

denote their tensor product. Assume the vectors ![]() are isotropic, in the sense that

are isotropic, in the sense that ![]()

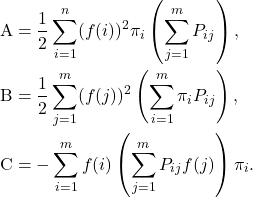

![]() ? This question was addressed by Raphael Meyer and Haim Avron. The variance of the trace estimator

? This question was addressed by Raphael Meyer and Haim Avron. The variance of the trace estimator ![]() is

is ![]()

![]()

![]()

![]() is a tensor product of isotropic vectors

is a tensor product of isotropic vectors ![]() and

and ![]() , and suppose that

, and suppose that ![]()

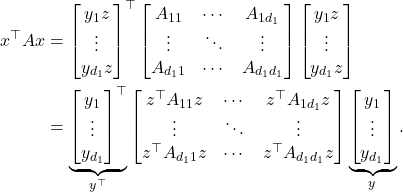

![Rendered by QuickLaTeX.com \[A = \begin{bmatrix} A_{11} & \cdots & A_{1d_1} \\ \vdots & \ddots & \vdots \\ A_{d_1 1} & \cdots & A_{d_1 d_1} \end{bmatrix}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-43bb38960318d2dc04d04e021243f074_l3.png)

![Rendered by QuickLaTeX.com \begin{align*}\expect_y [(x^\top A x)^2] &\le \alpha_1 \left( \tr \begin{bmatrix} z^\top A_{11}z & \cdots & z^\top A_{1d_1}z \\ \vdots & \ddots & \vdots \\ z^\top A_{d_1 1}z & \cdots & z^\top A_{d_1 d_1}z \end{bmatrix} \right)^2 \\ &= \alpha_1 [z^\top(A_{11}+\cdots+ A_{d_1d_1})z]^2.\end{align*}](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-4536f123e88a9b329362cc19825464cc_l3.png)

![]()

![]()

![Rendered by QuickLaTeX.com \[f_{\rm ramp}(t) = \begin{cases} 1-t/2, & 0 \le t \le 2, \\ 0, & 2 < t \le b.\end{cases}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-26e453856802dfaca0eef8e4b383a649_l3.png)

![Rendered by QuickLaTeX.com \[p_a(\lambda_i) = \sum_{j=1}^t \lambda_i^{j-1} a_j.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-eaaf53acb1f30e1ced80af4704800e02_l3.png)

![Rendered by QuickLaTeX.com \[V= \begin{bmatrix} 1 & \lambda_1 & \lambda_1^2 & \cdots & \lambda_1^{t-1} \\ 1 & \lambda_2 & \lambda_2^2 & \cdots & \lambda_2^{t-1} \\ 1 & \lambda_3 & \lambda_3^2 & \cdots & \lambda_3^{t-1} \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 1 & \lambda_s & \lambda_s^2 & \cdots & \lambda_s^{t-1} \end{bmatrix} \in \complex^{s\times t}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-2d100f803f43a4910ba6c03d2edde384_l3.png)

![Rendered by QuickLaTeX.com \[\ell_i(\lambda_j) = \delta_{ij} = \begin{cases} 1, & i = j, \\ 0, & i\ne j. \end{cases}\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-64c4effeb0f12225a76059d89ccce692_l3.png)

![Rendered by QuickLaTeX.com \[q(u) = \sum_{i=1}^t f_i \ell_i(u).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-c1c5043718b5df795a883a6a60d26aaf_l3.png)

![Rendered by QuickLaTeX.com \[q(\lambda_j) = \sum_{i=1}^t f_i \ell_i(\lambda_j) = \sum_{i=1}^t f_i \delta_{ij} = f_j. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-90032b377ebe250ef36dccba2186b6ce_l3.png)

![Rendered by QuickLaTeX.com \[(u + \mu_1) (u + \mu_2) \cdots (u+\mu_k) = \sum_{j=0}^k e_{k-j}(\mu_1,\ldots,\mu_k) u^j.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-fe26dd7ba2e215fba4fea5cd28147cb2_l3.png)

![Rendered by QuickLaTeX.com \[\ell_i(u) = \frac{\sum_{j=1}^t e_{t-j}(-\lambda_{-i})u^{j-1}}{\prod_{k\ne i} (\lambda_i - \lambda_k)}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-15d6cb602dcf916e14363b25a013870d_l3.png)

![Rendered by QuickLaTeX.com \[q(u) = \sum_{i=1}^t f_i\ell_i(u) = \sum_{i=1}^t \sum_{j=1}^t f_i \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)} u^{j-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-17012971c8949a309b08ae230bf7fa94_l3.png)

![Rendered by QuickLaTeX.com \[q(u) = \sum_{j=1}^t \sum_{i=1}^t f_i \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)} u^{j-1} = \sum_{j=1}^t \left(\sum_{i=1}^t \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)}\cdot f_i \right) u^{j-1}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-10960bce48c6a124c253db4c1272f2e2_l3.png)

![Rendered by QuickLaTeX.com \[a_j = \sum_{i=1}^t \frac{e_{t-j}(-\lambda_{-i})}{\prod_{k\ne i} (\lambda_i - \lambda_k)}\cdot f_i.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-2bb3d1280c99ca124084f031ed343679_l3.png)

![Rendered by QuickLaTeX.com \[\norm{V}_1= \max_{1\le j \le t} \sum_{i=1}^t |\lambda_i|^{j-1} \le \max_{1\le j \le t} tM^{j-1} = t\max\{1,M^{t-1}\}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-ec5f488cb8233df69dd22a00e9bae784_l3.png)

![Rendered by QuickLaTeX.com \[\norm{\smash{V^{-1}}}_1= \max_{1\le j \le t} \sum_{i=1}^t |(V^{-1})_{ij}| = \max_{1\le j \le t}\frac{ \sum_{i=1}^t |e_{t-i}(-\lambda_{-j})|}{\prod_{k\ne j} |\lambda_j- \lambda_k|}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-b431915bf4dd9e0a3087cd91f5e3533a_l3.png)

![Rendered by QuickLaTeX.com \[\norm{\smash{V^{-1}}}_1\le \max_{1\le j \le t}\frac{ \sum_{i=1}^t e_{t-i}(|\lambda_{-j}|)}{\prod_{k\ne j} |\lambda_j- \lambda_k|}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-34c4b502a43c91513781e51c25190ad1_l3.png)

![Rendered by QuickLaTeX.com \[\operatorname{sign}(x) = \begin{cases} 1, & x > 0, \\ 0 & x = 0, \\ -1 & x < 0.\end{cases}\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-28ef3c2b8f17f4fc65f112fc1ea06c86_l3.png)

![Rendered by QuickLaTeX.com \[\mu^{(t)} = \left(x^{(t)}\right)^\top Ax^{(t)}= \frac{\left(x^{(0)}\right)^\top A^{2t+1} x^{(0)}}{\left(x^{(0)}\right)^\top A^{2t} x^{(0)}}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-8744da7cad08a86191f52369bd86e7b3_l3.png)

![Rendered by QuickLaTeX.com \[\frac{\lambda_1 - \mu^{(t)}}{\lambda_1} \le \frac{\sum_{i=2}^n \lambda_i^{2t} z_i^2}{\lambda_1^{2t} z_1^2 + \sum_{i=2}^n \lambda_i^{2t} z_i^2} = \frac{c^2}{\lambda_1^{2t} z_1^2 + c^2} \quad \text{for } c^2 = \sum_{i=2}^n \lambda_i^{2t} z_i^2.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-752c7ced1eb1bd8cc0ddf1eacdbc5b1c_l3.png)

![Rendered by QuickLaTeX.com \[\expect_{z_1}\left[\frac{\lambda_1 - \mu^{(t)}}{\lambda_1}\right] \le \expect_{z_1} \left[z_1 \cdot \frac{c}{\lambda_1} \arctan \left( \frac{\lambda_1^t}{c} \cdot z_1 \right) \right] = \expect_{z_1} \left[|z_1| \cdot \frac{c}{\lambda_1^t} \left|\arctan \left( \frac{\lambda_1^t}{c} \cdot z_1 \right)\right| \right].\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-b8e649fd7a63c495f6f2fa09d9534c3b_l3.png)

![Rendered by QuickLaTeX.com \[\expect_{z_1}\left[\frac{\lambda_1 - \mu^{(t)}}{\lambda_1}\right] \le = \frac{\pi}{2} \cdot \frac{c}{\lambda_1^t} \cdot \expect_{z_1} \left[|z_1|\right] \le \sqrt{\frac{\pi}{2}} \cdot \frac{c}{\lambda_1^t}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-e9d84cb3710fdc80b0166f5491e5b3da_l3.png)

![Rendered by QuickLaTeX.com \[c = \sqrt{\sum_{i=2} \lambda_i^{2t} z_i^2} \le \lambda_2^{t} \sqrt{\sum_{i=2}^n z_i^2} = \lambda_2^t \norm{(z_2,\ldots,z_n)}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-7facff60e6dd9b6579d16ed28a6b0f47_l3.png)

![Rendered by QuickLaTeX.com \[\expect\left[\frac{\lambda_1 - \mu^{(t)}}{\lambda_1}\right] = \expect_c\left[\expect_{z_1} \left[ \frac{\lambda_1 - \mu^{(t)}}{\lambda_1} \right]\right] \le \sqrt{\frac{\pi}{2}} \cdot \expect\left[ \frac{c}{\lambda_1^t} \right] =\sqrt{\frac{\pi}{2}} \cdot \left(\frac{\lambda_2}{\lambda_1}\right)^t \cdot \sqrt{n-1} .\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-71e78c2a3b2280f94d13d08cfeed954c_l3.png)

![Rendered by QuickLaTeX.com \[\kappa_{\rm dem}(A) = \frac{\norm{A}_{\rm F}}{\sigma_{\rm min}(A)} = \sqrt{\sum_i \left(\frac{\sigma_i(A)}{\sigma_{\rm min}(A)}\right)^2} \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-824557fbf90de5be0f9756f9089728aa_l3.png)

![Rendered by QuickLaTeX.com \[\operatorname{sign}(x) = \begin{cases} 1, & x > 0, \\ 0, & x = 0, \\ -1, & x < 0. \end{cases}\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-103d0549df7eab731cd55caeaef8f0f2_l3.png)

![Rendered by QuickLaTeX.com \[\langle \varphi_i ,\varphi_j\rangle = \begin{cases}1, & i = j, \\0, & i \ne j.\end{cases}\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-1bcdaefcfb18b68324fe7793e2cb3534_l3.png)

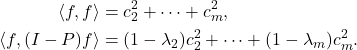

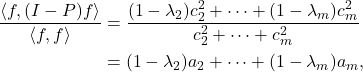

![Rendered by QuickLaTeX.com \[\mathcal{E}(f) = \frac{1}{2} \sum_{i,j=1}^m (f(i)-f(j))^2 \pi_iP_{ij}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-3b001418aee305354517a3784887e27f_l3.png)

![Rendered by QuickLaTeX.com \[{\rm B} = \frac{1}{2} \sum_{j=1}^m (f(j))^2 \left(\sum_{i=1}^m \pi_j P_{ji} \right) = \frac{1}{2} \sum_{j=1}^m (f(j))^2 \pi_j = \frac{1}{2} \langle f, f\rangle.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-d26f896a5e016a55ba59c4b0abca8f69_l3.png)

![Rendered by QuickLaTeX.com \[x^\top (A\circ M)x = \sum_{i,j=1}^n x_i (A\circ M)_{ij} x_j = \sum_{i,j=1}^n x_i A_{ij} M_{ij} x_j.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-ad02e17a6c90aca9b469ef0fe73bb1d3_l3.png)

![Rendered by QuickLaTeX.com \[x^\top (A\circ M)x = \sum_{i,j=1}^n x_i A_{ij} x_j M_{ji} = \tr(\operatorname{diag}(x) A \operatorname{diag}(x) M).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-4386c5381ce8bcc4e8ded09b2c00bcf5_l3.png)