This post is part of a series, Markov Musings, about the mathematical analysis of Markov chains. See here for the first post in the series.

In the previous two posts, we proved the fundamental theorem of Markov chains using couplings and discussed the use of couplings to bound the mixing time, the time required for the chain to mix to near-stationarity.

In this post, we continue this discussion by turning to spectral methods for understanding the convergence of Markov chains.

Mathematical Setting

In this post, we will study a reversible Markov chain on $\{1,\ldots,m\}$ with transition probability matrix $P \in \mathbb{R}^{m\times m}$ and stationary distribution $\pi$. Recall that reversibility means that the detailed balance condition $\pi_i P_{ij} = \pi_j P_{ji}$ holds for all states $i$ and $j$. Our goal will be to use the eigenvalues and eigenvectors of the matrix $P$ to understand the properties of the Markov chain.

Throughout our discussion, it will be helpful to treat vectors $f \in \mathbb{R}^m$ and functions $f : \{1,\ldots,m\} \to \mathbb{R}$ as being one and the same, and we will use both $f_i$ and $f(i)$ to denote the $i$th entry of the vector $f$ (aka the evaluation of the function $f$ at $i$). By adopting this perspective, a vector is not merely a list of $m$ numbers, but instead labels each state $i$ with a numeric value.

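Given a function/vector $f$, we let $\mathbb{E}_\pi[f]$ denote the expected value of $f(x)$ where $x$ is drawn from $\pi$:

\[\mathbb{E}_\pi[f] = \sum_{i=1}^m f(i)\, \pi_i.\]

Similarly, we let $\operatorname{Var}_\pi(f)$ denote the variance of $f(x)$ for $x$ drawn from $\pi$:

\[\operatorname{Var}_\pi(f) = \mathbb{E}_\pi\left[\left(f - \mathbb{E}_\pi[f]\right)^2\right] = \sum_{i=1}^m \left(f(i) - \mathbb{E}_\pi[f]\right)^2 \pi_i.\]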
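The expectation $\mathbb{E}_\pi$ is just a $\pi$-weighted sum. As a minimal sketch (NumPy; the helper names `expect` and `var` and the example $\pi$ and $f$ are our own, hypothetical choices), here it is together with the corresponding variance, which the $\chi^2$ divergence later relies on:

```python
import numpy as np


def expect(f, pi):
    """E_pi[f] = sum_i f(i) * pi_i for a function/vector f and distribution pi."""
    return float(np.dot(f, pi))


def var(f, pi):
    """Var_pi(f) = E_pi[(f - E_pi[f])^2]."""
    f = np.asarray(f, dtype=float)
    return expect((f - expect(f, pi)) ** 2, pi)


# Illustrative (hypothetical) distribution on 3 states and a function labeling each state.
pi = np.array([0.5, 0.25, 0.25])
f = np.array([1.0, 2.0, 4.0])

print(expect(f, pi))  # 2.0
print(var(f, pi))     # 1.5
```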

As a final piece of notation, we let $\delta_i$ denote a probability distribution which assigns 100% probability to outcome $i$.

Spectral Theory

The eigenvalues and eigenvectors of general square matrices are ill-behaved creatures. Indeed, a general $m\times m$ matrix with real entries can have complex-valued eigenvalues and fail to possess a full suite of $m$ linearly independent eigenvectors. The situation is dramatically better for symmetric matrices, which obey the spectral theorem:

Theorem (spectral theorem for real symmetric matrices). An $m\times m$ real symmetric matrix $A$ (i.e., one satisfying $A^\top = A$) has $m$ real eigenvalues $\lambda_1,\ldots,\lambda_m$ and $m$ orthonormal eigenvectors $u_1,\ldots,u_m$.

Unfortunately for us, the transition matrix $P$ for a reversible Markov chain is not always symmetric. But despite this, there's a surprise: $P$ always has real eigenvalues. This leads us to ask:

Why are the eigenvalues of the transition matrix $P$ real?

To answer this question, we will need to develop a more general version of the spectral theorem and use our standing assumption that the Markov chain is reversible.

The Transpose

In our quest to develop a general version of the spectral theorem, we look more deeply into the hypothesis of the theorem, namely that $A$ is equal to its transpose $A^\top$. Let's first ask: What does the transpose $A^\top$ mean?

Recall that $\mathbb{R}^m$ is equipped with the standard inner product, sometimes called the Euclidean or dot product. We denote this product by $(\cdot,\cdot)$ and it is defined as

\[(u, v) = u^\top v = \sum_{i=1}^m u_i v_i.\]

The transpose of a matrix $A$ is closely related to the standard inner product. Specifically, $A^\top$ is the (unique) matrix satisfying the adjoint property:

\[(Au, v) = (u, A^\top v) \quad \text{for all } u, v \in \mathbb{R}^m.\]

The Adjoint and the General Spectral Theorem

Since the transpose is intimately related to the standard inner product on $\mathbb{R}^m$, it is natural to wonder if non-standard inner products on $\mathbb{R}^m$ give rise to non-standard versions of the transpose. This idea proves to be true.

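Definition (adjoint). Let $[\cdot,\cdot]$ be any inner product on $\mathbb{R}^m$ and let $A$ be an $m\times m$ matrix. The adjoint of $A$ with respect to the inner product $[\cdot,\cdot]$ is the (unique) matrix $A^*$ such that

\[[Au, v] = [u, A^* v] \quad \text{for all } u, v \in \mathbb{R}^m.\]

A matrix $A$ is self-adjoint if it equals its own adjoint, $A = A^*$.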
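To make the definition concrete, recall that every inner product on $\mathbb{R}^m$ can be written as $[u, v] = u^\top W v$ for some symmetric positive definite matrix $W$. Then $[Au, v] = u^\top A^\top W v = u^\top W (W^{-1} A^\top W) v$, so the adjoint is $A^* = W^{-1} A^\top W$. The NumPy sketch below checks this numerically; the helper names `adjoint` and `inner` and the random test matrices are illustrative assumptions. Taking $W = I$ recovers the ordinary transpose.

```python
import numpy as np


def adjoint(A, W):
    """Adjoint of A w.r.t. the inner product [u, v] = u^T W v (W symmetric positive definite).

    Solving W X = A^T W gives X = W^{-1} A^T W without forming W^{-1} explicitly.
    """
    return np.linalg.solve(W, A.T @ W)


def inner(u, v, W):
    """The weighted inner product [u, v] = u^T W v."""
    return float(u @ W @ v)


# Hypothetical random test data.
rng = np.random.default_rng(0)
m = 4
A = rng.standard_normal((m, m))
B = rng.standard_normal((m, m))
W = B @ B.T + m * np.eye(m)  # symmetric positive definite by construction

A_star = adjoint(A, W)
u, v = rng.standard_normal(m), rng.standard_normal(m)
print(np.isclose(inner(A @ u, v, W), inner(u, A_star @ v, W)))  # True
print(np.allclose(adjoint(A, np.eye(m)), A.T))                  # True: W = I gives A^T
```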

The spectral theorem naturally extends to the more abstract setting of adjoints with respect to non-standard inner products:

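Theorem (general spectral theorem). Let $A$ be an $m\times m$ matrix which is self-adjoint with respect to an inner product $[\cdot,\cdot]$. Then $A$ has $m$ real eigenvalues $\lambda_1,\ldots,\lambda_m$ and $m$ eigenvectors $u_1,\ldots,u_m$ which are $[\cdot,\cdot]$-orthonormal:

\[[u_i, u_j] = \begin{cases} 1, & i = j, \\ 0, & i \ne j. \end{cases}\]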

Reversibility and Self-Adjointness

The general spectral theorem offers us a path to understand our observation from above that the eigenvalues of $P$ are always real. Namely, we ask:

Is there an inner product under which $P$ is self-adjoint?

Fortunately, the answer is yes. Define the ![]() -inner product

-inner product

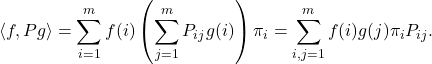

![]()

Let us compute the adjoint of the transition matrix $P$ in the $\pi$-inner product $\langle\cdot,\cdot\rangle$:

\[\langle f, Pg \rangle = \sum_{i=1}^m f(i)\, (Pg)(i)\, \pi_i = \sum_{i,j=1}^m f(i)\, P_{ij}\, g(j)\, \pi_i.\]

By the detailed balance condition $\pi_i P_{ij} = \pi_j P_{ji}$, this becomes

\[\langle f, Pg \rangle = \sum_{i,j=1}^m f(i)\, g(j)\, \pi_j P_{ji} = \sum_{j=1}^m \left( \sum_{i=1}^m P_{ji} f(i) \right) g(j)\, \pi_j = \langle Pf, g\rangle.\]

Thus, $P$ is self-adjoint in the $\pi$-inner product.
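Here is a minimal numerical check of this computation. As an assumption for illustration, it uses a random walk on a small weighted undirected graph, $P_{ij} = w_{ij} / \sum_k w_{ik}$ with symmetric weights $w_{ij}$, a standard reversible chain whose stationary distribution is proportional to the weighted degrees. The helpers `weighted_graph_chain` and `pi_inner` are hypothetical names.

```python
import numpy as np


def weighted_graph_chain(W):
    """Random walk on a weighted graph: P[i, j] = W[i, j] / deg(i).

    For symmetric W, pi_i = deg(i) / sum(deg) satisfies detailed balance.
    """
    W = np.asarray(W, dtype=float)
    deg = W.sum(axis=1)
    return W / deg[:, None], deg / deg.sum()


def pi_inner(f, g, pi):
    """The pi-inner product <f, g> = sum_i f(i) g(i) pi_i."""
    return float(np.sum(f * g * pi))


# Illustrative symmetric weights (our own choice); the self-loop on the last state is allowed.
W = np.array([[0, 2, 1, 0],
              [2, 0, 1, 1],
              [1, 1, 0, 3],
              [0, 1, 3, 1]])
P, pi = weighted_graph_chain(W)

flow = pi[:, None] * P                   # flow[i, j] = pi_i P_ij
print(np.allclose(flow, flow.T))         # True: detailed balance holds
print(np.allclose(pi @ P, pi))           # True: pi is stationary

rng = np.random.default_rng(1)
f, g = rng.standard_normal(4), rng.standard_normal(4)
print(np.isclose(pi_inner(f, P @ g, pi), pi_inner(P @ f, g, pi)))  # True: <f, Pg> = <Pf, g>
```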

We cannot emphasize enough that the reversibility assumption is crucial to ensure that $P$ is self-adjoint. In fact,

Theorem (reversibility and self-adjointness). The transition matrix of a general Markov chain with stationary distribution $\pi$ is self-adjoint in the $\pi$-inner product if and only if the chain is reversible.
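The "only if" direction can be seen on a small example of our own choosing: a biased walk around a 3-cycle that steps clockwise with probability 2/3 and counterclockwise with probability 1/3. Its stationary distribution is uniform, but detailed balance fails. Writing the $\pi$-inner product as $u^\top \operatorname{diag}(\pi) v$, the adjoint of $P$ is $\operatorname{diag}(\pi)^{-1} P^\top \operatorname{diag}(\pi)$, which differs from $P$ here, and in this case $P$ indeed has non-real eigenvalues. A NumPy sketch:

```python
import numpy as np

# Illustrative non-reversible chain (our choice):
# biased walk on a 3-cycle, clockwise w.p. 2/3, counterclockwise w.p. 1/3.
P = np.array([[0.0, 2/3, 1/3],
              [1/3, 0.0, 2/3],
              [2/3, 1/3, 0.0]])
pi = np.full(3, 1/3)  # P is doubly stochastic, so the uniform distribution is stationary

flow = pi[:, None] * P
print(np.allclose(pi @ P, pi))    # True: pi is stationary
print(np.allclose(flow, flow.T))  # False: detailed balance fails

D = np.diag(pi)
P_adj = np.linalg.solve(D, P.T @ D)  # adjoint of P in the pi-inner product
print(np.allclose(P_adj, P))         # False: P is not self-adjoint

eigs = np.linalg.eigvals(P)
print(np.max(np.abs(eigs.imag)) > 1e-8)  # True: a complex-conjugate pair of eigenvalues
```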

Spectral Theorem for Markov Chains

Since $P$ is self-adjoint in the $\pi$-inner product, the general spectral theorem implies the following:

Corollary (spectral theorem for reversible Markov chains). The reversible Markov transition matrix $P$ has $m$ real eigenvalues $\lambda_1 \ge \lambda_2 \ge \cdots \ge \lambda_m$ associated with eigenvectors $\varphi_1,\ldots,\varphi_m$ which are orthonormal in the $\pi$-inner product:

\[\langle \varphi_i, \varphi_j \rangle = \begin{cases} 1, & i = j, \\ 0, & i \ne j. \end{cases}\]
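One convenient way to compute these eigenpairs in practice is a standard symmetrization trick that is not derived in this post: with $D = \operatorname{diag}(\pi)$, detailed balance makes $S = D^{1/2} P D^{-1/2}$ symmetric. If $Su = \lambda u$ with $u$ a unit vector, then $\varphi = D^{-1/2} u$ satisfies $P\varphi = \lambda\varphi$ and $\langle \varphi, \varphi \rangle = u^\top u = 1$. The sketch below applies `numpy.linalg.eigh` to $S$; the example chain and the helper names are illustrative assumptions.

```python
import numpy as np


def weighted_graph_chain(W):
    """Reversible random walk on a weighted graph (symmetric W); illustrative example."""
    W = np.asarray(W, dtype=float)
    deg = W.sum(axis=1)
    return W / deg[:, None], deg / deg.sum()


def reversible_eig(P, pi):
    """Eigenvalues (decreasing) and pi-orthonormal eigenvectors (columns) of a reversible P."""
    s = np.sqrt(pi)
    S = s[:, None] * P / s[None, :]  # S = D^{1/2} P D^{-1/2}, symmetric by detailed balance
    S = (S + S.T) / 2                # remove rounding-level asymmetry
    lam, U = np.linalg.eigh(S)       # ascending eigenvalues, orthonormal columns
    lam, U = lam[::-1], U[:, ::-1]   # reorder so lambda_1 >= ... >= lambda_m
    Phi = U / s[:, None]             # phi_k = D^{-1/2} u_k
    return lam, Phi


P, pi = weighted_graph_chain([[0, 2, 1, 0],
                              [2, 0, 1, 1],
                              [1, 1, 0, 3],
                              [0, 1, 3, 1]])
lam, Phi = reversible_eig(P, pi)

gram = Phi.T @ (pi[:, None] * Phi)       # matrix of <phi_i, phi_j>
print(np.allclose(gram, np.eye(4)))      # True: pi-orthonormal
print(np.allclose(P @ Phi, Phi * lam))   # True: P phi_k = lambda_k phi_k
```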

Since $P$ is a reversible Markov transition matrix, and not just any self-adjoint matrix, the eigenvalues of $P$ satisfy additional properties:

- Bounded. All of the eigenvalues of

lie between

lie between  and

and  .1Here’s an argument. The vectors

.1Here’s an argument. The vectors  span all of

span all of  , where

, where  denotes a vector with

denotes a vector with  in position

in position  and

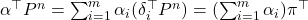

and  elsewhere. Then, by the fundamental theorem of Markov chains,

elsewhere. Then, by the fundamental theorem of Markov chains,  converges to

converges to  for every

for every  . In particular, for any vector

. In particular, for any vector  ,

,  . Thus, since

. Thus, since  for every vector

for every vector  , all of the eigenvalues must be

, all of the eigenvalues must be  in magnitude.

in magnitude. - Eigenvalue 1.

is an eigenvalue of

is an eigenvalue of  with eigenvector

with eigenvector  , where

, where  is a vector of all one’s.

is a vector of all one’s.

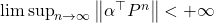

For a primitive chain, we also have the following property:

- Contractive. For a primitive chain, all eigenvalues other than $\lambda_1 = 1$ have magnitude $< 1$. [Footnote 2: This follows because $xP^n \to \left(\sum_{i=1}^m x_i\right)\pi$ for every row vector $x$, so $\pi$ must be the unique (up to scaling) left eigenvector with an eigenvalue of modulus $1$.]
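These properties are easy to spot-check numerically. The sketch below uses a random walk on a small weighted graph (an illustrative choice; it is primitive because the graph is connected and contains a triangle, hence is not bipartite) and confirms that the computed eigenvalues are real, lie in $[-1, 1]$, include $1$ with eigenvector $\mathbb{1}$, and that $\max\{\lambda_2, -\lambda_m\} < 1$.

```python
import numpy as np

# Illustrative reversible chain (our choice): random walk on a weighted graph.
W = np.array([[0, 2, 1, 0],
              [2, 0, 1, 1],
              [1, 1, 0, 3],
              [0, 1, 3, 1]], dtype=float)
P = W / W.sum(axis=1)[:, None]

eigs = np.linalg.eigvals(P)
print(np.allclose(eigs.imag, 0.0))   # True: eigenvalues are real
lam = np.sort(eigs.real)[::-1]       # lambda_1 >= lambda_2 >= ... >= lambda_m

print(np.all(np.abs(lam) <= 1 + 1e-12))          # True: bounded
print(np.isclose(lam[0], 1.0))                   # True: eigenvalue 1 ...
print(np.allclose(P @ np.ones(4), np.ones(4)))   # True: ... with eigenvector all-ones

lam_star = max(lam[1], -lam[-1])
print(lam_star < 1)                  # True: contractive, since this chain is primitive
```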

Distance Between Probability Distributions Redux

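In the previous post, we used the total variation distance to compare probability distributions:

Definition (total variation distance). The total variation distance between probability distributions $\sigma$ and $\pi$ is

\[\|\sigma - \pi\|_{\rm TV} = \max_{A \subseteq \{1,\ldots,m\}} \left| \sum_{i \in A} (\sigma_i - \pi_i) \right| = \frac{1}{2} \sum_{i=1}^m |\sigma_i - \pi_i|.\]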

The total variation distance is an “$\ell_1$” way of comparing two probability distributions since it can be computed by adding the absolute difference between the probabilities $\sigma_i$ and $\pi_i$ of each outcome. Spectral theory plays more nicely with an “$\ell_2$” way of comparing probability distributions, which we develop now.
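The total variation distance also equals the largest discrepancy $\max_A \left|\sum_{i \in A} (\sigma_i - \pi_i)\right|$ over events $A$, a standard identity. For small state spaces, the sketch below checks numerically that this brute-force maximum over all $2^m$ events agrees with half the sum of absolute differences. The function names and example distributions are hypothetical, and the brute-force version is only for illustration, since its cost grows exponentially in $m$.

```python
import itertools

import numpy as np


def tv_l1(sigma, pi):
    """Total variation distance as half the l1 distance."""
    return 0.5 * float(np.sum(np.abs(sigma - pi)))


def tv_max_event(sigma, pi):
    """Total variation distance as max over events A of |sigma(A) - pi(A)| (brute force)."""
    m = len(pi)
    best = 0.0  # the empty event contributes 0
    for r in range(1, m + 1):
        for A in itertools.combinations(range(m), r):
            idx = list(A)
            best = max(best, abs(float(np.sum(sigma[idx] - pi[idx]))))
    return best


# Illustrative distributions (our choice).
sigma = np.array([0.4, 0.1, 0.3, 0.2])
pi = np.full(4, 0.25)
print(tv_l1(sigma, pi), tv_max_event(sigma, pi))  # both approximately 0.2
```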

Densities

Given two probability distributions $\sigma$ and $\pi$, the density of $\sigma$ with respect to $\pi$ is the function $\frac{d\sigma}{d\pi}$ given by

\[\frac{d\sigma}{d\pi}(i) = \frac{\sigma_i}{\pi_i} \quad \text{for } i = 1, \ldots, m.\]

[Footnote 3: Note that we typically associate probability distributions $\sigma$ and $\pi$ with row vectors, whereas the density $\frac{d\sigma}{d\pi}$ is a function which we identify with column vectors. For those interested and familiar with measure theory, here is a good reason why this makes sense. In the continuum setting, probability distributions $\sigma$ and $\pi$ are measures whereas the density $\frac{d\sigma}{d\pi}$ remains a function, known as the Radon–Nikodym derivative of $\sigma$ with respect to $\pi$. This provides a general way of figuring out which objects for finite state space Markov chains are row vectors and which are column vectors: Measures are row vectors whereas functions are column vectors.]

Consequently, averages over $\sigma$ can be rewritten as averages over $\pi$ weighted by the density: for every function $f$,

(1) \[\mathbb{E}_\sigma[f] = \sum_{i=1}^m f(i)\,\sigma_i = \sum_{i=1}^m f(i)\,\frac{d\sigma}{d\pi}(i)\,\pi_i = \mathbb{E}_\pi\left[f \cdot \frac{d\sigma}{d\pi}\right].\]

To see why we call $\frac{d\sigma}{d\pi}$ a density, it may be helpful to appeal to continuous probability for a moment. If $X$ is a random variable with distribution $\sigma$ on $[0,1]$, the probability density function of $X$ (with respect to the uniform distribution $\lambda$ on $[0,1]$) is a function $f$ such that

\[\mathbb{E}[g(X)] = \int_0^1 g(x)\, f(x)\, dx = \mathbb{E}_\lambda[g f] \quad \text{for every bounded measurable function } g.\]

The density $\frac{d\sigma}{d\pi}$ plays exactly the same role in the discrete setting, with $\pi$ in place of $\lambda$: $\mathbb{E}_\sigma[g] = \mathbb{E}_\pi\left[g \cdot \frac{d\sigma}{d\pi}\right]$.

The Chi-Squared Divergence

We are now ready to introduce our “$\ell_2$” way of comparing probability distributions.

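Definition ($\chi^2$-divergence). The $\chi^2$-divergence of $\sigma$ with respect to $\pi$ is the variance of the density function:

\[\chi^2(\sigma \mid\mid \pi) \coloneqq \operatorname{Var}_\pi\left(\frac{d\sigma}{d\pi}\right) = \mathbb{E}_\pi\left[\left(\frac{d\sigma}{d\pi} - 1\right)^2\right] = \mathbb{E}_\pi\left[\left(\frac{d\sigma}{d\pi}\right)^2\right] - 1.\]

To see that the last two expressions are valid formulas for $\chi^2(\sigma \mid\mid \pi)$, note that the density has mean one:

(2) \[\mathbb{E}_\pi\left[\frac{d\sigma}{d\pi}\right] = \sum_{i=1}^m \frac{\sigma_i}{\pi_i}\,\pi_i = \sum_{i=1}^m \sigma_i = 1.\]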
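The equivalent expressions for $\chi^2(\sigma \mid\mid \pi)$ can be compared numerically. In the minimal sketch below (NumPy; the helper name `chi2` and the example distributions are our own), the divergence is computed directly as the $\pi$-variance of the density and checked against $\mathbb{E}_\pi[(d\sigma/d\pi - 1)^2]$ and $\mathbb{E}_\pi[(d\sigma/d\pi)^2] - 1 = \sum_i \sigma_i^2/\pi_i - 1$.

```python
import numpy as np


def chi2(sigma, pi):
    """chi^2(sigma || pi) computed as the pi-variance of the density d(sigma)/d(pi)."""
    h = sigma / pi                  # the density, h(i) = sigma_i / pi_i
    mean = np.dot(h, pi)            # equals 1, since the density has mean one
    return float(np.dot((h - mean) ** 2, pi))


# Illustrative distributions (our choice).
sigma = np.array([0.4, 0.1, 0.3, 0.2])
pi = np.array([0.1, 0.2, 0.3, 0.4])
h = sigma / pi

print(np.isclose(np.dot(h, pi), 1.0))                          # True: mean one
print(np.isclose(chi2(sigma, pi), np.dot((h - 1) ** 2, pi)))    # True
print(np.isclose(chi2(sigma, pi), np.sum(sigma**2 / pi) - 1))   # True
```

For these distributions, $\sum_i \sigma_i^2/\pi_i = 1.6 + 0.05 + 0.3 + 0.1 = 2.05$, so $\chi^2(\sigma \mid\mid \pi) = 1.05$.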

Relations Between Distance Measures

The $\chi^2$ divergence always gives an upper bound on the total variation distance. Indeed, first note that we can express the total variation distance as

\[\|\sigma - \pi\|_{\rm TV} = \frac{1}{2} \sum_{i=1}^m |\sigma_i - \pi_i| = \frac{1}{2} \sum_{i=1}^m \left| \frac{\sigma_i}{\pi_i} - 1 \right| \pi_i = \frac{1}{2}\, \mathbb{E}_\pi\left[ \left| \frac{d\sigma}{d\pi} - 1 \right| \right].\]

Then, by the Cauchy–Schwarz inequality,

(3) \[\|\sigma - \pi\|_{\rm TV} = \frac{1}{2}\, \mathbb{E}_\pi\left[ \left| \frac{d\sigma}{d\pi} - 1 \right| \right] \le \frac{1}{2} \left(\mathbb{E}_\pi\left[ \left( \frac{d\sigma}{d\pi} - 1 \right)^2 \right]\right)^{1/2} = \frac{1}{2} \sqrt{\chi^2(\sigma \mid\mid \pi)}.\]
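A quick randomized sanity check of (3): the sketch below draws random pairs of distributions (Dirichlet-distributed, an arbitrary choice on our part) and confirms that the total variation distance never exceeds $\frac{1}{2}\sqrt{\chi^2}$ in any trial.

```python
import numpy as np


def tv(sigma, pi):
    """Total variation distance, half the l1 distance."""
    return 0.5 * float(np.sum(np.abs(sigma - pi)))


def chi2(sigma, pi):
    """chi^2(sigma || pi) = sum_i sigma_i^2 / pi_i - 1."""
    return float(np.sum(sigma**2 / pi) - 1.0)


rng = np.random.default_rng(3)
ratios = []
for _ in range(1000):
    sigma = rng.dirichlet(np.ones(6))  # random test distributions (illustrative)
    pi = rng.dirichlet(np.ones(6))
    ratios.append(tv(sigma, pi) / (0.5 * np.sqrt(chi2(sigma, pi))))

print(max(ratios) <= 1.0 + 1e-12)  # True: TV <= (1/2) sqrt(chi^2) in every trial
```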

Markov Chain Convergence by Eigenvalues

Now, we prove a quantitative version of the fundamental theorem of Markov chains (for reversible processes) using spectral theory and eigenvalues:4Note that we used the fundamental theorem of Markov chains in above footnotes to prove the “bounded” and “contractive” properties, so, at present, this purported proof of the fundamental theorem would be circular. Fortunately, we can establish these two claims independently of the fundamental theorem, say, by appealing to the Perron–Frobenius theorem. Thus, this argument does give a genuine non-circular proof of the fundamental theorem.

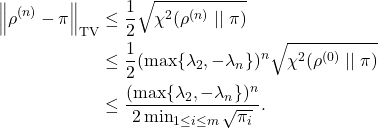

Theorem (Markov chain convergence by eigenvalues). Let

denote the distributions of the Markov chain at times

. Then

This raises a natural question: How large can the initial $\chi^2$ divergence between $\rho^{(0)}$ and $\pi$ be? This is answered by the next result.

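Proposition (Initial distance to stationarity). For any state $i$,

(4) \[\chi^2(\delta_i \mid\mid \pi) = \frac{1}{\pi_i} - 1.\]

For any initial distribution $\rho^{(0)}$, we have

(5) \[\chi^2(\rho^{(0)} \mid\mid \pi) \le \frac{1}{\min_i \pi_i} - 1.\]

The condition (4) is a direct computation:

\[\chi^2(\delta_i \mid\mid \pi) = \mathbb{E}_\pi\left[\left(\frac{d\delta_i}{d\pi}\right)^2\right] - 1 = \left(\frac{1}{\pi_i}\right)^2 \pi_i - 1 = \frac{1}{\pi_i} - 1.\]

The bound (5) then follows because $\chi^2(\sigma \mid\mid \pi) = \sum_{j=1}^m \sigma_j^2/\pi_j - 1$ is a convex function of $\sigma$ and $\rho^{(0)} = \sum_{i=1}^m \rho^{(0)}_i \delta_i$ is a convex combination of the point masses, so $\chi^2(\rho^{(0)} \mid\mid \pi) \le \sum_{i=1}^m \rho^{(0)}_i \left(\frac{1}{\pi_i} - 1\right) \le \frac{1}{\min_i \pi_i} - 1$.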
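Both claims can be checked numerically. The sketch below (with an example $\pi$ of our own choosing) compares $\chi^2(\delta_i \mid\mid \pi)$ with $1/\pi_i - 1$ for each state, then tests the bound $\chi^2(\rho \mid\mid \pi) \le 1/\min_i \pi_i - 1$ on random initial distributions $\rho$.

```python
import numpy as np


def chi2(sigma, pi):
    """chi^2(sigma || pi) = sum_i sigma_i^2 / pi_i - 1."""
    return float(np.sum(sigma**2 / pi) - 1.0)


pi = np.array([0.1, 0.2, 0.3, 0.4])  # illustrative stationary distribution
m = len(pi)

# Point masses: chi^2(delta_i || pi) should equal 1/pi_i - 1.
point_mass = [chi2(np.eye(m)[i], pi) for i in range(m)]
print(np.allclose(point_mass, 1 / pi - 1))  # True

# Random initial distributions stay below 1/min_i pi_i - 1 (here about 9).
rng = np.random.default_rng(4)
worst = max(chi2(rng.dirichlet(np.ones(m)), pi) for _ in range(1000))
print(worst <= 1 / pi.min() - 1)  # True
```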

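Using the previous two results and equation (3), we immediately obtain the following:

Corollary (Mixing time). Let $\lambda_\star = \max\{\lambda_2, -\lambda_m\}$. When initialized from a distribution $\rho^{(0)}$, the chain mixes to $\varepsilon$-total variation distance to stationarity, $\|\rho^{(n)} - \pi\|_{\rm TV} \le \varepsilon$, after

(6) \[n \ge \frac{1}{1 - \lambda_\star} \log\left(\frac{\sqrt{\chi^2(\rho^{(0)} \mid\mid \pi)}}{2\varepsilon}\right) \text{ steps.}\]

Indeed,

\[\|\rho^{(n)} - \pi\|_{\rm TV} \le \frac{1}{2}\sqrt{\chi^2(\rho^{(n)} \mid\mid \pi)} \le \frac{1}{2}\lambda_\star^n \sqrt{\chi^2(\rho^{(0)} \mid\mid \pi)} \le \frac{1}{2} e^{-n(1 - \lambda_\star)} \sqrt{\chi^2(\rho^{(0)} \mid\mid \pi)},\]

where the last step uses $1 - x \le e^{-x}$. Setting the right-hand side equal to $\varepsilon$ and solving for $n$ gives (6).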
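The corollary gives a concrete recipe: compute $\lambda_\star = \max\{\lambda_2, -\lambda_m\}$, take $n = \left\lceil \frac{1}{1-\lambda_\star}\log\frac{\sqrt{\chi^2(\rho^{(0)} \mid\mid \pi)}}{2\varepsilon} \right\rceil$, and the chain should then be $\varepsilon$-close to $\pi$ in total variation. The sketch below applies this recipe to a small reversible random walk on a weighted graph (an illustrative example, not from the post) started from a point mass, and verifies the guarantee by propagating $\rho^{(k+1)} = \rho^{(k)} P$ exactly. Since this is only an upper bound, the observed distance may be considerably smaller than $\varepsilon$.

```python
import math

import numpy as np

# Illustrative reversible chain (our choice): random walk on a weighted graph.
W = np.array([[0, 2, 1, 0],
              [2, 0, 1, 1],
              [1, 1, 0, 3],
              [0, 1, 3, 1]], dtype=float)
deg = W.sum(axis=1)
P, pi = W / deg[:, None], deg / deg.sum()

lam = np.sort(np.linalg.eigvals(P).real)[::-1]
lam_star = max(lam[1], -lam[-1])

eps = 1e-3
rho = np.eye(4)[0]                          # start from the point mass delta_1
chi2_0 = float(np.sum(rho**2 / pi) - 1.0)   # equals 1/pi_1 - 1
n = max(0, math.ceil(math.log(math.sqrt(chi2_0) / (2 * eps)) / (1 - lam_star)))

for _ in range(n):
    rho = rho @ P                           # rho^{(k+1)} = rho^{(k)} P
tv = 0.5 * float(np.sum(np.abs(rho - pi)))
print(n, tv <= eps)                         # the guarantee: tv <= eps
```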

Proof of Markov Chain Convergence Theorem

We conclude with a proof of the Markov chain convergence result.

Recurrence for Densities

Our first task is to derive a recurrence for the densities $\frac{d\rho^{(n)}}{d\pi}$. To do this, we use the recurrence for the probability distribution:

\[\rho^{(n+1)} = \rho^{(n)} P.\]

Therefore,

\[\left( \frac{d\rho^{(n+1)}}{d\pi}\right)_j = \frac{\rho_j^{(n+1)}}{\pi_j} = \frac{\sum_{i=1}^m \rho^{(n)}_i P_{ij}}{\pi_j} = \sum_{i=1}^m \rho^{(n)}_i \frac{P_{ij}}{\pi_j}.\]

We now invoke the detailed balance ![]() , which implies

, which implies

![Rendered by QuickLaTeX.com \[\left( \frac{d\rho^{(n+1)}}{d\pi}\right)_j = \sum_{i=1}^m\rho^{(n)}_i \frac{P_{ji}}{\pi_i} = \sum_{i=1}^m P_{ji} \frac{\rho^{(n)}_i}{\pi_i} = \left( P \frac{d \rho^{(n)}}{d\pi} \right)_j.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-d22315b80e3fc4d3736d0c49f877fa9c_l3.png)

(7) ![]()

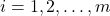
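The recurrence (7) can be checked directly: propagate the distribution by right-multiplication, $\rho^{(n+1)} = \rho^{(n)} P$, and separately the density by left-multiplication, $h^{(n+1)} = P h^{(n)}$, then compare $\rho^{(n)}/\pi$ with $h^{(n)}$. The weighted-graph chain below is an illustrative reversible example of our own choosing; for a non-reversible chain this identity need not hold.

```python
import numpy as np

# Illustrative reversible chain (our choice): random walk on a weighted graph.
W = np.array([[0, 2, 1, 0],
              [2, 0, 1, 1],
              [1, 1, 0, 3],
              [0, 1, 3, 1]], dtype=float)
deg = W.sum(axis=1)
P, pi = W / deg[:, None], deg / deg.sum()

rho = np.array([1.0, 0.0, 0.0, 0.0])   # rho^{(0)}: a point mass (row vector)
h = rho / pi                           # h^{(0)} = d rho^{(0)} / d pi (column vector)

ok = True
for n in range(10):
    rho = rho @ P                      # distributions evolve by right-multiplication
    h = P @ h                          # densities evolve by left-multiplication, eq. (7)
    ok = ok and np.allclose(rho / pi, h)
print(ok)  # True
```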

Spectral Decomposition

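Now that we have a recurrence for the densities $\frac{d\rho^{(n)}}{d\pi}$, we can understand how the densities change in time. Expand the initial density in eigenvectors of $P$:

\[\frac{d\rho^{(0)}}{d\pi} = \sum_{i=1}^m c_i\, \varphi_i, \quad \text{where } c_i = \left\langle \varphi_i, \frac{d\rho^{(0)}}{d\pi} \right\rangle.\]

Applying the recurrence (7) and using $P\varphi_i = \lambda_i \varphi_i$, we obtain

\[\frac{d\rho^{(n)}}{d\pi} = P^n\, \frac{d\rho^{(0)}}{d\pi} = \sum_{i=1}^m c_i\, \lambda_i^n\, \varphi_i.\]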
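Numerically, the coefficients $c_i = \langle \varphi_i, d\rho^{(0)}/d\pi \rangle$ are just $\pi$-weighted dot products. The sketch below obtains $\pi$-orthonormal eigenvectors through the symmetrized matrix $D^{1/2} P D^{-1/2}$ with $D = \operatorname{diag}(\pi)$ (a standard trick, assumed here rather than taken from the post), then checks that $\sum_i c_i \lambda_i^n \varphi_i$ matches $P^n\, d\rho^{(0)}/d\pi$ and that the $i = 1$ term is the all-ones vector. The example chain is our own illustrative choice.

```python
import numpy as np

# Illustrative reversible chain (our choice): random walk on a weighted graph.
W = np.array([[0, 2, 1, 0],
              [2, 0, 1, 1],
              [1, 1, 0, 3],
              [0, 1, 3, 1]], dtype=float)
deg = W.sum(axis=1)
P, pi = W / deg[:, None], deg / deg.sum()

# pi-orthonormal eigenvectors via the symmetric matrix S = D^{1/2} P D^{-1/2}
s = np.sqrt(pi)
S = s[:, None] * P / s[None, :]
lam, U = np.linalg.eigh((S + S.T) / 2)
lam, U = lam[::-1], U[:, ::-1]           # decreasing order: lam[0] is (numerically) 1
Phi = U / s[:, None]                     # columns are phi_1, ..., phi_m

h0 = np.array([1.0, 0.0, 0.0, 0.0]) / pi  # density of the point mass delta_1
c = Phi.T @ (pi * h0)                     # c_i = <phi_i, h0> in the pi-inner product

n = 5
lhs = np.linalg.matrix_power(P, n) @ h0
rhs = Phi @ (c * lam**n)
print(np.allclose(lhs, rhs))                       # True: expansion matches P^n h0
print(np.allclose(c[0] * Phi[:, 0], np.ones(4)))   # True: first term is the all-ones vector
```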

Conclusion

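Finally, recall that $\lambda_1 = 1$ with eigenvector $\mathbb{1}$, which is already normalized since $\langle \mathbb{1}, \mathbb{1} \rangle = \sum_i \pi_i = 1$; so we may take $\varphi_1 = \mathbb{1}$. Then $c_1 = \langle \mathbb{1}, d\rho^{(0)}/d\pi \rangle = \mathbb{E}_\pi[d\rho^{(0)}/d\pi] = 1$, and the $i = 1$ term of the expansion equals $\mathbb{1}$ for every $n$. Since the density has mean one, $\chi^2(\rho^{(n)} \mid\mid \pi) = \left\langle \frac{d\rho^{(n)}}{d\pi} - \mathbb{1}, \frac{d\rho^{(n)}}{d\pi} - \mathbb{1} \right\rangle$, and the $\pi$-orthonormality of the eigenvectors gives

\[\chi^2(\rho^{(n)} \mid\mid \pi) = \sum_{i=2}^m c_i^2 \lambda_i^{2n} \le \left(\max\{\lambda_2, -\lambda_m\}\right)^{2n} \sum_{i=2}^m c_i^2 = \left(\max\{\lambda_2, -\lambda_m\}\right)^{2n} \chi^2(\rho^{(0)} \mid\mid \pi).\]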
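As a final spot-check of the convergence theorem, the sketch below tracks $\chi^2(\rho^{(n)} \mid\mid \pi)$ for a small reversible chain (an illustrative weighted-graph random walk of our own choosing, started from a point mass) and confirms it never exceeds $\lambda_\star^{2n}\, \chi^2(\rho^{(0)} \mid\mid \pi)$ with $\lambda_\star = \max\{\lambda_2, -\lambda_m\}$. A tiny absolute tolerance absorbs floating-point rounding.

```python
import numpy as np

# Illustrative reversible chain (our choice): random walk on a weighted graph.
W = np.array([[0, 2, 1, 0],
              [2, 0, 1, 1],
              [1, 1, 0, 3],
              [0, 1, 3, 1]], dtype=float)
deg = W.sum(axis=1)
P, pi = W / deg[:, None], deg / deg.sum()

lam = np.sort(np.linalg.eigvals(P).real)[::-1]
lam_star = max(lam[1], -lam[-1])


def chi2(sigma, pi):
    """chi^2(sigma || pi) = sum_i sigma_i^2 / pi_i - 1."""
    return float(np.sum(sigma**2 / pi) - 1.0)


rho = np.array([0.0, 0.0, 0.0, 1.0])  # start from the point mass delta_4
chi2_0 = chi2(rho, pi)
holds = []
for n in range(20):
    holds.append(chi2(rho, pi) <= lam_star ** (2 * n) * chi2_0 + 1e-12)
    rho = rho @ P                     # advance one step: rho^{(n+1)} = rho^{(n)} P
print(all(holds))  # True
```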


2 thoughts on “Markov Musings 3: Spectral Theory”