Let  be random vectors and let

be random vectors and let  denote their tensor product. Assume the vectors

denote their tensor product. Assume the vectors  are isotropic, in the sense that

are isotropic, in the sense that

![Rendered by QuickLaTeX.com \[\expect[x_i^{\vphantom{\top}} x_i^\top] = I.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-5e65b0d7cf66e1cecc4c60470eca40af_l3.png)

The vector

inherits the isotropy property as well

![Rendered by QuickLaTeX.com \expect[xx^\top] = I](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-693de07bc613a6824b8000f4615ccf85_l3.png)

. As a consequence, we can use the vector

to form an

unbiased estimator for the matrix

trace ![Rendered by QuickLaTeX.com \expect[x^\top Ax] = \tr(A)](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-99c590c818d205b76d1b6ab2b4391edb_l3.png)

. Trace estimation has been a frequent topic

on this blog.

What is the variance of the trace estimate  ? This question was addressed by Raphael Meyer and Haim Avron. The variance of the trace estimator

? This question was addressed by Raphael Meyer and Haim Avron. The variance of the trace estimator  is

is

![Rendered by QuickLaTeX.com \[\Var(x^\top A x) = \expect[(x^\top A x)^2] - \expect[x^\top A x]^2 = \expect[(x^\top A x)^2] - \tr(A)^2.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-5647f8054d7455519ae8e1bef31af139_l3.png)

As such, bounding the variance is equivalent to bounding the second moment

![Rendered by QuickLaTeX.com \expect[(x^\top A x)^2]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-b5fbf8e67e4ff29170a0eebe078ce961_l3.png)

. Suppose that the individual base vectors

satisfy a moment bound

(1) ![Rendered by QuickLaTeX.com \[\expect[(x_i^\top A x_i)^2] \le \alpha \tr(A)^2 \quad \text{for every psd matrix } A.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-277e90ec9bcf7846732486a3c5953375_l3.png)

For instance, Gaussian, random sign, and uniformly random vectors on the sphere

all satisfy this bound with

. Under assumption (1), Meyer and Avron show that the tensor-product trace estimator

satisfies the bound

(2) ![Rendered by QuickLaTeX.com \[\expect[(x^\top A x)^2] \le \alpha^\ell \tr(A)^2 \quad \text{for any psd matrix } A.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-ee2f7f4328d1d2f7117f850c28a62528_l3.png)

Here, a matrix

is

positive semidefinite (psd) if it is

symmetric and satisfies

for any vector

. Observe, the constant in the bound (2) is exponentially larger than the constant in bound (1). Unfortunately,

as Meyer and Avron show, this exponentially large variance is a real property of the tensor-structured trace estimator, at least on worst-case examples.

Meyer and Avron’s paper is really nice, and it contains many different results for Kronecker-structured trace estimation beyond the bound (2). I highly recommend checking their paper out, which was just appeared in the SIAM Journal of Matrix Analysis and Applications! In this blog, I’ll give an alternate, somewhat shorter proof of the Meyer–Avron bound (2).

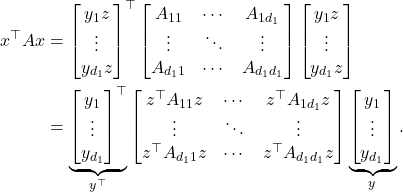

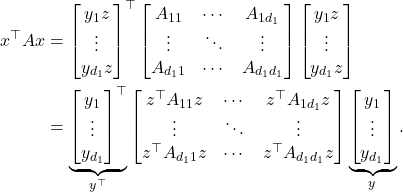

Suppose that  is a tensor product of isotropic vectors

is a tensor product of isotropic vectors  and

and  , and suppose that

, and suppose that

(3) ![Rendered by QuickLaTeX.com \[\expect[(y^\top A_1 y)^2] \le \alpha_1 \tr(A_1)^2 \quad \text{and} \quad \expect[(z^\top A_2 z)^2] \le \alpha_2 \tr(A_2)^2 \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-9b8a7bf17b8f16b0ec73e0027423055e_l3.png)

for any psd matrices

and

. Now, let

be an

psd matrix, and

partition

as

(4) ![Rendered by QuickLaTeX.com \[A = \begin{bmatrix} A_{11} & \cdots & A_{1d_1} \\ \vdots & \ddots & \vdots \\ A_{d_1 1} & \cdots & A_{d_1 d_1} \end{bmatrix}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-43bb38960318d2dc04d04e021243f074_l3.png)

Then

(1)

Taking

the expectation over the random vector  alone

alone and applying (3), we obtain

(2) ![Rendered by QuickLaTeX.com \begin{align*}\expect_y [(x^\top A x)^2] &\le \alpha_1 \left( \tr \begin{bmatrix} z^\top A_{11}z & \cdots & z^\top A_{1d_1}z \\ \vdots & \ddots & \vdots \\ z^\top A_{d_1 1}z & \cdots & z^\top A_{d_1 d_1}z \end{bmatrix} \right)^2 \\ &= \alpha_1 [z^\top(A_{11}+\cdots+ A_{d_1d_1})z]^2.\end{align*}](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-4536f123e88a9b329362cc19825464cc_l3.png)

Taking the expectation over

and invoking

the law of total expectation then yields

(3) ![Rendered by QuickLaTeX.com \begin{align*}\expect [(x^\top A x)^2] &\le \alpha_1 \alpha_2 \tr(A_{11}+\cdots+ A_{d_1d_1})^2=\alpha_1 \alpha_2\tr(A)^2.\end{align*}](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-5f49a9eb10fd37e189a8f518add54828_l3.png)

In the last line, we observe that the trace of

is the sum of the traces of its diagonal blocks

. Voilà! We have deduced the bound

![Rendered by QuickLaTeX.com \[\expect [(x^\top A x)^2] \le \alpha_1\alpha_2 \tr(A)^2,\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-434b9be6486b07cf1509bbe664e7f3d4_l3.png)

which immediately yields the Meyer–Avron bound (2) by iteration.

![]() be random vectors and let

be random vectors and let ![]() denote their tensor product. Assume the vectors

denote their tensor product. Assume the vectors ![]() are isotropic, in the sense that

are isotropic, in the sense that ![]()

![]() ? This question was addressed by Raphael Meyer and Haim Avron. The variance of the trace estimator

? This question was addressed by Raphael Meyer and Haim Avron. The variance of the trace estimator ![]() is

is ![]()

![]()

![]()

![]() is a tensor product of isotropic vectors

is a tensor product of isotropic vectors ![]() and

and ![]() , and suppose that

, and suppose that ![]()

![Rendered by QuickLaTeX.com \[A = \begin{bmatrix} A_{11} & \cdots & A_{1d_1} \\ \vdots & \ddots & \vdots \\ A_{d_1 1} & \cdots & A_{d_1 d_1} \end{bmatrix}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-43bb38960318d2dc04d04e021243f074_l3.png)

![Rendered by QuickLaTeX.com \begin{align*}\expect_y [(x^\top A x)^2] &\le \alpha_1 \left( \tr \begin{bmatrix} z^\top A_{11}z & \cdots & z^\top A_{1d_1}z \\ \vdots & \ddots & \vdots \\ z^\top A_{d_1 1}z & \cdots & z^\top A_{d_1 d_1}z \end{bmatrix} \right)^2 \\ &= \alpha_1 [z^\top(A_{11}+\cdots+ A_{d_1d_1})z]^2.\end{align*}](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-4536f123e88a9b329362cc19825464cc_l3.png)

![]()

![]()