At its core, computational mathematics is about representing the infinite by the finite. Even attempting to store a single arbitrary real number requires an infinite amount of memory to store its infinitely many potentially nonrepeating digits.1It is a well-known result that a real number has a repeating decimal expansion if, and only if, it is rational. When dealing with problems in calculus, however, the problem is even more severe as we want to compute with functions on the real line. In effect, a function is an uncountably long list of real numbers, one for each value of the function’s domain. We certainly cannot store an infinite list of numbers with infinitely many digits on a finite computer!

Thus, our only hope to compute with functions is to devise some sort of finite representation for them. The most natural representation is to describe function by a list of real numbers (and then store approximations of these numbers on our computer). For instance, we may approximate the function by a polynomial ![]() of degree

of degree ![]() and then store the

and then store the ![]() coefficients

coefficients ![]() . From this list of numbers, we then can reconstitute an approximate version of the function. For instance, in our polynomial example, our approximate version of the function is just

. From this list of numbers, we then can reconstitute an approximate version of the function. For instance, in our polynomial example, our approximate version of the function is just ![]() . Naturally, there is a tradeoff between the length of our list of numbers and how accurate our approximation is. This post is about that tradeoff.

. Naturally, there is a tradeoff between the length of our list of numbers and how accurate our approximation is. This post is about that tradeoff.

The big picture idea is that the “smoother” a function is, the easier it will be to approximate it with a small number of parameters. Informally, we have the following rule of thumb: if a function ![]() on a one-dimensional domain possesses

on a one-dimensional domain possesses ![]() nice derivatives, then

nice derivatives, then ![]() can be approximated by a

can be approximated by a ![]() -parameter approximation with error decaying at least as fast as

-parameter approximation with error decaying at least as fast as ![]() . This basic result appears in many variants in approximation theory with different precise definitions of the term “

. This basic result appears in many variants in approximation theory with different precise definitions of the term “![]() nice derivatives” and “

nice derivatives” and “![]() -parameter approximation”. Let us work out the details of the approximation problem in one concrete setting using Fourier series.

-parameter approximation”. Let us work out the details of the approximation problem in one concrete setting using Fourier series.

Approximation by Fourier Series

Consider a complex-valued and ![]() -period function

-period function ![]() defined on the real line. (Note that, by a standard transformation, there are close connections between approximation of

defined on the real line. (Note that, by a standard transformation, there are close connections between approximation of ![]() -periodic functions on the whole real line and functions defined on a compact interval

-periodic functions on the whole real line and functions defined on a compact interval ![]() .2Specifically, suppose that

.2Specifically, suppose that ![]() is a function defined on

is a function defined on ![]() . Then define a function

. Then define a function ![]() on

on ![]() by

by ![]() . This linear rescaling of the domain is very simple and easy to understand. Now define a

. This linear rescaling of the domain is very simple and easy to understand. Now define a ![]() -periodic function

-periodic function ![]() on

on ![]() by

by ![]() . There are very close connections between approximation of

. There are very close connections between approximation of ![]() and

and ![]() . For example, Fourier cosine expansions of

. For example, Fourier cosine expansions of ![]() are equivalent to Chebyshev polynomial expansions of

are equivalent to Chebyshev polynomial expansions of ![]() . For more on this subject, Trefethen’s Approximation Theory and Approximation Practice is an excellent reference.) If

. For more on this subject, Trefethen’s Approximation Theory and Approximation Practice is an excellent reference.) If ![]() is square-integrable, then

is square-integrable, then ![]() possesses a Fourier expansion

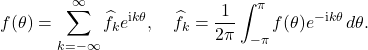

possesses a Fourier expansion

(1)

The infinite series converges in the ![]() sense, meaning that

sense, meaning that ![]() , where

, where ![]() is the truncated Fourier series

is the truncated Fourier series ![]() and

and ![]() is

is ![]() norm

norm ![]() .3One may show with considerable analysis that Fourier series converges in others senses, for example almost everywhere convergence. We also have the Plancherel theorem, which states that

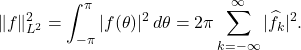

.3One may show with considerable analysis that Fourier series converges in others senses, for example almost everywhere convergence. We also have the Plancherel theorem, which states that

(2)

Note that the convergence of the Fourier series is fundamentally a statement about approximation. The fact that the Fourier series converges means that the truncated Fourier series ![]() of

of ![]() terms acts as an arbitrarily close approximate of

terms acts as an arbitrarily close approximate of ![]() (measured with the

(measured with the ![]() norm). However, the number

norm). However, the number ![]() of terms we need to store might be quite large if a large value of

of terms we need to store might be quite large if a large value of ![]() is needed for

is needed for ![]() to be small. However, as we shall soon see, if the function

to be small. However, as we shall soon see, if the function ![]() is “smooth”,

is “smooth”, ![]() will be small for even moderate values of

will be small for even moderate values of ![]() .

.

Smoothness

Often, in analysis, we refer to a function as smooth when it possesses derivatives of all orders. In one dimension, this means that the ![]() th derivative

th derivative ![]() exists for every integer

exists for every integer ![]() . In this post, we shall speak of smoothness as a more graded notion: loosely, a function

. In this post, we shall speak of smoothness as a more graded notion: loosely, a function ![]() is smoother than a function

is smoother than a function ![]() if

if ![]() possesses more derivatives than

possesses more derivatives than ![]() or the magnitude of

or the magnitude of ![]() ‘s derivatives are smaller. This conception of smoothness accords more with the plain-English definition of smoothness: the graph of a very mildly varying function

‘s derivatives are smaller. This conception of smoothness accords more with the plain-English definition of smoothness: the graph of a very mildly varying function ![]() with a discontinuity in its 33rd derivative looks much smoother to the human eye than the graph of a highly oscillatory and jagged function

with a discontinuity in its 33rd derivative looks much smoother to the human eye than the graph of a highly oscillatory and jagged function ![]() that nonetheless possesses derivatives of all orders.4One might refer to the precise concept of possessing derivatives of all orders as

that nonetheless possesses derivatives of all orders.4One might refer to the precise concept of possessing derivatives of all orders as ![]() smoothness.

smoothness.

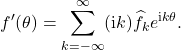

For a function defined in terms of a Fourier series, it is natural to compute its derivative by formally differentiating the Fourier series term-by-term:

(3)

This formal Fourier series converges if, and only if, the ![]() norm of this putative derivative

norm of this putative derivative ![]() , as computed with the Plancherel theorem, is finite:

, as computed with the Plancherel theorem, is finite: ![]() . This “derivative”

. This “derivative” ![]() may not be a derivative of

may not be a derivative of ![]() in the classical sense. For instance, using this definition, the absolute value function

in the classical sense. For instance, using this definition, the absolute value function ![]() possesses a derivative

possesses a derivative ![]() for

for ![]() and

and ![]() for

for ![]() . For the derivative of

. For the derivative of ![]() to exist in the sense of Eq. (3),

to exist in the sense of Eq. (3), ![]() need not be differentiable at every point, but it must define a square-integrable functions at the points where it is differentiable. We shall call the derivative

need not be differentiable at every point, but it must define a square-integrable functions at the points where it is differentiable. We shall call the derivative ![]() as given by Eq. (3) to be a weak derivative of

as given by Eq. (3) to be a weak derivative of ![]() .

.

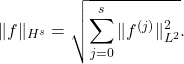

If a function ![]() has

has ![]() square-integrable weak derivatives

square-integrable weak derivatives ![]() for

for ![]() , then we say that

, then we say that ![]() belongs to the Sobolev space

belongs to the Sobolev space ![]() . The Sobolev space

. The Sobolev space ![]() is equipped with the norm

is equipped with the norm

(4)

The Sobolev norm ![]() is a quantitative measure of qualitative smoothness. The smaller the Sobolev norm

is a quantitative measure of qualitative smoothness. The smaller the Sobolev norm ![]() of

of ![]() , the smaller the derivatives of

, the smaller the derivatives of ![]() are. As we will see, we can use this to bound the approximation error.

are. As we will see, we can use this to bound the approximation error.

Smoothness and Degree of Approximation

If ![]() is approximated by

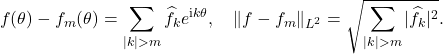

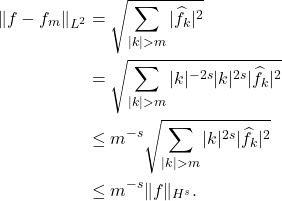

is approximated by ![]() , the error of approximation is given by

, the error of approximation is given by

(5)

Suppose that ![]() (that is,

(that is, ![]() has

has ![]() square integrable derivatives). Then we may deduce the inequality

square integrable derivatives). Then we may deduce the inequality

(6)

The first “![]() ” follows from the fact that

” follows from the fact that ![]() for

for ![]() . This very important result is a precise instantiation of our rule of thumb from earlier: if

. This very important result is a precise instantiation of our rule of thumb from earlier: if ![]() possesses

possesses ![]() nice (i.e. square-integrable) derivatives, then the (

nice (i.e. square-integrable) derivatives, then the (![]() ) approximation error for an

) approximation error for an ![]() -term Fourier approximation decays at least as fast as

-term Fourier approximation decays at least as fast as ![]() .

.

Higher Dimensions

The results for one dimension can easily be extended to consider functions ![]() defined on

defined on ![]() -dimensional space which are

-dimensional space which are ![]() -periodic in every argument.5e.g.

-periodic in every argument.5e.g. ![]() for every

for every ![]() For physics-based scientific simulation, we are often interested in

For physics-based scientific simulation, we are often interested in ![]() or

or ![]() , but for more modern problems in data science, we might be interested in very large dimensions

, but for more modern problems in data science, we might be interested in very large dimensions ![]() .

.

Letting ![]() denote the set of all

denote the set of all ![]() -tuples of integers, one can show that one has the

-tuples of integers, one can show that one has the ![]() -dimensional Fourier series

-dimensional Fourier series

(7) ![]()

Here, we denote ![]() to be the Euclidean inner product of the

to be the Euclidean inner product of the ![]() -dimensional vectors

-dimensional vectors ![]() and

and ![]() ,

, ![]() . A natural generalization of the Plancherel theorem holds as well. Let

. A natural generalization of the Plancherel theorem holds as well. Let ![]() denote the maximum of

denote the maximum of ![]() . Then, we have the truncated Fourier series

. Then, we have the truncated Fourier series ![]() . Using the same calculations from the previous section, we deduce a very similar approximation property

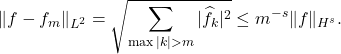

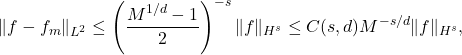

. Using the same calculations from the previous section, we deduce a very similar approximation property

(8)

There’s a pretty big catch though. The approximate function ![]() possesses

possesses ![]() terms! In order to include each of the first

terms! In order to include each of the first ![]() Fourier modes in each of

Fourier modes in each of ![]() dimensions, the number of terms

dimensions, the number of terms ![]() in our Fourier approximation must grow exponentially in

in our Fourier approximation must grow exponentially in ![]() ! In particular, the approximation error satisfies a bound

! In particular, the approximation error satisfies a bound

(9)

where ![]() is a constant depending only on

is a constant depending only on ![]() and

and ![]() .

.

This is the so-called curse of dimensionality: to approximate a function in ![]() dimensions, we need exponentially many terms in the dimension

dimensions, we need exponentially many terms in the dimension ![]() . In higher-dimensions, our rule of thumb needs to be modified: if a function

. In higher-dimensions, our rule of thumb needs to be modified: if a function ![]() on a

on a ![]() -dimensional domain possesses

-dimensional domain possesses ![]() nice derivatives, then

nice derivatives, then ![]() can be approximated by a

can be approximated by a ![]() -parameter approximation with error decaying at least as fast as

-parameter approximation with error decaying at least as fast as ![]() .

.

The Theory of Nonlinear Widths: The Speed Limit of Approximation Theory

So far, we have shown that if one approximates a function ![]() on a

on a ![]() -dimensional space by truncating its Fourier series to

-dimensional space by truncating its Fourier series to ![]() terms, the approximation error decays at least as fast as

terms, the approximation error decays at least as fast as ![]() . Can we do better than this, particularly in high-dimensions where the error decay can be very slow if

. Can we do better than this, particularly in high-dimensions where the error decay can be very slow if ![]() ?

?

One must be careful about how one phrases this question. Suppose I ask “what is the best way of approximating a function ![]() “? A subversive answer is that we may approximate

“? A subversive answer is that we may approximate ![]() by a single-parameter approximation of the form

by a single-parameter approximation of the form ![]() with

with ![]() ! Consequently, there is a one-parameter approximation procedure that approximates every function perfectly. The problem with this one-parameter approximation is obvious: the one-parameter approximation is terrible at approximating most functions different than

! Consequently, there is a one-parameter approximation procedure that approximates every function perfectly. The problem with this one-parameter approximation is obvious: the one-parameter approximation is terrible at approximating most functions different than ![]() . Thus, the question “what is the best way of approximating a particular function?” is ill-posed. We must instead ask the question “what is the best way of approximating an entire class of functions?” For us, the class of functions shall be those which are sufficiently smooth: specifically, we shall consider the class of functions whose Sobolev norm satisfies a bound

. Thus, the question “what is the best way of approximating a particular function?” is ill-posed. We must instead ask the question “what is the best way of approximating an entire class of functions?” For us, the class of functions shall be those which are sufficiently smooth: specifically, we shall consider the class of functions whose Sobolev norm satisfies a bound ![]() . Call this class

. Call this class ![]() .

.

As outlined at the beginning, an approximation procedure usually begins by taking the function ![]() and writing down a list of

and writing down a list of ![]() numbers

numbers ![]() . Then, from this list of numbers we reconstruct a function

. Then, from this list of numbers we reconstruct a function ![]() which serves as an approximation to

which serves as an approximation to ![]() . Formally, this can be viewed as a mathematical function

. Formally, this can be viewed as a mathematical function ![]() which takes

which takes ![]() to a tuple

to a tuple ![]() followed by a function

followed by a function ![]() which takes

which takes ![]() and outputs a continuous

and outputs a continuous ![]() -periodic function

-periodic function ![]() .

.

(10) ![]()

Remarkably, there is a mathematical theory which gives sharp bounds on the expressive power of any approximation procedure of this type. This theory of nonlinear widths serves as a sort of speed limit in approximation theory: no method of approximation can be any better than the theory of nonlinear widths says it can. The statement is somewhat technical, and we advise the reader to look up a precise statement of the result before using it any serious work. Roughly, the theory of nonlinear widths states that for any continuous approximation procedure6That is, an approximation procedure for which ![]() is a continuous function where

is a continuous function where ![]() is equipped with the topology defined by the norm

is equipped with the topology defined by the norm ![]() . that is able to approximate every function in

. that is able to approximate every function in ![]() with

with ![]() approximation error no more than

approximation error no more than ![]() , the number of parameters

, the number of parameters ![]() must be at least some constant multiple of

must be at least some constant multiple of ![]() . Equivalently, the worst-case approximation error for a function in

. Equivalently, the worst-case approximation error for a function in ![]() with an

with an ![]() parameter continuous approximation is at least some multiple of

parameter continuous approximation is at least some multiple of ![]() .

.

In particular, the theory of nonlinear widths states that the approximation property of truncated Fourier series are as good as any method for approximating functions in the class ![]() , as they exactly meet the “speed limit” given by the theory of nonlinear widths. Thus, approximating using truncated Fourier series is, in a certain very precise sense, as good as any other approximation technique you can think of in approximating arbitrary functions from

, as they exactly meet the “speed limit” given by the theory of nonlinear widths. Thus, approximating using truncated Fourier series is, in a certain very precise sense, as good as any other approximation technique you can think of in approximating arbitrary functions from ![]() : splines, rational functions, wavelets, and artificial neural networks must follow the same speed limit. Make no mistake, these other methods have definite advantages, but degree of approximation for the class

: splines, rational functions, wavelets, and artificial neural networks must follow the same speed limit. Make no mistake, these other methods have definite advantages, but degree of approximation for the class ![]() is not one of them. Also, note that the theory of nonlinear widths shows that the curse of dimensionality is not merely an artifact of Fourier series; it affects all high-dimensional approximation techniques.

is not one of them. Also, note that the theory of nonlinear widths shows that the curse of dimensionality is not merely an artifact of Fourier series; it affects all high-dimensional approximation techniques.

For the interested reader, see the following footnotes for two important ways one may perform approximations better than the theory of nonlinear widths within the scope of its rules.7The theory of nonlinear widths holds for continuous methods of approximation. This means that discontinuous approximation procedures may circumvent its bounds. Indeed, such discontinuous approximation procedures exist using probabilistic techniques. These methods are of questionable use in practice since discontinuous approximation procedures, by their nature, are extremely sensitive to the perturbations which are ubiquitous in performing computations on computers.8The theory of nonlinear widths holds for means of approximating the entire class ![]() . More efficient methods may exist for meaningful subclasses of

. More efficient methods may exist for meaningful subclasses of ![]() . For instance, Mhaskar and Poggio show that for functions

. For instance, Mhaskar and Poggio show that for functions ![]() satisfying a compositional property, that they can effectively be approximated by multilayer artificial neural networks.

satisfying a compositional property, that they can effectively be approximated by multilayer artificial neural networks.

Upshot: The smoother a function is, the better it can be approximated. Specifically, one can approximate a function on ![]() dimensions with

dimensions with ![]() nice derivatives with approximation error decaying with rate at least

nice derivatives with approximation error decaying with rate at least ![]() . In the case of

. In the case of ![]() -periodic functions, such an approximation can easily be obtained by truncating the function’s Fourier series. This error decay rate is the best one can hope for to approximate all functions of this type.

-periodic functions, such an approximation can easily be obtained by truncating the function’s Fourier series. This error decay rate is the best one can hope for to approximate all functions of this type.

Excellent summary beautifully written.

This is a really nice explanation for some deep mathematical ideas. I was just wondering how something like “noise” affects the approximations. This is of course such a huge topic, but I guess that gives us a new dimension to the problems–how to approximate or model the noise as well as the function. Still, if you are looking for some blog post ideas, then this question of noise would be a good one. Thanks again.

A good written blog on approximation theory. But my question is why you take a particular class of function that is Sobolev space. There are also many function space like Holder space, Besov space, Generalized Holder space, e.t.c.. Basically I want to know the motivation behind the idea why u choose the space to explain degree of approximation. And another thing can you explain the term “Degree of Approximation”.