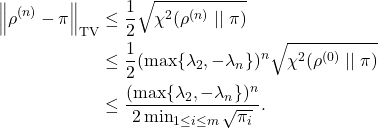

In the previous posts, we’ve been using eigenvalues to understand the mixing of reversible Markov chains. Our main convergence result was as follows:

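$$\chi^2(\rho^{(n)} \mid\mid \pi) \le \left(\max\{\lambda_2,-\lambda_m\}\right)^{2n}\chi^2(\rho^{(0)} \mid\mid \pi).$$

Here, $\rho^{(n)}$ denotes the distribution of the chain after $n$ steps and $\chi^2(\cdot \mid\mid \pi)$ is the $\chi^2$ divergence to the stationary distribution.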

Bounding the rate of convergence requires an upper bound on $\lambda_2$ and a lower bound on $\lambda_m$. In this post, we will talk about techniques for bounding $\lambda_2$. For more on the smallest eigenvalue $\lambda_m$, see the previous post.

Setting

Let’s begin by establishing some notation, mostly the same as in previous posts in this series. We work with a reversible Markov chain with transition matrix $P$ and stationary distribution $\pi$.

As in previous posts, we identify vectors $f \in \mathbb{R}^m$ and functions $f : \{1,\ldots,m\} \to \mathbb{R}$, treating them as one and the same: $f_i = f(i)$.

For a vector/function $f$, $\expect[f]$ and $\Var(f)$ denote the mean and variance with respect to the stationary distribution $\pi$:

$$\expect[f] = \sum_{i=1}^m f(i)\,\pi_i,$$

$$\Var(f) = \expect\left[(f - \expect[f])^2\right] = \sum_{i=1}^m \left(f(i) - \expect[f]\right)^2 \pi_i.$$

We shall also use expressions such as $\expect[f(x)]$ and $\Var(f(x))$, where $x \sim \pi$, to denote these same quantities.

We denote the eigenvalues of the transition matrix by $1 = \lambda_1 \ge \lambda_2 \ge \cdots \ge \lambda_m \ge -1$. The associated eigenvectors (eigenfunctions) $\varphi_1,\ldots,\varphi_m$ are orthonormal in the $\pi$-inner product $\langle f, g\rangle = \expect_{x\sim\pi}[f(x)g(x)] = \sum_{i=1}^m f(i)g(i)\pi_i$:

$$\langle \varphi_i ,\varphi_j\rangle = \begin{cases}1, & i = j, \\0, & i \ne j.\end{cases}$$
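To make this notation concrete, here is a minimal sketch assuming NumPy. The example chain is our own illustrative choice (a random walk on a small complete graph with random symmetric edge weights), and the helper names `reversible_chain`, `inner`, and `eigendecomposition` are hypothetical. The sketch computes $\pi$-orthonormal eigenvectors by diagonalizing the symmetric matrix $D^{1/2} P D^{-1/2}$ with $D = \operatorname{diag}(\pi)$, then checks orthonormality in the $\pi$-inner product.

```python
import numpy as np


def reversible_chain(W):
    """Random walk on a weighted undirected graph with symmetric weights W.

    P_ij = W_ij / d_i and pi_i = d_i / sum(d), so pi_i P_ij = W_ij / sum(d)
    is symmetric in (i, j): the chain is reversible with respect to pi.
    """
    W = np.asarray(W, dtype=float)
    d = W.sum(axis=1)
    return W / d[:, None], d / d.sum()


def inner(f, g, pi):
    """pi-inner product <f, g> = sum_i f(i) g(i) pi_i."""
    return float(np.sum(f * g * pi))


def eigendecomposition(P, pi):
    """Eigenvalues (descending) and pi-orthonormal eigenvectors of a reversible P."""
    sq = np.sqrt(pi)
    S = sq[:, None] * P / sq[None, :]       # D^{1/2} P D^{-1/2} is symmetric
    lam, U = np.linalg.eigh((S + S.T) / 2)  # orthonormal eigenvectors, ascending order
    lam, U = lam[::-1], U[:, ::-1]          # reorder so lam[0] >= lam[1] >= ...
    return lam, U / sq[:, None]             # phi = D^{-1/2} U


rng = np.random.default_rng(0)
A = rng.random((5, 5))
P, pi = reversible_chain(A + A.T)
lam, phi = eigendecomposition(P, pi)

# Gram matrix of the eigenfunctions in the pi-inner product
G = np.array([[inner(phi[:, i], phi[:, j], pi) for j in range(5)] for i in range(5)])
print(np.allclose(G, np.eye(5)))        # True: orthonormal in <., .>
print(np.allclose(P @ phi, phi * lam))  # True: P phi_i = lambda_i phi_i
print(np.isclose(lam[0], 1.0))          # True: the largest eigenvalue is 1
```

Working with the symmetrized matrix lets us use `np.linalg.eigh`, which returns real eigenvalues and orthonormal eigenvectors; rescaling by $D^{-1/2}$ turns Euclidean orthonormality into $\pi$-orthonormality.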

Variance and Local Variance

To discover methods for bounding $\lambda_2$, we begin by investigating a seemingly simple question:

How variable is the output of a function $f$?

There are two natural quantities which provide answers to this question: the variance and the local variance. Poincaré inequalities, the main subject of this post, establish a relation between these two numbers. As a consequence, Poincaré inequalities will provide a bound on $\lambda_2$.

Variance

We begin with the first of our two main characters, the variance $\Var(f)$. The variance is a very familiar measure of variation, as it is defined for any random variable. It measures the average squared deviation of $f(x)$ from its mean, where $x$ is drawn from the stationary distribution $\pi$.

Another helpful formula for the variance is the exchangeable pairs formula:

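$$\Var(f) = \frac{1}{2}\expect\left[(f(x)-f(y))^2\right], \quad \text{where } x, y \sim \pi \text{ are independent}.$$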
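As a quick numerical check of the exchangeable pairs formula, here is a minimal sketch assuming NumPy. The distribution `pi` and the function `f` are arbitrary illustrative values, not taken from the post. Expanding the square shows why the formula holds: $\frac{1}{2}\expect[(f(x)-f(y))^2] = \expect[f^2] - (\expect[f])^2$ when $x$ and $y$ are independent draws from $\pi$.

```python
import numpy as np

# Illustrative stationary distribution on m = 4 states and a function f
pi = np.array([0.1, 0.2, 0.3, 0.4])
f = np.array([3.0, -1.0, 2.0, 0.5])

# Variance as the average squared deviation from the mean
mean = np.sum(f * pi)
var_direct = np.sum((f - mean) ** 2 * pi)

# Exchangeable pairs: average of (f(x) - f(y))^2 / 2 over independent x, y ~ pi
diff_sq = (f[:, None] - f[None, :]) ** 2
var_pairs = 0.5 * np.sum(diff_sq * pi[:, None] * pi[None, :])

print(np.isclose(var_direct, var_pairs))  # True
```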

Local Variance

The exchangeable pairs formula shows that variance is a measure of the global variability of the function: It measures the amount $f$ varies across locations $x$ and $y$ sampled randomly from the entire set of possible states $\{1,\ldots,m\}$.

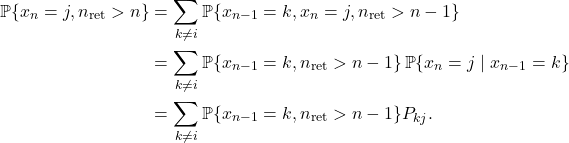

The local variance measures how much $f$ varies between points $x$ and $x'$ which are separated by just one step of the Markov chain, thus providing a more local measure of variability. Let $x$ be sampled from the stationary distribution, and let $x'$ denote one step of the Markov chain after $x$. The local variance is

$$\mathcal{E}(f) = \frac{1}{2}\expect\left[(f(x)-f(x'))^2\right], \quad \text{where } x \sim \pi \text{ and } \prob\{x' = j \mid x = i\} = P_{ij}.$$

An important note: The variance of a function $f$ depends only on the stationary distribution $\pi$. By contrast, the local variance depends on the Markov transition matrix $P$.
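To illustrate that the variance depends only on $\pi$ while the local variance depends on $P$, here is a minimal sketch assuming NumPy. It compares two chains of our own choosing that share the uniform stationary distribution: a lazy random walk on a cycle, and a chain that jumps to a uniformly random state at every step. The test function $f(i) = \cos(2\pi i/m)$ and the helper names are illustrative assumptions.

```python
import numpy as np


def variance(f, pi):
    mean = np.sum(f * pi)
    return float(np.sum((f - mean) ** 2 * pi))


def local_variance(f, P, pi):
    """E(f) = 1/2 * sum_{i,j} (f(i) - f(j))^2 pi_i P_ij."""
    diff_sq = (f[:, None] - f[None, :]) ** 2
    return float(0.5 * np.sum(diff_sq * pi[:, None] * P))


m = 20
pi = np.full(m, 1.0 / m)  # uniform stationary distribution for both chains

# Chain 1: lazy walk on a cycle (stay w.p. 1/2, step left or right w.p. 1/4 each)
P_cycle = 0.5 * np.eye(m)
for i in range(m):
    P_cycle[i, (i + 1) % m] += 0.25
    P_cycle[i, (i - 1) % m] += 0.25

# Chain 2: jump to a uniformly random state at every step
P_jump = np.full((m, m), 1.0 / m)

f = np.cos(2 * np.pi * np.arange(m) / m)  # a slowly varying function on the cycle

print(variance(f, pi))                 # depends only on pi, so it is the same for both chains
print(local_variance(f, P_cycle, pi))  # small: f barely changes over one step
print(local_variance(f, P_jump, pi))   # equals variance(f, pi) for this chain
```

For the jump chain, $x'$ is an independent draw from $\pi$, so the local variance coincides with the exchangeable pairs formula for the variance.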

Poincaré Inequalities

If $f$ does not vary much over a single step of the Markov chain, then it seems reasonable to expect that it doesn’t vary much globally. This intuition is made quantitative using Poincaré inequalities.

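Definition (Poincaré inequality). A Markov chain is said to satisfy a Poincaré inequality with constant $\alpha$ if

$$\Var(f) \le \alpha\,\mathcal{E}(f) \quad \text{for all functions } f : \{1,\ldots,m\} \to \mathbb{R}. \qquad (1)$$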

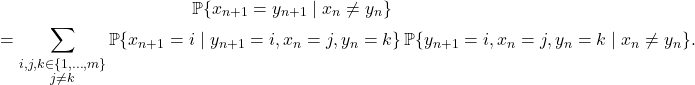

Poincaré Inequalities and Mixing

Poincaré inequalities are intimately related to the speed of mixing of a Markov chain.

To see why, consider a function $f$ with small local variance. Because $f$ has small local variance, $f(x_0)$ is close to $f(x_1)$, $f(x_1)$ is close to $f(x_2)$, etc.; the function $f$ does not change much over a single step of the Markov chain. Does this mean that the (global) variance of $f$ will also be small? Not necessarily. If the Markov chain takes a long time to mix, the small local variance can accumulate to a large global variance over many steps of the Markov chain. Thus, a slowly mixing chain has a large Poincaré constant $\alpha$. Conversely, if the chain mixes rapidly, the Poincaré constant $\alpha$ is small.
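Since (1) must hold for every $f$, any single test function gives a lower bound $\alpha \ge \Var(f)/\mathcal{E}(f)$. The following minimal sketch, assuming NumPy, uses this to watch small local variance accumulate on a slowly mixing chain. The chain (a lazy random walk on a cycle of $m$ states) and the test function $f(i) = \cos(2\pi i/m)$ are our own illustrative choices: as $m$ grows the walk mixes more slowly, and the ratio grows roughly like $m^2$.

```python
import numpy as np


def lazy_cycle(m):
    """Lazy random walk on a cycle of m states (uniform stationary distribution)."""
    P = 0.5 * np.eye(m)
    for i in range(m):
        P[i, (i + 1) % m] += 0.25
        P[i, (i - 1) % m] += 0.25
    return P


def variance(f, pi):
    mean = np.sum(f * pi)
    return float(np.sum((f - mean) ** 2 * pi))


def local_variance(f, P, pi):
    diff_sq = (f[:, None] - f[None, :]) ** 2
    return float(0.5 * np.sum(diff_sq * pi[:, None] * P))


ratios = {}
for m in [10, 20, 40, 80]:
    P = lazy_cycle(m)
    pi = np.full(m, 1.0 / m)
    f = np.cos(2 * np.pi * np.arange(m) / m)  # slowly varying test function
    # Any Poincare constant alpha must satisfy alpha >= Var(f) / E(f)
    ratios[m] = variance(f, pi) / local_variance(f, P, pi)
    print(m, round(ratios[m], 1))  # the ratio roughly quadruples each time m doubles
```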

This relation between mixing and Poincaré inequalities is quantified by the following theorem:

Theorem (Poincaré inequalities from eigenvalues). The Markov chain satisfies a Poincaré inequality with constant $\alpha = 1/(1-\lambda_2)$. This is the smallest possible Poincaré constant for the Markov chain.
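As a numerical sanity check of the theorem, here is a minimal sketch assuming NumPy. The chain is our own illustrative choice (a random walk on a complete graph with random symmetric edge weights; seed and size are arbitrary). It checks that $\alpha = 1/(1-\lambda_2)$ satisfies (1) for many random test functions, and that the eigenfunction $\varphi_2$ attains equality.

```python
import numpy as np

rng = np.random.default_rng(1)
m = 6
A = rng.random((m, m))
W = A + A.T                     # symmetric positive edge weights (illustrative)
d = W.sum(axis=1)
P = W / d[:, None]              # reversible random walk on a weighted graph
pi = d / d.sum()                # its stationary distribution

# pi-orthonormal eigenfunctions via the symmetric matrix D^{1/2} P D^{-1/2}
sq = np.sqrt(pi)
S = sq[:, None] * P / sq[None, :]
lam, U = np.linalg.eigh((S + S.T) / 2)
lam, U = lam[::-1], U[:, ::-1]  # descending: lam[0] = 1, lam[1] = lambda_2
phi = U / sq[:, None]

alpha = 1.0 / (1.0 - lam[1])    # Poincare constant from the theorem


def variance(f):
    return float(np.sum((f - np.sum(f * pi)) ** 2 * pi))


def local_variance(f):
    return float(0.5 * np.sum((f[:, None] - f[None, :]) ** 2 * pi[:, None] * P))


# Largest ratio Var(f) / E(f) over 1000 random test functions: never exceeds alpha
worst = max(variance(f) / local_variance(f) for f in rng.standard_normal((1000, m)))
print(worst <= alpha + 1e-9)  # True
# The eigenfunction phi_2 attains equality, so no smaller constant works
print(np.isclose(variance(phi[:, 1]), alpha * local_variance(phi[:, 1])))  # True
```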

One way to interpret this result is that the eigenvalue $\lambda_2$ gives you Poincaré inequality (1). But we can flip this result around: Poincaré inequalities (1) establish bounds on the eigenvalue $\lambda_2$.

Corollary (Eigenvalue bounds from Poincaré inequalities). If the Markov chain satisfies a Poincaré inequality (1) for a certain constant $\alpha$, then

$$\lambda_2 \le 1 - \frac{1}{\alpha}.$$
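The corollary is the theorem read backwards. A short derivation, using only the theorem's claim that $1/(1-\lambda_2)$ is the smallest admissible constant (read as $+\infty$ when $\lambda_2 = 1$, so a finite $\alpha$ forces $\lambda_2 < 1$): any constant $\alpha > 0$ for which (1) holds satisfies

$$\alpha \ge \frac{1}{1-\lambda_2} \quad\Longleftrightarrow\quad 1-\lambda_2 \ge \frac{1}{\alpha} \quad\Longleftrightarrow\quad \lambda_2 \le 1 - \frac{1}{\alpha},$$

where the first equivalence multiplies through by the positive quantity $(1-\lambda_2)/\alpha$.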

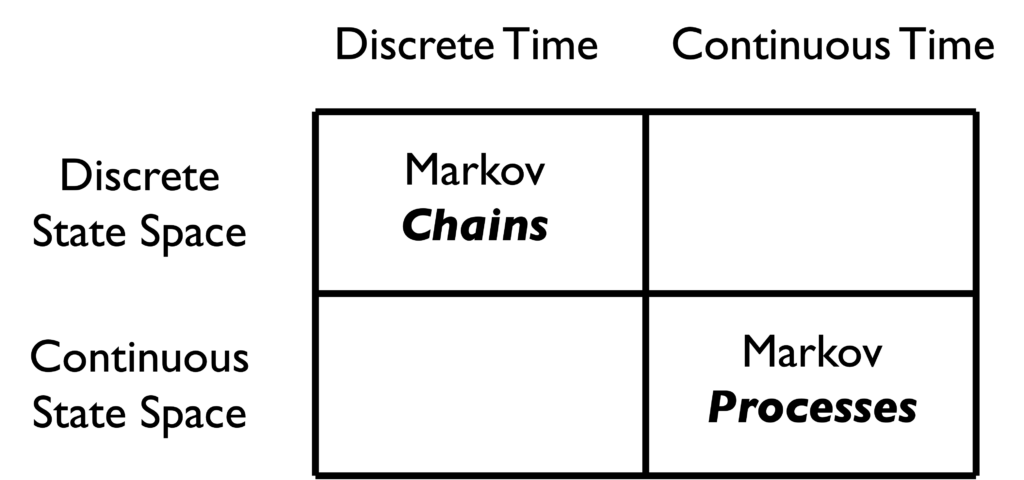

A View to the Continuous Setting

For a particularly vivid example of a Poincaré inequality, it will be helpful to take a brief detour to the world of continuous Markov processes. This series has, to this point, exclusively focused on Markov chains $x_0, x_1, x_2, \ldots$ that have finitely many possible states and are indexed by discrete times $n = 0, 1, 2, \ldots$. We can generalize Markov chains by lifting both of these restrictions, considering Markov processes $(x_t)_{t \ge 0}$ which take values in a continuous space (such as the real line $\mathbb{R}$) and are indexed by continuous times $t \ge 0$.

The mathematical details for Markov processes are a lot more complicated than for their Markov chain siblings, so we will keep it light on details.

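For this example, our Markov process will be the Ornstein–Uhlenbeck process. This process has the somewhat mysterious form

$$x_t = e^{-t}x_0 + e^{-t}B_{e^{2t}-1},$$

where $(B_s)_{s \ge 0}$ is a standard Brownian motion, independent of $x_0$. Conditional on its starting value $x_0$, the Ornstein–Uhlenbeck process $x_t$ has a Gaussian distribution with mean $e^{-t}x_0$ and variance $1-e^{-2t}$.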

From this observation, it appears that the stationary distribution of the Ornstein–Uhlenbeck process is the standard Gaussian distribution. Indeed, this is the case, and the Ornstein–Uhlenbeck process converges to stationarity exponentially fast.
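To see this convergence numerically, here is a minimal sketch assuming NumPy. Rather than simulating Brownian paths, it samples $x_t$ exactly from the Gaussian conditional distribution of the Ornstein–Uhlenbeck process (mean $e^{-t}x_0$, variance $1-e^{-2t}$). The helper `ou_step`, the starting point $x_0 = 5$, the sample size, the times, and the seed are all illustrative choices.

```python
import numpy as np


def ou_step(x0, t, rng):
    """Exact sample of x_t given x_0 for the Ornstein-Uhlenbeck process:
    Gaussian with mean exp(-t) * x0 and variance 1 - exp(-2t)."""
    noise = rng.standard_normal(np.shape(x0))
    return np.exp(-t) * x0 + np.sqrt(1.0 - np.exp(-2.0 * t)) * noise


rng = np.random.default_rng(42)
x0 = np.full(200_000, 5.0)  # start far from the stationary distribution
for t in [0.5, 2.0, 8.0]:
    xt = ou_step(x0, t, rng)
    print(t, xt.mean().round(3), xt.var().round(3))
# The sample mean decays like 5 * exp(-t) and the sample variance approaches 1:
# the samples approach a standard Gaussian exponentially fast in t.
```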

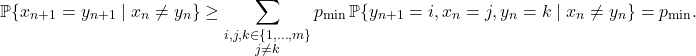

Since we have exponential convergence to stationarity (and, as can be checked, the Ornstein–Uhlenbeck process is reversible in the appropriate sense), there’s a Poincaré inequality lurking in the background, known as the Gaussian Poincaré inequality. Letting $z$ denote a standard Gaussian random variable, the Gaussian Poincaré inequality states that

$$\Var(f(z)) \le \expect\left[(f'(z))^2\right] \quad \text{for every differentiable function } f : \mathbb{R} \to \mathbb{R}. \qquad (2)$$

The Gaussian Poincaré inequality presents a very clear demonstration of what a Poincaré inequality is: The global variance of the function $f$ is controlled by its local variability, here quantified by the expected squared derivative $\expect[(f'(z))^2]$.

Our main interest in Poincaré inequalities in this post is instrumental: we seek to use Poincaré inequalities to understand the mixing properties of Markov chains. But the Gaussian Poincaré inequality demonstrates that Poincaré inequalities are also interesting on their own terms. The inequality (2) is a useful tool for bounding the variance of a function of a Gaussian random variable. As an immediate example, observe that if a function $f$ has derivative bounded by a constant $L$, that is, $|f'(z)| \le L$ for all $z$, then (2) gives

$$\Var(f(z)) \le \expect\left[(f'(z))^2\right] \le L^2.$$
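Inequality (2) can also be checked numerically. The sketch below assumes NumPy and uses its probabilists' Gauss-Hermite rule `numpy.polynomial.hermite_e.hermegauss`, whose weights integrate against $e^{-z^2/2}$; dividing them by $\sqrt{2\pi}$ turns the rule into an approximation of $\expect[g(z)]$ for $z \sim N(0,1)$. The test functions are our own choices: $\sin z$ has $|f'(z)| = |\cos z| \le 1$, $f(z) = z$ attains equality in (2), and $z^2$ gives a strict inequality.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss

# Probabilists' Gauss-Hermite rule for the weight exp(-z^2 / 2)
nodes, weights = hermegauss(60)
weights = weights / np.sqrt(2 * np.pi)  # now sum(weights * g(nodes)) ~ E[g(z)], z ~ N(0, 1)


def gaussian_expectation(g):
    return float(np.sum(weights * g(nodes)))


def check_poincare(f, fprime):
    """Return (Var f(z), E[f'(z)^2]) for a standard Gaussian z."""
    mean = gaussian_expectation(f)
    var = gaussian_expectation(lambda z: (f(z) - mean) ** 2)
    energy = gaussian_expectation(lambda z: fprime(z) ** 2)
    return var, energy


for name, f, fp in [
    ("sin z", np.sin, np.cos),                     # |f'| <= 1, so Var <= 1
    ("z", lambda z: z, lambda z: np.ones_like(z)),  # equality case
    ("z^2", lambda z: z ** 2, lambda z: 2 * z),
]:
    var, energy = check_poincare(f, fp)
    print(f"{name}: Var = {var:.4f} <= E[f'(z)^2] = {energy:.4f}")
```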

Poincaré Inequalities and Eigenvalues

For the remainder of this post, we will develop the connection between Poincaré inequalities and eigenvalues, leading to a proof of our main theorem:

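Theorem (Poincaré inequalities from eigenvalues). The Markov chain satisfies a Poincaré inequality with constant $\alpha = 1/(1-\lambda_2)$. That is,

$$\Var(f) \le \frac{1}{1-\lambda_2}\,\mathcal{E}(f) \quad \text{for all functions } f. \qquad (3)$$

There exists a function $f$ for which equality is attained.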

We begin by showing that it suffices to consider mean-zero functions $f$ to prove (3). Next, we derive formulas for $\Var(f)$ and $\mathcal{E}(f)$ using the $\pi$-inner product $\langle \cdot, \cdot \rangle$. We conclude by expanding $f$ in eigenvectors of $P$ and deriving the Poincaré inequality (3).

Shift to Mean-Zero

To prove the Poincaré inequality (3), we are free to assume that $f$ has mean zero, $\expect[f] = 0$. Indeed, both the variance $\Var(f)$ and local variance $\mathcal{E}(f)$ don’t change if we shift $f$ by a constant $c$. That is, letting $f + c$ denote the function

$$(f + c)(i) = f(i) + c \quad \text{for } i = 1, \ldots, m,$$

we have

$$\Var(f + c) = \Var(f), \qquad \mathcal{E}(f + c) = \mathcal{E}(f).$$

Therefore, if (3) holds for the mean-zero function $f - \expect[f]$, it also holds for $f$.
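A minimal numerical check of this shift invariance, assuming NumPy: the chain (a random walk on a complete graph with random symmetric weights), the function `f`, and the shift `c = 7.5` are arbitrary illustrative choices. The local variance is unchanged because every difference $f(i) - f(j)$ is unchanged.

```python
import numpy as np

rng = np.random.default_rng(3)
m = 5
A = rng.random((m, m))
W = A + A.T                    # symmetric weights (illustrative)
d = W.sum(axis=1)
P = W / d[:, None]             # reversible random walk on a weighted graph
pi = d / d.sum()


def variance(f):
    return float(np.sum((f - np.sum(f * pi)) ** 2 * pi))


def local_variance(f):
    return float(0.5 * np.sum((f[:, None] - f[None, :]) ** 2 * pi[:, None] * P))


f = rng.standard_normal(m)
c = 7.5
print(np.isclose(variance(f + c), variance(f)))              # True
print(np.isclose(local_variance(f + c), local_variance(f)))  # True
g = f - np.sum(f * pi)         # the mean-zero shift used in the proof
print(np.isclose(np.sum(g * pi), 0.0))                       # True
```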

Variance

Our strategy for proving the main theorem will be to develop a more linear algebraic formula for the variance and local variance. Let’s begin with the variance.

Assume $\expect[f] = 0$. Then the variance is

$$\Var(f) = \expect[f^2] = \sum_{i=1}^m (f(i))^2\,\pi_i = \langle f, f\rangle.$$

Local Variance

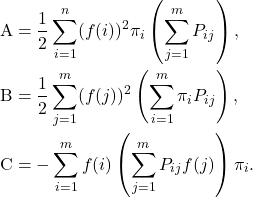

Now we derive a formula for the local variance:

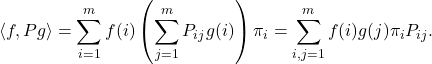

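Expanding the square in the definition

$$\mathcal{E}(f) = \frac{1}{2} \sum_{i,j=1}^m (f(i)-f(j))^2 \pi_iP_{ij}$$

gives three terms:

$$\mathcal{E}(f) = \underbrace{\frac{1}{2}\sum_{i,j=1}^m (f(i))^2\pi_iP_{ij}}_{\rm A} + \underbrace{\frac{1}{2}\sum_{i,j=1}^m (f(j))^2\pi_iP_{ij}}_{\rm B} - \underbrace{\sum_{i,j=1}^m f(i)f(j)\,\pi_iP_{ij}}_{\rm C}.$$

Let’s take each of these terms one-by-one. For ${\rm A}$, recognize that $\sum_{j=1}^m P_{ij} = 1$. Thus,

$${\rm A} = \frac{1}{2}\sum_{i=1}^m (f(i))^2\pi_i\left(\sum_{j=1}^m P_{ij}\right) = \frac{1}{2}\sum_{i=1}^m (f(i))^2\pi_i = \frac{1}{2}\langle f, f\rangle.$$

For ${\rm B}$, use reversibility, $\pi_iP_{ij} = \pi_jP_{ji}$, and then the same row-sum identity:

$${\rm B} = \frac{1}{2} \sum_{j=1}^m (f(j))^2 \left(\sum_{i=1}^m \pi_j P_{ji} \right) = \frac{1}{2} \sum_{j=1}^m (f(j))^2 \pi_j = \frac{1}{2} \langle f, f\rangle.$$

For ${\rm C}$, recognize that $\sum_{j=1}^m P_{ij}f(j) = (Pf)(i)$:

$${\rm C} = \sum_{i=1}^m f(i)\left(\sum_{j=1}^m P_{ij}f(j)\right)\pi_i = \sum_{i=1}^m f(i)\,(Pf)(i)\,\pi_i = \langle f, Pf\rangle.$$

Combining,

$$\mathcal{E}(f) = {\rm A} + {\rm B} - {\rm C} = \langle f, f\rangle - \langle f, Pf\rangle = \langle f, (I-P)f\rangle.$$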
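The term-by-term computation of the local variance can be verified numerically. This is a minimal sketch assuming NumPy; the reversible chain (a random walk on a complete graph with random symmetric weights), the seed, and the helper `inner` are illustrative choices. Entry $(i, j)$ of the array `weights` holds $\pi_i P_{ij}$, so each term is a direct transcription of the corresponding double sum.

```python
import numpy as np

rng = np.random.default_rng(4)
m = 6
A = rng.random((m, m))
W = A + A.T                     # symmetric weights (illustrative)
d = W.sum(axis=1)
P = W / d[:, None]              # reversible: pi_i P_ij = pi_j P_ji
pi = d / d.sum()


def inner(f, g):
    """pi-inner product <f, g> = sum_i f(i) g(i) pi_i."""
    return float(np.sum(f * g * pi))


f = rng.standard_normal(m)
f = f - inner(f, np.ones(m))    # shift to mean zero: E[f] = <f, 1> = 0
var = float(np.sum((f - np.sum(f * pi)) ** 2 * pi))

# The three terms from expanding (f(i) - f(j))^2; entry (i, j) carries pi_i P_ij
weights = pi[:, None] * P
term_A = 0.5 * np.sum(f[:, None] ** 2 * weights)
term_B = 0.5 * np.sum(f[None, :] ** 2 * weights)
term_C = np.sum(f[:, None] * f[None, :] * weights)
local_var = 0.5 * np.sum((f[:, None] - f[None, :]) ** 2 * weights)

print(np.isclose(term_A, 0.5 * inner(f, f)))                 # True: rows of P sum to 1
print(np.isclose(term_B, 0.5 * inner(f, f)))                 # True: uses reversibility
print(np.isclose(term_C, inner(f, P @ f)))                   # True: C = <f, Pf>
print(np.isclose(local_var, inner(f, f) - inner(f, P @ f)))  # True: E(f) = <f, (I - P) f>
print(np.isclose(var, inner(f, f)))                          # True: Var(f) = <f, f> for mean-zero f
```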

Conclusion

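The Poincaré inequality (3) for a mean-zero function $f$,

$$\Var(f) \le \frac{1}{1-\lambda_2}\,\mathcal{E}(f),$$

is thus equivalent to

$$\langle f, f\rangle \le \frac{1}{1-\lambda_2}\,\langle f, (I-P)f\rangle.$$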

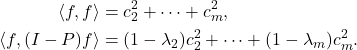

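Consider a decomposition of $f$ as a linear combination of $P$’s eigenvectors:

$$f = \sum_{i=1}^m c_i\varphi_i, \qquad c_i = \langle f, \varphi_i\rangle.$$

Since the constant function $\mathbf{1}$ has unit norm and is an eigenvector of $P$ with eigenvalue $\lambda_1 = 1$, we may take $\varphi_1 = \mathbf{1}$; then $c_1 = \langle f, \mathbf{1}\rangle = \expect[f] = 0$ because $f$ has mean zero.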

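Using the orthonormality of $\varphi_1,\ldots,\varphi_m$ under the $\pi$-inner product and the eigenvalue relation $P\varphi_i = \lambda_i\varphi_i$, we have that

$$\langle f, f\rangle = \sum_{i=2}^m c_i^2, \qquad \langle f, (I-P)f\rangle = \sum_{i=2}^m (1-\lambda_i)\,c_i^2.$$

Thus,

$$\mathcal{E}(f) = \sum_{i=2}^m (1-\lambda_i)\,c_i^2 \ge (1-\lambda_2)\sum_{i=2}^m c_i^2 = (1-\lambda_2)\Var(f), \qquad (4)$$

where we used $\lambda_i \le \lambda_2$ for all $i \ge 2$. Dividing (4) by $1-\lambda_2$ (when $\lambda_2 < 1$) gives the Poincaré inequality (3). Equality holds for $f = \varphi_2$, for which $c_2 = 1$ and all other coefficients vanish: $\Var(\varphi_2) = 1$ and $\mathcal{E}(\varphi_2) = 1-\lambda_2$. Hence no constant smaller than $1/(1-\lambda_2)$ works.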
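The eigenvector expansion in this proof can be traced numerically. Below is a minimal sketch assuming NumPy, again on an illustrative reversible chain of our own choosing (a random walk on a complete graph with random symmetric weights). It computes the coefficients $c_i = \langle f, \varphi_i\rangle$ of a mean-zero $f$ and checks each identity used above.

```python
import numpy as np

rng = np.random.default_rng(5)
m = 6
A = rng.random((m, m))
W = A + A.T                     # symmetric weights (illustrative)
d = W.sum(axis=1)
P = W / d[:, None]
pi = d / d.sum()

# pi-orthonormal eigenfunctions of P, eigenvalues in descending order
sq = np.sqrt(pi)
S = sq[:, None] * P / sq[None, :]
lam, U = np.linalg.eigh((S + S.T) / 2)
lam, U = lam[::-1], U[:, ::-1]
phi = U / sq[:, None]

f = rng.standard_normal(m)
f = f - np.sum(f * pi)          # mean zero
c = phi.T @ (f * pi)            # coefficients c_i = <f, phi_i>

var = np.sum(f ** 2 * pi)
local_var = 0.5 * np.sum((f[:, None] - f[None, :]) ** 2 * pi[:, None] * P)

print(np.isclose(c[0], 0.0))                                     # True: c_1 = +-E[f] = 0
print(np.isclose(var, np.sum(c[1:] ** 2)))                        # True: Var(f) = sum c_i^2
print(np.isclose(local_var, np.sum((1 - lam[1:]) * c[1:] ** 2)))  # True: E(f) = sum (1 - lambda_i) c_i^2
print(var <= local_var / (1 - lam[1]) + 1e-12)                    # True: inequality (3)
```

The first eigenfunction returned by `eigh` may be $-\mathbf{1}$ rather than $\mathbf{1}$; either sign gives $c_1 = 0$ for a mean-zero $f$.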


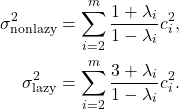

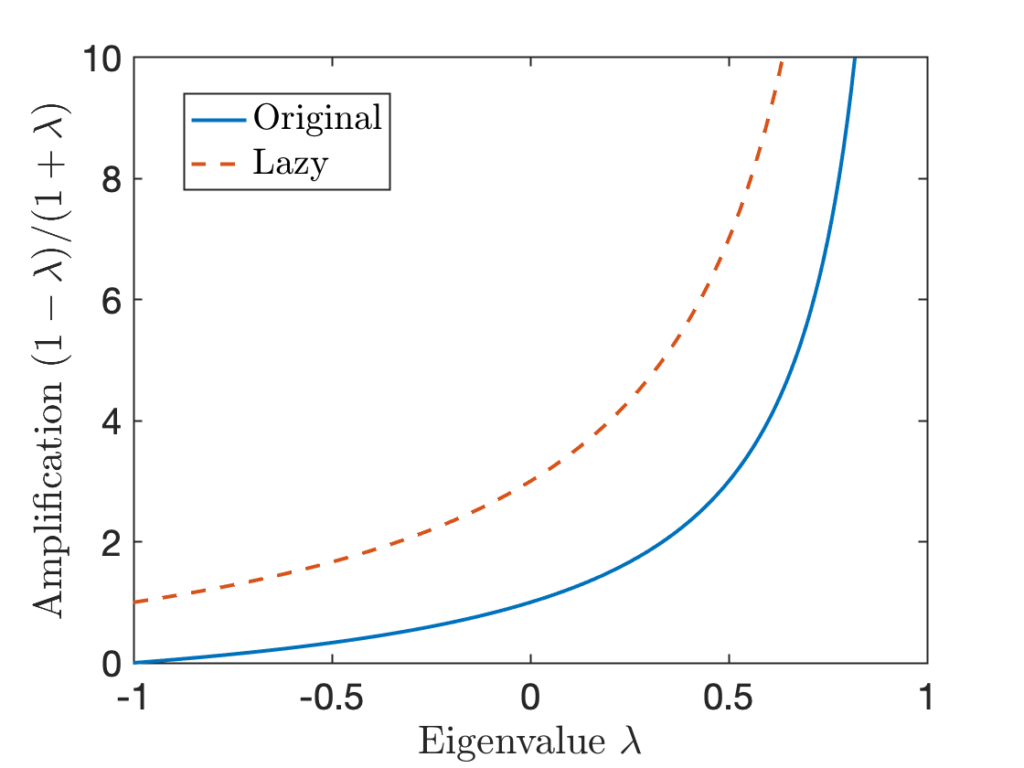
