For this summer, I’ve decided to open up another little mini-series on this blog called Markov Musings about the mathematical analysis of Markov chains, jumping off from my previous post on the subject. My main goal in writing this is to learn the material for myself, and I hope that what I produce is useful to others. My main resources are:

- The book Markov Chains and Mixing Times by Levin, Peres, and Wilmer;

- Lecture notes and videos by theoretical computer scientists Sinclair, Oveis Gharan, O’Donnell, and Schulman; and

- These notes by Rob Webber, for a complementary perspective from a scientific computing point of view.

Be warned, these posts will be more mathematical in nature than most of the material on my blog.

In my previous post on Markov chains, we discussed the fundamental theorem of Markov chains. Here is a slightly stronger version:

Theorem (fundamental theorem of Markov chains). A primitive Markov chain on a finite state space has a stationary distribution $\pi$. When initialized from any starting distribution $\rho^{(0)}$, the distributions $\rho^{(0)}, \rho^{(1)}, \rho^{(2)}, \ldots$ of the chain at times $0, 1, 2, \ldots$ converge at an exponential rate to $\pi$.

My goal in this post will be to provide a proof of this fact using the method of couplings, adapted from the notes of Sinclair and Oveis Gharan. I like this proof because it feels very probabilistic (as opposed to more linear algebraic proofs of the fundamental theorem).

Here, and throughout, we write $A > 0$ for a matrix or vector $A$ if all of its entries are strictly positive. Recall that a Markov chain with transition matrix $P$ is primitive if there exists $t \ge 1$ for which $P^t > 0$. For this post, all Markov chains will have state space $\{1, 2, \ldots, m\}$.

Total Variation Distance

In order to quantify the rate of Markov chain convergence, we need a way of quantifying the closeness of two probability distributions. This motivates the following definition:

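Definition (total variation distance). The total variation distance between probability distributions $\rho$ and $\sigma$ on $\{1, 2, \ldots, m\}$ is the maximum difference between the probability of an event $S$ under $\rho$ and under $\sigma$:

\[\norm{\rho - \sigma}_{\rm TV} = \max_{S \subseteq \{1, 2, \ldots, m\}} |\rho(S) - \sigma(S)|, \quad \text{where } \rho(S) = \sum_{i \in S} \rho_i.\]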

The total variation distance is always between $0$ and $1$. It is zero only when $\rho$ and $\sigma$ are the same distribution. It is one only when $\rho$ and $\sigma$ have disjoint supports, that is, when there is no $i$ for which $\rho_i > 0$ and $\sigma_i > 0$.

The total variation distance is a very strict way of comparing two probability distributions. Sinclair’s notes provide a vivid example. Suppose that $\rho$ denotes the uniform distribution on all possible ways of shuffling a deck of $k$ cards, and $\sigma$ denotes the uniform distribution on all ways of shuffling $k$ cards with the ace of spades at the top. Then the total variation distance between $\rho$ and $\sigma$ is $1 - 1/k$: the maximum in the definition is attained by the event that the ace of spades is on top, which has probability $1$ under $\sigma$ but only $1/k$ under $\rho$. Thus, despite these distributions seeming quite similar to us, $\rho$ and $\sigma$ are almost as far apart as possible in total variation distance. There are a number of alternative ways of measuring the closeness of probability distributions, some of which are less severe.
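To make the definition concrete, here is a small Python sketch (standard library only) that computes the total variation distance in two ways: directly from the definition as a maximum over all $2^m$ events, and via the well-known identity $\norm{\rho - \sigma}_{\rm TV} = \frac{1}{2}\sum_i |\rho_i - \sigma_i|$, which the post does not state. The function names `tv_max_over_events`, `tv_half_l1`, and `deck_example` are hypothetical, and the deck is modeled as the orderings of `k` cards labeled `0, ..., k-1`, with card `0` standing in for the ace of spades.

```python
from fractions import Fraction
from itertools import chain, combinations, permutations


def tv_max_over_events(rho, sigma):
    """TV distance straight from the definition: max over events S of |rho(S) - sigma(S)|.

    Enumerates all 2^m subsets, so it is only practical for tiny state spaces.
    """
    states = range(len(rho))
    events = chain.from_iterable(combinations(states, r) for r in range(len(rho) + 1))
    return max(abs(sum(rho[i] for i in S) - sum(sigma[i] for i in S)) for S in events)


def tv_half_l1(rho, sigma):
    """Equivalent formula: half the l1 distance between the two probability vectors."""
    return sum(abs(r - s) for r, s in zip(rho, sigma)) / 2


def deck_example(k):
    """Hypothetical model of the deck example: uniform shuffles vs. shuffles with card 0 on top."""
    shuffles = list(permutations(range(k)))            # all k! orderings form the state space
    num_top = sum(1 for p in shuffles if p[0] == 0)    # orderings with card 0 on top
    rho = [Fraction(1, len(shuffles))] * len(shuffles)
    sigma = [Fraction(1, num_top) if p[0] == 0 else Fraction(0) for p in shuffles]
    return tv_half_l1(rho, sigma)                      # exact arithmetic via Fraction


rho, sigma = [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]
print(tv_max_over_events(rho, sigma), tv_half_l1(rho, sigma))  # both approximately 0.3
print(deck_example(4))  # 3/4, i.e. 1 - 1/k with k = 4
```

Brute force over events is exponential in $m$, which is why the deck example uses the half-$\ell_1$ formula instead: its state space already has $k!$ elements.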

Couplings

Given a probability distribution $\rho$, it can be helpful to work with random variables drawn from $\rho$. Say a random variable $x$ is drawn from the distribution $\rho$, written $x \sim \rho$, if

\[\prob\{x = i\} = \rho_i \quad \text{for each } i = 1, 2, \ldots, m.\]

To understand the total variation distance more, we shall need the following definition:

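Definition (coupling). Given probability distributions $\rho$ and $\sigma$ on $\{1, 2, \ldots, m\}$, a coupling $\gamma$ is a distribution on $\{1, 2, \ldots, m\} \times \{1, 2, \ldots, m\}$ such that if a pair of random variables $(x, y)$ is drawn from $\gamma$, then $x \sim \rho$ and $y \sim \sigma$. Denote the set of all couplings of $\rho$ and $\sigma$ as $\operatorname{Couplings}(\rho, \sigma)$.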

More succinctly, a coupling of $\rho$ and $\sigma$ is a joint distribution with marginals $\rho$ and $\sigma$.

Couplings are related to total variation distance by the following lemma.¹

¹ A proof is provided in Lemma 4.2 of Oveis Gharan’s notes. The coupling lemma holds in the full generality of probability measures on general spaces, and can be viewed as a special case of the Monge–Kantorovich duality principle of optimal transport. See Theorem 4.13 and Example 4.14 in van Handel’s notes for details.

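Lemma (coupling lemma). Let $\rho$ and $\sigma$ be distributions on $\{1, 2, \ldots, m\}$. Then

\[\norm{\rho - \sigma}_{\rm TV} = \min_{\gamma \in \operatorname{Couplings}(\rho, \sigma)} \prob_{(x, y) \sim \gamma}\{x \ne y\}.\]

Here, $\prob_{(x, y) \sim \gamma}\{x \ne y\}$ represents the probability that $x \ne y$ for variables $(x, y)$ drawn from the joint distribution $\gamma$.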

To see a simple example, suppose $\rho = \sigma$. Then the coupling lemma tells us that there is a coupling $\gamma$ of $\rho$ and itself such that $\prob_{(x, y) \sim \gamma}\{x \ne y\} = 0$. Indeed, such a coupling can be obtained by drawing $x \sim \rho$ and setting $y = x$. This defines a joint distribution $\gamma$ under which $x = y$ with 100% probability.

To unpack the coupling lemma a little more, it really contains two statements:

- For any coupling $\gamma$ between $\rho$ and $\sigma$, we have $\norm{\rho - \sigma}_{\rm TV} \le \prob_{(x, y) \sim \gamma}\{x \ne y\}$.
- There exists a coupling $\gamma$ between $\rho$ and $\sigma$ such that, when $(x, y) \sim \gamma$, we have $\norm{\rho - \sigma}_{\rm TV} = \prob\{x \ne y\}$.

We will need both of these statements in our proof of the fundamental theorem.
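For finite state spaces, the second statement can be made concrete. Below is a sketch of one standard construction, often called a maximal coupling, written out here as an illustration rather than taken from the cited notes: put mass $\min(\rho_i, \sigma_i)$ on each diagonal pair $(i, i)$ and spread the leftover mass of $\rho$ and $\sigma$ over off-diagonal pairs. The code checks the marginals, checks that $\prob\{x \ne y\}$ equals the total variation distance, and contrasts this with the independent coupling, which illustrates the first statement. All function names are hypothetical.

```python
def tv(rho, sigma):
    """Total variation distance via the half-l1 identity."""
    return sum(abs(r - s) for r, s in zip(rho, sigma)) / 2


def maximal_coupling(rho, sigma):
    """Joint distribution gamma[i][j] with marginals rho, sigma and P{x != y} = TV(rho, sigma)."""
    m = len(rho)
    overlap = [min(r, s) for r, s in zip(rho, sigma)]
    d = 1 - sum(overlap)                                   # equals TV(rho, sigma)
    extra_rho = [r - o for r, o in zip(rho, overlap)]      # (rho_i - sigma_i)^+
    extra_sigma = [s - o for s, o in zip(sigma, overlap)]  # (sigma_j - rho_j)^+
    gamma = [[0.0] * m for _ in range(m)]
    for i in range(m):
        gamma[i][i] = overlap[i]                           # make x = y = i as likely as possible
        if d > 0:
            for j in range(m):
                # extra_rho[i] * extra_sigma[i] == 0, so this only adds mass where i != j
                gamma[i][j] += extra_rho[i] * extra_sigma[j] / d
    return gamma


def independent_coupling(rho, sigma):
    """Draw x ~ rho and y ~ sigma independently."""
    return [[r * s for s in sigma] for r in rho]


def marginals(gamma):
    """Row sums (law of x) and column sums (law of y)."""
    rows = [sum(row) for row in gamma]
    cols = [sum(col) for col in zip(*gamma)]
    return rows, cols


def prob_unequal(gamma):
    """P{x != y} for (x, y) ~ gamma."""
    return 1 - sum(gamma[i][i] for i in range(len(gamma)))


rho, sigma = [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]
print(tv(rho, sigma))                                  # approximately 0.3
print(prob_unequal(maximal_coupling(rho, sigma)))      # approximately 0.3: attains the TV distance
print(prob_unequal(independent_coupling(rho, sigma)))  # approximately 0.71: at least the TV distance
```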

Proof of the Fundamental Theorem

With these ingredients in place, we are now ready to prove the fundamental theorem of Markov chains. First, we will assume there exists a stationary distribution $\pi$. We will provide a proof of this fact at the end of this post.

Suppose we initialize the chain in distribution $\rho^{(0)}$, and let $\rho^{(0)}, \rho^{(1)}, \rho^{(2)}, \ldots$ denote the distributions of the chain at times $0, 1, 2, \ldots$. Our goal will be to establish that $\norm{\rho^{(n)} - \pi}_{\rm TV} \to 0$ as $n \to \infty$ at an exponential rate.

Distance to Stationarity is Non-Increasing

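First, let us establish the more modest claim that $\norm{\rho^{(n)} - \pi}_{\rm TV}$ is non-increasing:

\[\norm{\rho^{(n+1)} - \pi}_{\rm TV} \le \norm{\rho^{(n)} - \pi}_{\rm TV} \quad \text{for } n = 0, 1, 2, \ldots. \tag{1}\]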

Consider two versions of the chain, $x_0, x_1, x_2, \ldots$ and $y_0, y_1, y_2, \ldots$, one initialized in $x_0 \sim \rho^{(0)}$ and the other initialized in $y_0 \sim \pi$. We now apply the coupling lemma to the states $x_n \sim \rho^{(n)}$ and $y_n \sim \pi$ of the chains at time $n$. By the coupling lemma, there exists a coupling of $x_n$ and $y_n$ such that

\[\norm{\rho^{(n)} - \pi}_{\rm TV} = \prob\{x_n \ne y_n\}.\]

We extend this to a coupling of $x_{n+1}$ and $y_{n+1}$ using the following rules:

- If $x_n = y_n$, then draw $x_{n+1}$ according to the transition matrix and set $y_{n+1} = x_{n+1}$.
- If $x_n \ne y_n$, then run the two chains independently to generate $x_{n+1}$ and $y_{n+1}$.

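In either case, each chain on its own takes a step according to the transition matrix $P$, so $x_{n+1} \sim \rho^{(n+1)}$ and, since $\pi$ is stationary, $y_{n+1} \sim \pi$. Moreover, by the way we’ve designed the coupling, chains that agree at time $n$ still agree at time $n+1$, so

\[\prob\{x_{n+1} \ne y_{n+1}\} \le \prob\{x_n \ne y_n\}.\]

Combining this with both parts of the coupling lemma,

\[\norm{\rho^{(n+1)} - \pi}_{\rm TV} \le \prob\{x_{n+1} \ne y_{n+1}\} \le \prob\{x_n \ne y_n\} = \norm{\rho^{(n)} - \pi}_{\rm TV}.\]

We have established (1): the distance to stationarity is non-increasing.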
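Here is a quick numerical sanity check of (1), as a sketch on an example of my own choosing: the lazy random walk on a cycle of `m` states, which stays put with probability 1/2 and steps left or right with probability 1/4 each. This chain is a hypothetical illustration, not one from the post. It is doubly stochastic, so the uniform distribution is stationary, and it is primitive even though $P$ has zero entries. The code evolves $\rho^{(n+1)} = \rho^{(n)} P$ exactly and records the distance to stationarity at each step.

```python
def step(rho, P):
    """One step of the chain: the row vector rho^{(n)} times the transition matrix P."""
    m = len(rho)
    return [sum(rho[i] * P[i][j] for i in range(m)) for j in range(m)]


def tv(rho, sigma):
    """Total variation distance via the half-l1 identity."""
    return sum(abs(r - s) for r, s in zip(rho, sigma)) / 2


def lazy_cycle(m):
    """Hypothetical example chain: lazy random walk on a cycle with m >= 3 states."""
    P = [[0.0] * m for _ in range(m)]
    for i in range(m):
        P[i][i] += 0.5
        P[i][(i + 1) % m] += 0.25
        P[i][(i - 1) % m] += 0.25
    return P


def distances_to_stationarity(rho0, P, pi, steps):
    """Return [TV(rho^{(n)}, pi) for n = 0, ..., steps]."""
    dists, rho = [], list(rho0)
    for _ in range(steps + 1):
        dists.append(tv(rho, pi))
        rho = step(rho, P)
    return dists


m = 6
P = lazy_cycle(m)
pi = [1 / m] * m                      # uniform is stationary because P is doubly stochastic
d = distances_to_stationarity([1.0] + [0.0] * (m - 1), P, pi, steps=20)
print(all(d[n + 1] <= d[n] + 1e-12 for n in range(20)))  # True: the distance never increases
```

The small slack `1e-12` only absorbs floating-point rounding; it is not part of the mathematical statement.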

This proof already contains the essence of the argument as to why Markov chains mix. We run two versions of the Markov chain, one initialized in an arbitrary distribution $\rho^{(0)}$ and the other initialized in the stationary distribution $\pi$. While the states of the two chains are different, we run the chains independently. When the chains meet, we continue moving the chains together in synchrony. After running for long enough, the two chains are likely to meet, implying the chain has mixed.

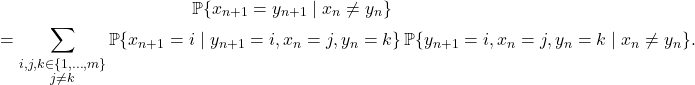

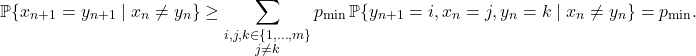
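This coupling is easy to simulate. The sketch below uses a hypothetical example chain (a lazy random walk on a cycle, not from the post) and the standard library's `random` module. It runs the two chains independently until they meet, after which they would move together, and estimates $\prob\{x_n \ne y_n\}$ by Monte Carlo. By the first part of the coupling lemma, this probability upper-bounds the exact distance $\norm{\rho^{(n)} - \pi}_{\rm TV}$, up to sampling error. Because $x_0$ is a fixed state here, the only coupling of $x_0$ and $y_0 \sim \pi$ is the independent one, and it attains the total variation distance at time $0$.

```python
import random


def lazy_cycle(m):
    """Hypothetical example chain: lazy random walk on a cycle with m >= 3 states."""
    P = [[0.0] * m for _ in range(m)]
    for i in range(m):
        P[i][i] += 0.5
        P[i][(i + 1) % m] += 0.25
        P[i][(i - 1) % m] += 0.25
    return P


def exact_tv(x0, P, pi, n):
    """Exact TV(rho^{(n)}, pi) when the chain starts at state x0."""
    m = len(P)
    rho = [1.0 if i == x0 else 0.0 for i in range(m)]
    for _ in range(n):
        rho = [sum(rho[i] * P[i][j] for i in range(m)) for j in range(m)]
    return sum(abs(r - p) for r, p in zip(rho, pi)) / 2


def chains_apart_at(n, x0, P, pi, rng):
    """Run the coupled chains up to time n; return True if x_n != y_n."""
    states = range(len(P))
    x = x0
    y = rng.choices(states, weights=pi)[0]         # y_0 ~ pi
    for _ in range(n):
        if x == y:
            return False                           # once met, the chains move in synchrony forever
        x = rng.choices(states, weights=P[x])[0]   # independent moves while apart
        y = rng.choices(states, weights=P[y])[0]
    return x != y


def estimate_apart(n, x0, P, pi, trials, seed=0):
    """Monte Carlo estimate of P{x_n != y_n}."""
    rng = random.Random(seed)
    return sum(chains_apart_at(n, x0, P, pi, rng) for _ in range(trials)) / trials


m = 6
P, pi = lazy_cycle(m), [1 / m] * m
for n in (0, 5, 10, 20):
    print(n, estimate_apart(n, 0, P, pi, trials=4000), exact_tv(0, P, pi, n))
```

Expect the estimate to sit at or above the exact distance (up to sampling error) and to shrink as `n` grows, since the chains keep meeting.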

The All-to-All Case

As another stepping stone to the complete proof, let us prove the fundamental theorem in the special case where there is a strictly positive probability of moving between any two states, i.e., assuming $P > 0$.

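Consider the two chains $x_0, x_1, x_2, \ldots$ and $y_0, y_1, y_2, \ldots$ coupled as in the previous section: we couple $x_0$ and $y_0$ using the coupling lemma at time $0$ and then apply the same two rules at every step. We compute the probability $\prob\{x_{n+1} \ne y_{n+1}\}$ more carefully. Since chains that have met stay together, we can write it as

\[\prob\{x_{n+1} \ne y_{n+1}\} = \prob\{x_{n+1} \ne y_{n+1} \mid x_n \ne y_n\} \cdot \prob\{x_n \ne y_n\}. \tag{2}\]

To compute $\prob\{x_{n+1} \ne y_{n+1} \mid x_n \ne y_n\}$, let $\delta = \min_{i,j} P_{ij} > 0$ be the smallest entry of $P$. If $x_n = i$ and $y_n = j$ with $i \ne j$, the two chains move independently, so

\[\prob\{x_{n+1} = y_{n+1} \mid x_n = i, y_n = j\} = \sum_{k=1}^m P_{ik} P_{jk} \ge \delta \sum_{k=1}^m P_{ik} = \delta.\]

Averaging over all such pairs $(i, j)$ gives $\prob\{x_{n+1} \ne y_{n+1} \mid x_n \ne y_n\} \le 1 - \delta$. Substituting back in (2), we obtain

\[\prob\{x_{n+1} \ne y_{n+1}\} \le (1 - \delta) \, \prob\{x_n \ne y_n\}.\]

Iterating this bound and using both parts of the coupling lemma,

\[\norm{\rho^{(n)} - \pi}_{\rm TV} \le \prob\{x_n \ne y_n\} \le (1 - \delta)^n \, \prob\{x_0 \ne y_0\} = (1 - \delta)^n \norm{\rho^{(0)} - \pi}_{\rm TV}.\]

Thus, when $P > 0$, the distance to stationarity decays exponentially.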
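Here is a numerical check of the bound $\norm{\rho^{(n)} - \pi}_{\rm TV} \le (1 - \delta)^n \norm{\rho^{(0)} - \pi}_{\rm TV}$, as a sketch. The positive $3 \times 3$ transition matrix is a hypothetical illustration, not from the post; its stationary distribution $\pi = (9/28, 3/7, 1/4)$ was obtained by solving $\pi P = \pi$ by hand. The check runs over every point-mass starting distribution, with $\delta = \min_{i,j} P_{ij} = 0.2$.

```python
# Hypothetical positive transition matrix (every entry > 0), chosen for illustration.
P = [[0.5, 0.3, 0.2],
     [0.2, 0.6, 0.2],
     [0.3, 0.3, 0.4]]
PI = [9 / 28, 3 / 7, 1 / 4]   # solves pi P = pi (computed by hand for this P)


def step(rho, P):
    """One step of the chain: row vector rho times P."""
    m = len(rho)
    return [sum(rho[i] * P[i][j] for i in range(m)) for j in range(m)]


def tv(rho, sigma):
    """Total variation distance via the half-l1 identity."""
    return sum(abs(r - s) for r, s in zip(rho, sigma)) / 2


def check_all_to_all_bound(P, pi, steps):
    """True if TV(rho^{(n)}, pi) <= (1 - delta)^n TV(rho^{(0)}, pi) for all point-mass starts."""
    m = len(P)
    delta = min(min(row) for row in P)     # smallest entry of P; positive because P > 0
    for start in range(m):
        rho = [1.0 if i == start else 0.0 for i in range(m)]
        d0 = tv(rho, pi)
        for n in range(steps + 1):
            if tv(rho, pi) > (1 - delta) ** n * d0 + 1e-12:   # slack for rounding only
                return False
            rho = step(rho, P)
    return True


print(check_all_to_all_bound(P, PI, steps=30))   # True
```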

The General Case

We’ve now proved the fundamental theorem in the special case when $P > 0$. Fortunately, together with our earlier observation that distance to stationarity is non-increasing, we can upgrade this proof into a proof for the general case.

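We have assumed the Markov chain $P$ is primitive, so there exists a time $t$ for which $P^t > 0$. Construct an auxiliary Markov chain $Q$ such that one step of the auxiliary chain consists of running $t$ steps of the original chain:

\[Q = P^t.\]

Since $Q > 0$ and $\pi$ is also stationary for $Q$ (as $\pi Q = \pi P^t = \pi$), the all-to-all case applied to $Q$ gives

\[\norm{\rho^{(kt)} - \pi}_{\rm TV} \le (1 - \delta)^k \norm{\rho^{(0)} - \pi}_{\rm TV} \quad \text{for } k = 0, 1, 2, \ldots,\]

where now $\delta = \min_{i,j} Q_{ij} > 0$. Finally, for any time $n$, set $k = \lfloor n/t \rfloor$, so that $kt \le n$. Since the distance to stationarity is non-increasing by (1),

\[\norm{\rho^{(n)} - \pi}_{\rm TV} \le \norm{\rho^{(kt)} - \pi}_{\rm TV} \le (1 - \delta)^{\lfloor n/t \rfloor} \norm{\rho^{(0)} - \pi}_{\rm TV}.\]

This proves the fundamental theorem, assuming a stationary distribution exists.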
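As a numerical sanity check of the general case, here is a sketch on a hypothetical example chain (a lazy random walk on a cycle of 6 states, not from the post), which has zero entries, so $P > 0$ fails. The code finds the smallest $t$ with $P^t > 0$, takes $\delta$ to be the smallest entry of $Q = P^t$, and checks $\norm{\rho^{(n)} - \pi}_{\rm TV} \le (1 - \delta)^{\lfloor n/t \rfloor} \norm{\rho^{(0)} - \pi}_{\rm TV}$. The search cap of $m^2$ relies on Wielandt's bound: a primitive $m \times m$ matrix satisfies $P^t > 0$ for some $t \le (m-1)^2 + 1$.

```python
def step(rho, P):
    """One step of the chain: row vector rho times P."""
    m = len(rho)
    return [sum(rho[i] * P[i][j] for i in range(m)) for j in range(m)]


def tv(rho, sigma):
    """Total variation distance via the half-l1 identity."""
    return sum(abs(r - s) for r, s in zip(rho, sigma)) / 2


def matmul(A, B):
    """Product of two square matrices stored as lists of rows."""
    m = len(A)
    return [[sum(A[i][l] * B[l][j] for l in range(m)) for j in range(m)] for i in range(m)]


def lazy_cycle(m):
    """Hypothetical example chain: lazy random walk on a cycle with m >= 3 states."""
    P = [[0.0] * m for _ in range(m)]
    for i in range(m):
        P[i][i] += 0.5
        P[i][(i + 1) % m] += 0.25
        P[i][(i - 1) % m] += 0.25
    return P


def primitivity_time(P):
    """Smallest t >= 1 with P^t > 0 entrywise, returned together with Q = P^t."""
    m = len(P)
    Q = P
    for t in range(1, m * m + 1):   # Wielandt: some t <= (m - 1)^2 + 1 works if P is primitive
        if all(q > 0 for row in Q for q in row):
            return t, Q
        Q = matmul(Q, P)
    raise ValueError("P is not primitive")


def check_general_bound(P, pi, steps):
    """True if TV(rho^{(n)}, pi) <= (1 - delta)^floor(n/t) TV(rho^{(0)}, pi) for point-mass starts."""
    m = len(P)
    t, Q = primitivity_time(P)
    delta = min(min(row) for row in Q)      # smallest entry of the auxiliary chain Q = P^t
    for start in range(m):
        rho = [1.0 if i == start else 0.0 for i in range(m)]
        d0 = tv(rho, pi)
        for n in range(steps + 1):
            if tv(rho, pi) > (1 - delta) ** (n // t) * d0 + 1e-12:
                return False
            rho = step(rho, P)
    return True


P = lazy_cycle(6)
print(primitivity_time(P)[0])                          # 3
print(check_general_bound(P, [1 / 6] * 6, steps=40))   # True
```

For this chain $t = 3$, because the farthest state is three steps away around the cycle, and the smallest entry of $P^3$ is $2 \cdot (1/4)^3 = 1/32$.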

Mixing Time

We’ve proven a quantitative version of the fundamental theorem of Markov chains, showing that the total variation distance to stationarity decreases exponentially as a function of time. For algorithmic applications of Markov chains, we also care about the precise rate of convergence, as it dictates how long we need to run the chain. To this end, we define the mixing time:

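Definition (mixing time). The mixing time $\tau_{\rm mix}$ of a Markov chain is the number of steps required for the distance to stationarity to be at most $1/4$ when started from a worst-case distribution:

\[\tau_{\rm mix} = \min\left\{ n \ge 0 : \norm{\rho^{(n)} - \pi}_{\rm TV} \le \tfrac{1}{4} \text{ for every starting distribution } \rho^{(0)} \right\}.\]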
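For a small chain, $\tau_{\rm mix}$ can be computed by brute force. Because $\norm{\rho^{(0)} P^n - \pi}_{\rm TV}$ is a convex function of $\rho^{(0)}$, its maximum over starting distributions is attained at a point mass, so it suffices to track the $m$ chains started from each single state. The sketch below does this; the function names and the lazy-cycle example chain are hypothetical illustrations, and the threshold $1/4$ is exposed as a parameter.

```python
def step(rho, P):
    """One step of the chain: row vector rho times P."""
    m = len(rho)
    return [sum(rho[i] * P[i][j] for i in range(m)) for j in range(m)]


def tv(rho, sigma):
    """Total variation distance via the half-l1 identity."""
    return sum(abs(r - s) for r, s in zip(rho, sigma)) / 2


def lazy_cycle(m):
    """Hypothetical example chain: lazy random walk on a cycle with m >= 3 states."""
    P = [[0.0] * m for _ in range(m)]
    for i in range(m):
        P[i][i] += 0.5
        P[i][(i + 1) % m] += 0.25
        P[i][(i - 1) % m] += 0.25
    return P


def worst_case_distance(P, pi, n):
    """Max over starting states s of TV(rho^{(n)}, pi), where rho^{(0)} is the point mass at s."""
    m = len(P)
    worst = 0.0
    for s in range(m):
        rho = [1.0 if i == s else 0.0 for i in range(m)]
        for _ in range(n):
            rho = step(rho, P)
        worst = max(worst, tv(rho, pi))
    return worst


def mixing_time(P, pi, threshold=0.25, max_steps=10_000):
    """Smallest n whose worst-case distance to stationarity is at most `threshold`."""
    m = len(P)
    rhos = [[1.0 if i == s else 0.0 for i in range(m)] for s in range(m)]  # one chain per start
    for n in range(max_steps + 1):
        if max(tv(rho, pi) for rho in rhos) <= threshold:
            return n
        rhos = [step(rho, P) for rho in rhos]
    raise ValueError("chain did not reach the threshold within max_steps")


P, pi = lazy_cycle(6), [1 / 6] * 6
print(mixing_time(P, pi))   # 4
```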

The mixing time controls the rate of convergence for a Markov chain:

Theorem (mixing time as a convergence rate). For any starting distribution,

\[\norm{\rho^{(n)} - \pi}_{\rm TV} \le 2^{-\lfloor n/\tau_{\rm mix} \rfloor}.\]

In particular, for $\rho^{(n)}$ to be within $\varepsilon$ total variation distance of $\pi$, we only need to run the chain for $O(\tau_{\rm mix} \log(1/\varepsilon))$ steps:

Corollary (time to mix to $\varepsilon$-stationarity). If $n \ge \tau_{\rm mix} \lceil \log_2(1/\varepsilon) \rceil$, then $\norm{\rho^{(n)} - \pi}_{\rm TV} \le \varepsilon$.

These results can be proven using very similar techniques to the proof of the fundamental theorem from above. See Sinclair’s notes for more details.
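On a small example, the theorem and corollary can be checked numerically. The sketch below, built on a hypothetical lazy-cycle example chain, computes the worst-case distance $d(n)$ to stationarity over point-mass starts, reads off $\tau_{\rm mix}$ with threshold $1/4$, and confirms $d(n) \le 2^{-\lfloor n/\tau_{\rm mix} \rfloor}$ as well as $d(n) \le \varepsilon$ at $n = \tau_{\rm mix} \lceil \log_2(1/\varepsilon) \rceil$ for a few values of $\varepsilon$. This is a check on one example, not a proof.

```python
import math


def step(rho, P):
    """One step of the chain: row vector rho times P."""
    m = len(rho)
    return [sum(rho[i] * P[i][j] for i in range(m)) for j in range(m)]


def tv(rho, sigma):
    """Total variation distance via the half-l1 identity."""
    return sum(abs(r - s) for r, s in zip(rho, sigma)) / 2


def lazy_cycle(m):
    """Hypothetical example chain: lazy random walk on a cycle with m >= 3 states."""
    P = [[0.0] * m for _ in range(m)]
    for i in range(m):
        P[i][i] += 0.5
        P[i][(i + 1) % m] += 0.25
        P[i][(i - 1) % m] += 0.25
    return P


def worst_case_distances(P, pi, steps):
    """d[n] = max over starting states of TV(rho^{(n)}, pi), for n = 0, ..., steps."""
    m = len(P)
    rhos = [[1.0 if i == s else 0.0 for i in range(m)] for s in range(m)]
    d = []
    for _ in range(steps + 1):
        d.append(max(tv(rho, pi) for rho in rhos))
        rhos = [step(rho, P) for rho in rhos]
    return d


P, pi = lazy_cycle(6), [1 / 6] * 6
d = worst_case_distances(P, pi, steps=200)
tau = next(n for n, dn in enumerate(d) if dn <= 0.25)   # mixing time with threshold 1/4

# Theorem: d(n) <= 2^{-floor(n / tau)} for every n (tiny slack for rounding).
theorem_holds = all(d[n] <= 2.0 ** -(n // tau) + 1e-12 for n in range(len(d)))
# Corollary: n = tau * ceil(log2(1/eps)) steps bring the distance down to at most eps.
corollary_holds = all(d[tau * math.ceil(math.log2(1 / eps))] <= eps for eps in (0.1, 0.01, 0.001))
print(tau, theorem_holds, corollary_holds)   # 4 True True
```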

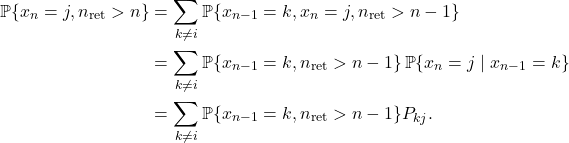

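Existence of a Stationary Distribution

It remains to prove that a primitive Markov chain has a stationary distribution. Fix a state $i$, start the chain at $x_0 = i$, and let $n_{\rm ret} = \min\{n \ge 1 : x_n = i\}$ be the first time the chain returns to $i$. For each state $j$, let

\[a_j = \sum_{n=1}^\infty \prob\{x_n = j, n_{\rm ret} \ge n\}\]

be the expected number of visits to $j$ at times $1, 2, \ldots, n_{\rm ret}$. The $n = 1$ term is $P_{ij}$. For $n \ge 2$, the event $n_{\rm ret} \ge n$ means the chain avoids $i$ at times $1, \ldots, n-1$, so conditioning on the state $x_{n-1} = k \ne i$ gives

\[a_j = P_{ij} + \sum_{n=2}^\infty \sum_{k\ne i} \prob\{x_{n-1} = k, n_{\rm ret} > n-1 \} P_{kj}.\]

Shifting the index $n - 1 \to n$ and exchanging the order of summation,

\[a_j = P_{ij} + \sum_{k\ne i} \left(\sum_{n=1}^\infty \prob\{x_n = k, n_{\rm ret} > n \}\right) P_{kj}.\]

For $k \ne i$, the events $\{x_n = k, n_{\rm ret} > n\}$ and $\{x_n = k, n_{\rm ret} \ge n\}$ coincide, so the parenthesized sum is $a_k$. Moreover, $a_i = \sum_{n=1}^\infty \prob\{n_{\rm ret} = n\} = 1$, since a primitive chain on a finite state space returns to $i$ with probability one. Therefore,

\[a_j = a_iP_{ij} + \sum_{k\ne i} a_k P_{kj} = \sum_{k=1}^m a_k P_{kj},\]

so the row vector $a = (a_1, \ldots, a_m)$ satisfies $aP = a$. Since $\sum_{j=1}^m a_j = \mathbb{E}[n_{\rm ret}]$ is finite for a primitive chain on a finite state space, $\pi = a / \sum_{j=1}^m a_j$ is a stationary distribution.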
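The construction in this proof can also be carried out numerically. As a sketch, the code below uses a hand-picked positive 3-state chain (a hypothetical illustration, not from the post) and computes $a_j$ by summing the series term by term. The vector $v^{(n)}_j = \prob\{x_n = j, n_{\rm ret} \ge n\}$ starts at row $i$ of $P$, and each further term zeroes out state $i$ (those paths have already returned) before taking another step. Truncating the series after a fixed number of terms is an assumption of the sketch; it is harmless here because the terms decay geometrically. The code then checks $a_i = 1$ and $aP = a$, and normalizes to get $\pi$.

```python
# Hypothetical positive transition matrix, chosen for illustration.
P = [[0.5, 0.3, 0.2],
     [0.2, 0.6, 0.2],
     [0.3, 0.3, 0.4]]


def step(rho, P):
    """Row vector rho times P."""
    m = len(rho)
    return [sum(rho[k] * P[k][j] for k in range(m)) for j in range(m)]


def return_time_vector(P, i, terms=500):
    """a_j = sum_{n>=1} P{x_n = j, n_ret >= n} for the chain started at x_0 = i (truncated series)."""
    v = list(P[i])                      # n = 1 term: P{x_1 = j} = P_ij
    a = list(v)
    for _ in range(terms - 1):
        # Keep only paths that have not yet returned to i, then take one more step.
        killed = [0.0 if k == i else v[k] for k in range(len(v))]
        v = step(killed, P)             # now v_j = P{x_n = j, n_ret >= n}
        a = [aj + vj for aj, vj in zip(a, v)]
    return a


a = return_time_vector(P, i=0)
total = sum(a)                          # equals E[n_ret], up to truncation error
pi = [aj / total for aj in a]
print(a[0], total, pi)
```

As a side check, `total` comes out close to $28/9$, the reciprocal of the first entry of $\pi = (9/28, 3/7, 1/4)$. This matches the standard fact (Kac's formula) that the expected return time to a state is one over its stationary probability.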
