Let’s start our discussion of low-rank matrices with an application. Suppose that there are 1000 weather stations spread across the world, and we record the temperature during each of the 365 days in a year. [Footnote 1: I borrow the idea for the weather example from Candes and Plan.] If we were to store each of the temperature measurements individually, we would need to store 365,000 numbers. However, we have reasons to believe that significant compression is possible. Temperatures are correlated across space and time: If it’s hot in Arizona today, it’s likely it was warm in Utah yesterday.

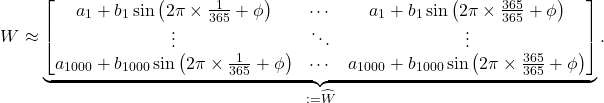

If we are particularly bold, we might conjecture that the weather approximately experiences a sinusoidal variation over the course of the year:

(1) $(\text{temperature at station } i \text{ on day } j) \approx a_i + b_i \sin\left( \frac{2\pi j}{365} + \phi \right)$

For a station $i$, $a_i$ denotes the average temperature of the station and $b_i$ denotes the maximum deviation above or below this average, signed so that it is warmer than average in the Northern hemisphere during June-August and colder than average in the Southern hemisphere during these months. The phase shift $\phi$ is chosen so the hottest (or coldest) day in the year occurs at the appropriate time. This model is clearly grossly inexact: The weather does not satisfy a simple sinusoidal model. However, we might plausibly expect it to be fairly informative. Further, we have massively compressed our data, only needing to store the $2 \times 1000 + 1 = 2001$ numbers $a_1,\ldots,a_{1000},b_1,\ldots,b_{1000},\phi$ rather than our full data set of 365,000 temperature values.

Let us abstract this approximation procedure in a linear algebraic way. Let’s collect our weather data into a matrix $B$ with 1000 rows, one for each station, and 365 columns, one for each day of the year. The entry $B_{ij}$ corresponding to station $i$ and day $j$ is the temperature at station $i$ on day $j$. The approximation Eq. (1) corresponds to the matrix approximation

(2) $B \approx \begin{bmatrix} a_1 + b_1 \sin\left( \frac{2\pi \cdot 1}{365} + \phi \right) & \cdots & a_1 + b_1 \sin\left( \frac{2\pi \cdot 365}{365} + \phi \right) \\ \vdots & \ddots & \vdots \\ a_{1000} + b_{1000} \sin\left( \frac{2\pi \cdot 1}{365} + \phi \right) & \cdots & a_{1000} + b_{1000} \sin\left( \frac{2\pi \cdot 365}{365} + \phi \right) \end{bmatrix}$

Let us call the matrix on the right-hand side of Eq. (2) $\hat{B}$ for ease of discussion. When presented in this linear algebraic form, it’s less obvious in what way $\hat{B}$ is simpler than $B$, but we know from Eq. (1) and our previous discussion that $\hat{B}$ is much more efficient to store than $B$. This leads us naturally to the following question: Linear algebraically, in what way is $\hat{B}$ simpler than $B$?

The answer is that the matrix $\hat{B}$ has low rank. The rank of the matrix $\hat{B}$ is $2$ whereas $B$ almost certainly possesses the maximum possible rank of $365$. This example suggests that low-rank approximation, where we approximate a general matrix by one of much lower rank, could be a powerful tool. But there are many questions about how to use this tool and how widely applicable it is. How can we compress a low-rank matrix? Can we use this compressed matrix in computations? How good a low-rank approximation can we find? What even is the rank of a matrix?
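To make the rank claim concrete, here is a minimal NumPy sketch. It assumes the sinusoidal model above with made-up parameter values (the arrays `a`, `b` and the value of `phi` are synthetic stand-ins, not real weather data). The model matrix is a sum of two outer products, one built from the averages and one from the seasonal amplitudes, which is why its numerical rank comes out as 2.

```python
import numpy as np

# Synthetic stand-ins for the model parameters (hypothetical, not real weather data).
rng = np.random.default_rng(0)
n_stations, n_days = 1000, 365
a = rng.uniform(-10.0, 30.0, size=n_stations)  # average temperature of each station
b = rng.uniform(-15.0, 15.0, size=n_stations)  # signed seasonal amplitude of each station
phi = 0.3                                      # phase shift shared by all stations

days = np.arange(1, n_days + 1)
seasonal = np.sin(2 * np.pi * days / n_days + phi)

# B_hat[i, j] = a[i] + b[i] * sin(2*pi*j/365 + phi),
# which is the sum of two outer products: a * ones^T + b * seasonal^T.
B_hat = np.outer(a, np.ones(n_days)) + np.outer(b, seasonal)

rank_B_hat = np.linalg.matrix_rank(B_hat)  # numerical rank, computed from singular values
n_model_params = 2 * n_stations + 1        # all a_i, all b_i, and phi
print(rank_B_hat, n_model_params, B_hat.size)
```

Note that `np.linalg.matrix_rank` reports a numerical rank based on a tolerance, so tiny rounding errors in the entries are ignored.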

What is Rank?

Let’s do a quick review of the foundations of linear algebra. At the core of linear algebra is the notion of a linear combination. A linear combination of vectors $v_1,\ldots,v_k$ is a weighted sum of the form $\alpha_1 v_1 + \cdots + \alpha_k v_k$, where $\alpha_1,\ldots,\alpha_k$ are scalars. [Footnote 2: In our case, matrices will be comprised of real numbers, making scalars real numbers as well.] A collection of vectors $v_1,\ldots,v_k$ is linearly independent if there is no linear combination of them which produces the zero vector, except for the trivial $0$-weighted linear combination $0 v_1 + \cdots + 0 v_k$. If $v_1,\ldots,v_k$ are not linearly independent, then they’re linearly dependent.

The column rank of a matrix $B$ is the size of the largest possible subset of $B$’s columns which are linearly independent. So if the column rank of $B$ is $r$, then there is some sub-collection of $r$ columns of $B$ which are linearly independent. There may be some different sub-collections of $r$ columns from $B$ that are linearly dependent, but every collection of $r+1$ columns is guaranteed to be linearly dependent. Similarly, the row rank is defined to be the maximum size of any linearly independent collection of rows taken from $B$. A remarkable and surprising fact is that the column rank and row rank are equal. Because of this, we refer to the column rank and row rank simply as the rank; we denote the rank of a matrix $B$ by $\operatorname{rank} B$.

Linear algebra is famous for its multiple equivalent ways of phrasing the same underlying concept, so let’s mention one more way of thinking about the rank. Define the column space of a matrix to consist of the set of all linear combinations of its columns. A basis for the column space is a linearly independent collection of elements of the column space of the largest possible size. Every element of the column space can be written uniquely as a linear combination of the elements in a basis. The size of a basis for the column space is called the dimension of the column space. With these last definitions in place, we note that the rank of $B$ is also equal to the dimension of the column space of $B$. Likewise, if we define the row space of $B$ to consist of all linear combinations of $B$’s rows, then the rank of $B$ is equal to the dimension of $B$’s row space.
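A quick numerical check of these definitions may help. The sketch below builds a small matrix whose columns are all combinations of two fixed vectors (the sizes and random seed are arbitrary choices for illustration), then confirms that the column rank and row rank agree and that any three of its columns are linearly dependent.

```python
import numpy as np

rng = np.random.default_rng(1)

# A 6 x 5 matrix whose columns are linear combinations of 2 independent vectors.
basis = rng.standard_normal((6, 2))
coeffs = rng.standard_normal((2, 5))
B = basis @ coeffs

col_rank = np.linalg.matrix_rank(B)    # dimension of the column space
row_rank = np.linalg.matrix_rank(B.T)  # dimension of the row space

# Any 3 columns must be dependent, so a 3-column submatrix still has rank 2.
rank_of_three_columns = np.linalg.matrix_rank(B[:, :3])
print(col_rank, row_rank, rank_of_three_columns)
```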

The upshot is that if a matrix $B$ has a small rank, its many columns (or rows) can be assembled as linear combinations from a much smaller collection of columns (or rows). It is this fact that allows a low-rank matrix to be compressed for algorithmically useful ends.

Rank Factorizations

Suppose we have an $m\times n$ matrix $B$ which is of rank $r$ much smaller than both $m$ and $n$. As we saw in the introduction, we expect that such a matrix can be compressed to be stored with many fewer than $mn$ entries. How can this be done?

Let’s work backwards and start with the answer to this question and then see why it works. Here’s a fact: a matrix $B$ of rank $r$ can be factored as $B = LR^\top$, where $L$ is an $m\times r$ matrix and $R^\top$ is an $r\times n$ matrix. In other words, $B$ can be factored as a “thin” matrix $L$ with $r$ columns times a “fat” matrix $R^\top$ with $r$ rows. We use the symbols $L$ and $R$ for these factors to stand for “left” and “right”; we emphasize that $L$ and $R$ are general $m\times r$ and $n\times r$ matrices, not necessarily possessing any additional structure. [Footnote 3: Readers familiar with numerical linear algebra may instinctively want to assume that $L$ and $R$ are lower and upper triangular; we do not make this assumption.] The fact that we write the second term in this factorization as a transposed matrix “$R^\top$” is unimportant: We adopt a convention where we write a fat matrix as the transpose of a thin matrix. This notational choice is convenient, as it allows us to easily distinguish between thin and fat matrices in formulas, but it is far from universal. We call a factorization such as $B = LR^\top$ a rank factorization. [Footnote 4: Other terms, such as full rank factorization or rank-revealing factorization, have been used to describe the same concept. A warning is that the term “rank-revealing factorization” can also refer to a factorization which encodes a good low-rank approximation to $B$ rather than a genuine factorization of $B$.]

Rank factorizations are useful as we can compactly store $B$ by storing its factors $L$ and $R$. This reduces the storage requirements of $B$ to $(m+n)r$ numbers down from $mn$ numbers. For example, if we store a rank factorization of the low-rank approximation $\hat{B}$ from our weather example, we need only store 2,730 numbers rather than 365,000. In addition to compressing $B$, we shall soon see that one can rapidly perform many calculations from the rank factorization $B = LR^\top$ without ever forming $B$ itself. For these reasons, whenever performing computations with a low-rank matrix, your first step should almost always be to express it using a rank factorization. From there, most computations can be done faster and using less storage.
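The storage arithmetic is easy to check directly. The helper names below are hypothetical and exist only for this illustration; they count stored numbers for a dense matrix and for a pair of rank-factorization factors, using the weather example's dimensions and a rank of 2.

```python
def dense_storage(m: int, n: int) -> int:
    """Numbers stored when keeping every entry of an m x n matrix."""
    return m * n


def factored_storage(m: int, n: int, r: int) -> int:
    """Numbers stored when keeping an m x r factor and an n x r factor."""
    return (m + n) * r


# Weather example: 1000 stations, 365 days, rank-2 approximation.
m, n, r = 1000, 365, 2
dense_count = dense_storage(m, n)
factored_count = factored_storage(m, n, r)
compression_ratio = dense_count / factored_count
print(dense_count, factored_count, round(compression_ratio, 1))
```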

Having hopefully convinced ourselves of the usefulness of rank factorizations, let us now convince ourselves that every rank-$r$ matrix $B$ does indeed possess a rank factorization $B = LR^\top$ where $L$ and $R$ have $r$ columns. As we recalled in the previous section, since $B$ has rank $r$, there is a basis of $B$’s column space consisting of $r$ vectors $\ell_1,\ldots,\ell_r$. Collect these $r$ vectors as columns of an $m\times r$ matrix $L = \begin{bmatrix} \ell_1 & \cdots & \ell_r \end{bmatrix}$. But since the columns of $L$ comprise a basis of the column space of $B$, every column of $B$ can be written as a linear combination of the columns of $L$. For example, the $j$th column $b_j$ of $B$ can be written as a linear combination $b_j = R_{j1}\ell_1 + \cdots + R_{jr}\ell_r$, where we suggestively use the labels $R_{j1},\ldots,R_{jr}$ for the scalar multiples in our linear combination. Collecting these coefficients into a matrix $R$ with $(j,k)$th entry $R_{jk}$, we have constructed a factorization $B = LR^\top$. (Check this!)
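The construction in this argument can be sketched numerically. This is only an illustration under stated assumptions: the test matrix is random, the column basis is chosen by a simple greedy scan that is not how one would select columns in serious numerical work, and the coefficients are recovered with a least-squares solve, which is exact here because every column of the matrix lies in the span of the chosen basis.

```python
import numpy as np

rng = np.random.default_rng(2)
m, n = 8, 6
B = rng.standard_normal((m, 3)) @ rng.standard_normal((3, n))  # a rank-3 test matrix
r = np.linalg.matrix_rank(B)

# Greedily pick r linearly independent columns of B to serve as the column basis L.
selected = []
for j in range(n):
    candidate = selected + [j]
    if np.linalg.matrix_rank(B[:, candidate]) == len(candidate):
        selected = candidate
    if len(selected) == r:
        break
L = B[:, selected]

# Solve L @ R.T = B for the coefficient matrix R.T (shape r x n).
Rt, *_ = np.linalg.lstsq(L, B, rcond=None)
R = Rt.T

reconstruction_error = np.linalg.norm(B - L @ R.T)
print(L.shape, R.shape, reconstruction_error)
```

Row $j$ of `R` holds the coefficients that assemble column $j$ of `B` from the columns of `L`, matching the labels used in the text.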

This construction gives us a look at what a rank factorization is doing. The columns of $L$ comprise a basis for the column space of $B$ and the rows of $R^\top$ comprise a basis for the row space of $B$. Once we fix a “column basis” $L$, the “row basis” $R^\top$ is comprised of linear combination coefficients telling us how to assemble the columns of $B$ as linear combinations of the columns in $L$. [Footnote 5: It is worth noting here that a slightly more expansive definition of rank factorization has also proved useful. In the more general definition, a rank factorization is a factorization of the form $B = LMR^\top$ where $L$ is $m\times r$, $M$ is $r\times r$, and $R^\top$ is $r\times n$. With this definition, we can pick an arbitrary column basis $L$ and row basis $R^\top$. Then, there exists a unique nonsingular “middle” matrix $M$ such that $B = LMR^\top$.] Note that this means there exist many different rank factorizations of a matrix since one may pick different column bases $L$ for $B$. [Footnote 6: This non-uniqueness means one should take care to compute a rank factorization which is as “nice” as possible (say, by making sure $L$ and $R$ are as well-conditioned as is possible). If one modifies a rank factorization during the course of an algorithm, one should take care to make sure that the rank factorization remains nice. (As an example of what can go wrong, “unbalancing” between the left and right factors in a rank factorization can lead to convergence problems for optimization problems.)]

Now that we’ve convinced ourselves that every matrix indeed has a rank factorization, how do we compute them in practice? In fact, pretty much any matrix factorization will work. If you can think of a matrix factorization you’re familiar with (e.g., LU, QR, eigenvalue decomposition, singular value decomposition,…), you can almost certainly use it to compute a rank factorization. In addition, many dedicated methods have been developed specifically for computing rank factorizations, and their appealing properties make them great for certain applications.

Let’s focus on one particular example of how a classic matrix factorization, the singular value decomposition, can be used to get a rank factorization. Recall that the singular value decomposition (SVD) of a (real) matrix $B$ is a factorization $B = U\Sigma V^\top$ where $U$ and $V$ are $m\times m$ and $n\times n$ (real) orthogonal matrices and $\Sigma$ is a (possibly rectangular) diagonal matrix with nonnegative, descending diagonal entries $\sigma_1 \ge \sigma_2 \ge \cdots \ge \sigma_{\min(m,n)}$. These diagonal entries are referred to as the singular values of the matrix $B$. From the definition of rank, we can see that the rank of a matrix $B$ is equal to its number of nonzero singular values. With this observation in hand, a rank factorization of $B$ can be obtained by letting $L$ be the first $r$ columns of $U$ and $R^\top$ be the first $r$ rows of $\Sigma V^\top$ (note that the remaining rows of $\Sigma V^\top$ are zero).
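Here is a minimal sketch of this recipe in NumPy. The function name and the relative tolerance `tol` are assumptions made for illustration: in floating-point arithmetic singular values are rarely exactly zero, so the sketch counts singular values above a small threshold relative to the largest one.

```python
import numpy as np


def rank_factorization_from_svd(B, tol=1e-10):
    """Return (L, R) with B ~= L @ R.T, where r is the numerical rank of B.

    `tol` is an illustrative relative threshold for deciding which
    singular values count as nonzero.
    """
    U, s, Vt = np.linalg.svd(B, full_matrices=False)
    if s.size == 0 or s[0] == 0:
        r = 0
    else:
        r = int(np.sum(s > tol * s[0]))
    L = U[:, :r]                        # first r columns of U
    R = (s[:r, None] * Vt[:r, :]).T     # transpose of the first r rows of Sigma @ V^T
    return L, R


rng = np.random.default_rng(3)
B = rng.standard_normal((7, 2)) @ rng.standard_normal((2, 5))  # rank-2 test matrix
L, R = rank_factorization_from_svd(B)
print(L.shape, R.shape, np.linalg.norm(B - L @ R.T))
```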

Computing with Rank Factorizations

Now that we have a rank factorization in hand, what is it good for? A lot, in fact. We’ve already seen that one can store a low-rank matrix expressed as a rank factorization using only $(m+n)r$ numbers, down from the $mn$ numbers needed to store all of its entries. Similarly, if we want to compute the matrix-vector product $Bx$ for a vector $x$ of length $n$, we can compute this product as $Bx = L(R^\top x)$. This reduces the operation count down from $\mathcal{O}(mn)$ operations to $\mathcal{O}((m+n)r)$ operations using the rank factorization. As a general rule of thumb, when we have something expressed as a rank factorization, we can usually expect to reduce our operation count (and storage costs) from something proportional to $mn$ (or worse) down to something proportional to $m+n$.
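The matrix-vector product is the simplest place to see the savings. In the sketch below (with arbitrary sizes and random factors chosen for illustration), the parenthesization does all the work: applying the fat factor first yields a short vector of length $r$, and the full matrix is never formed on the fast path.

```python
import numpy as np

rng = np.random.default_rng(4)
m, n, r = 2000, 1500, 5
L = rng.standard_normal((m, r))
R = rng.standard_normal((n, r))
x = rng.standard_normal(n)

# Fast path: R.T @ x costs about n*r multiply-adds, then L @ (...) about m*r.
y_fast = L @ (R.T @ x)

# Slow path, for checking only: forms the full m x n matrix first.
y_slow = (L @ R.T) @ x

max_difference = np.max(np.abs(y_fast - y_slow))
print(y_fast.shape, max_difference)
```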

Let’s try something more complicated. Say we want to compute an SVD $B = U\Sigma V^\top$ of $B$. In the previous section, we computed a rank factorization of $B$ using an SVD, but suppose now we computed $B = LR^\top$ in some other way. Our goal is to “upgrade” the general rank factorization $B = LR^\top$ into an SVD of $B$. Computing the SVD of a general matrix $B$ requires $\mathcal{O}(mn\min(m,n))$ operations (expressed in big O notation). Can we do better? Unfortunately, there’s a big roadblock for us: We need $m^2 + n^2$ operations even to write down the matrices $U$ and $V$, which already prevents us from achieving an operation count proportional to $m+n$ like we’re hoping for. Fortunately, in most applications, only the first $r$ columns of $U$ and $V$ are important. Thus, we can change our goal to compute a so-called economy SVD of $B$, which is a factorization $B = U\Sigma V^\top$, where, reusing notation, $U$ and $V$ are now $m\times r$ and $n\times r$ matrices with orthonormal columns and $\Sigma$ is an $r\times r$ diagonal matrix listing the nonzero singular values of $B$ in decreasing order.

Let’s see how to upgrade a rank factorization $B = LR^\top$ into an economy SVD $B = U\Sigma V^\top$. Let’s break our procedure into steps:

- Compute (economy) QR factorizations of $L$ and $R$: $L = Q_1T_1$ and $R = Q_2T_2$. Reader beware: We call the “$R$” factors in the QR factorizations of $L$ and $R$ $T_1$ and $T_2$, as we have already used the letter $R$ to denote the second factor in our rank factorization. [Footnote 7: The economy QR factorization of an $m\times r$ thin matrix $A$ is a factorization $A = QT$ where $Q$ is an $m\times r$ matrix with orthonormal columns and $T$ is an $r\times r$ upper triangular matrix. The economy QR factorization is sometimes also called a thin or compact QR factorization, and can be computed in $\mathcal{O}(mr^2)$ operations.]
- Compute the small matrix $S = T_1T_2^\top$.
- Compute an SVD of $S = \tilde{U}\Sigma\tilde{V}^\top$.
- Set $U = Q_1\tilde{U}$ and $V = Q_2\tilde{V}$.
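The four steps above can be sketched in a few lines of NumPy. The function name is hypothetical, and the sketch assumes `L` and `R` are thin matrices with the same number of columns; `np.linalg.qr` returns the economy ("reduced") factorization by default.

```python
import numpy as np


def economy_svd_from_rank_factorization(L, R):
    """Given B = L @ R.T, return (U, sigma, V) with B = U @ diag(sigma) @ V.T."""
    Q1, T1 = np.linalg.qr(L)               # step 1: economy QR of L
    Q2, T2 = np.linalg.qr(R)               #         and of R
    S = T1 @ T2.T                          # step 2: small r x r matrix
    U_small, sigma, Vt_small = np.linalg.svd(S)  # step 3: SVD of the small matrix
    U = Q1 @ U_small                       # step 4: lift back to m x r
    V = Q2 @ Vt_small.T                    #         and n x r
    return U, sigma, V


rng = np.random.default_rng(5)
m, n, r = 300, 200, 4
L = rng.standard_normal((m, r))
R = rng.standard_normal((n, r))
U, sigma, V = economy_svd_from_rank_factorization(L, R)

# For checking only: form B and compare against a direct SVD.
B = L @ R.T
sigma_direct = np.linalg.svd(B, compute_uv=False)[:r]
print(np.linalg.norm(B - U @ np.diag(sigma) @ V.T), np.max(np.abs(sigma - sigma_direct)))
```

Only the final check forms the full matrix; the function itself touches nothing larger than an $m\times r$ or $n\times r$ array.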

By following the procedure line-by-line, one can check that indeed the matrices $U$ and $V$ have orthonormal columns and $B = U\Sigma V^\top$, so this procedure indeed computes an economy SVD of $B$. Let’s see why this approach is also faster. Let’s count operations line-by-line:

- Economy QR factorizations of an $m\times r$ and an $n\times r$ matrix require $\mathcal{O}(mr^2)$ and $\mathcal{O}(nr^2)$ operations.
- The product of two $r\times r$ matrices requires $\mathcal{O}(r^3)$ operations.
- The SVD of an $r\times r$ matrix requires $\mathcal{O}(r^3)$ operations.
- The products of an $m\times r$ and an $n\times r$ matrix by $r\times r$ matrices require $\mathcal{O}(mr^2)$ and $\mathcal{O}(nr^2)$ operations.

Accounting for all the operations, we see the operation count is $\mathcal{O}((m+n)r^2)$, a significant improvement over the $\mathcal{O}(mn\min(m,n))$ operations for a general matrix. [Footnote 8: We can ignore the term of order $r^3$ since $r \le m,n$ so $r^3$ is $\mathcal{O}((m+n)r^2)$.]

As the previous examples show, many (if not most) things we want to compute from a low-rank matrix $B$ can be dramatically more efficiently computed using its rank factorization. The strategy is simple in principle, but can be subtle to execute: Whatever you do, avoid explicitly computing the product $LR^\top$ at all costs. Instead, compute with the matrices $L$ and $R$ directly, only operating on $m\times r$, $n\times r$, and $r\times r$ matrices.

Another important type of computation one can perform with low-rank matrices is the low-rank update, where we have already solved a problem for a matrix $A$ and we want to re-solve it efficiently with the matrix $A + B$ where $B$ has low rank. If $B$ is expressed in a rank factorization, very often we can do this efficiently as well, as we discuss in the following bonus section. As this is somewhat more niche, the uninterested reader should feel free to skip this and continue to the next section.

Low-rank Approximation

As we’ve seen, computing with low-rank matrices expressed as rank factorizations can yield significant computational savings. Unfortunately, many matrices in application are not low-rank. In fact, even if a matrix in an application is low-rank, the small rounding errors we incur in storing it on a computer may destroy the matrix’s low rank, increasing its rank to the maximum possible value of $\min(m,n)$. The solution in this case is straightforward: approximate our high-rank matrix with a low-rank one, which we express in algorithmically useful form as a rank factorization.

Here’s one simple way of constructing low-rank approximations. Start with a matrix $B$ and compute a singular value decomposition of $B$, $B = U\Sigma V^\top$. Recall from two sections previous that the rank of the matrix $B$ is equal to its number of nonzero singular values. But what if $B$’s singular values aren’t exactly zero, but they’re very small? It seems reasonable to expect that $B$ is nearly low-rank in this case. Indeed, this intuition is true. To approximate $B$ by a low-rank matrix, we can truncate $B$’s singular value decomposition by setting $B$’s small singular values to zero. If we zero out all but the $r$ largest singular values of $B$, this procedure results in a rank-$r$ matrix $\hat{B}$ which approximates $B$. If the singular values that we zeroed out were tiny, then $\hat{B}$ will be very close to $B$ and the low-rank approximation is accurate. This matrix $\hat{B}$ is called an $r$-truncated singular value decomposition of $B$, and it is easy to represent it using a rank factorization once we have already computed an SVD of $B$.
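A truncated SVD can be sketched as follows. The function name and the test matrix (a rank-5 matrix plus small noise) are assumptions for illustration. The check at the end uses a standard fact about the truncated SVD: measured in the spectral norm, the approximation error equals the largest singular value that was discarded.

```python
import numpy as np


def truncated_svd_factors(B, r):
    """Return (L, R, s): factors of the r-truncated SVD, L @ R.T, and all singular values."""
    U, s, Vt = np.linalg.svd(B, full_matrices=False)
    L = U[:, :r] * s[:r]   # scale the first r columns of U by the kept singular values
    R = Vt[:r, :].T        # first r right singular vectors, stored as an n x r matrix
    return L, R, s


rng = np.random.default_rng(6)
m, n, r = 60, 40, 5
exact_low_rank = rng.standard_normal((m, r)) @ rng.standard_normal((r, n))
B = exact_low_rank + 1e-6 * rng.standard_normal((m, n))  # nearly rank 5

L, R, s = truncated_svd_factors(B, r)
B_hat = L @ R.T
spectral_error = np.linalg.norm(B - B_hat, 2)
print(np.linalg.matrix_rank(B), spectral_error, s[r])
```

Because of the added noise, the matrix itself has full numerical rank, yet the rank-5 approximation is accurate to roughly the noise level.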

It is important to remember that low-rank approximations are, just as the name says, approximations. Not every matrix is well-approximated by one of small rank. A matrix may be excellently approximated by a rank-100 matrix and horribly approximated by a rank-90 matrix. If an algorithm uses a low-rank approximation as a building block, then the approximation error (the difference between $B$ and its low-rank approximation $\hat{B}$) and its propagation through further steps of the algorithm need to be analyzed and controlled along with other sources of error in the procedure.

Despite this caveat, low-rank approximations can be startlingly effective. Many matrices occurring in practice can be approximated to negligible error by a matrix with very modestly-sized rank. We shall return to this surprising ubiquity of approximately low-rank matrices at the end of the article.

We’ve seen one method for computing low-rank approximations, the truncated singular value decomposition. As we shall see in the next section, the truncated singular value decomposition produces excellent low-rank approximations, the best possible in a certain sense, in fact. As we mentioned above, almost every matrix factorization can be used to compute rank factorizations. Can these matrix factorizations also compute high quality low-rank approximations?

Let’s consider a specific example to see the underlying ideas. Say we want to compute a low-rank approximation to a matrix $B$ by a QR factorization. To do this, we want to compute a QR factorization $B = QR$ and then throw away all but the first $k$ columns of $Q$ and the first $k$ rows of $R$. This will be a good approximation if the rows we discard from $R$ are “small” compared to the rows of $R$ we keep. Unfortunately, this is not always the case. As a worst-case example, if the first $k$ columns of $B$ are zero, then the first $k$ columns of $R$ are zero, the first $k$ columns of $Q$ carry no information about $B$, and the low-rank approximation computed this way is typically worthless.
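The failure mode just described can be reproduced numerically. The following is a minimal NumPy sketch, not a recommended algorithm: the helper names `truncated_qr`, `truncated_svd`, and `relative_error` are made up for illustration, and the test matrix is an assumption (rank $k$ up to tiny perturbations, with leading columns scaled by `1e-10` rather than exactly zero so that the QR factorization is unambiguous).

```python
import numpy as np

def truncated_qr(B, k):
    """Keep the first k columns of Q and the first k rows of R (no pivoting)."""
    Q, R = np.linalg.qr(B)              # reduced QR factorization, B = Q @ R
    return Q[:, :k] @ R[:k, :]

def truncated_svd(B, k):
    """Keep the k largest singular values and their singular vectors."""
    U, s, Vt = np.linalg.svd(B, full_matrices=False)
    return (U[:, :k] * s[:k]) @ Vt[:k, :]

def relative_error(B, B_hat):
    """Relative error in the Frobenius norm."""
    return np.linalg.norm(B - B_hat) / np.linalg.norm(B)

rng = np.random.default_rng(0)
m, n, k = 50, 40, 5

# Rank-k matrix whose first k columns are then overwritten by tiny noise.
B = rng.standard_normal((m, k)) @ rng.standard_normal((k, n))
B[:, :k] = 1e-10 * rng.standard_normal((m, k))

qr_error = relative_error(B, truncated_qr(B, k))
svd_error = relative_error(B, truncated_svd(B, k))
print(f"unpivoted QR, rank {k}:  relative error {qr_error:.2e}")
print(f"truncated SVD, rank {k}: relative error {svd_error:.2e}")
```

On this example the unpivoted QR approximation should miss most of the matrix, while the truncated singular value decomposition recovers it almost exactly, even though both approximations have the same rank.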

We need to modify something to give QR factorization a fighting chance for computing good low-rank approximations. The simplest way to do this is by using column pivoting, where we shuffle the columns of $B$ around to bring columns of the largest size “to the front of the line” as we compute the QR factorization. QR factorization with column pivoting produces excellent low-rank approximations in a large number of cases, but it can still give poor-quality approximations for some special examples. For this reason, numerical analysts have developed so-called strong rank-revealing QR factorizations, such as the one developed by Gu and Eisenstat, which are guaranteed to compute quite good low-rank approximations for every matrix $B$. Similarly, there exist strong rank-revealing LU factorizations which can compute good low-rank approximations using LU factorization.
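To make the idea of column pivoting concrete, here is a small sketch that runs $k$ steps of a greedy, column-pivoted Gram–Schmidt process in NumPy. It is an illustration under simplifying assumptions, not the Gu–Eisenstat strong rank-revealing algorithm and not a production routine; the function name `pivoted_qr_lowrank` and the test matrix are hypothetical. In practice one would more likely call a library routine (for instance, SciPy's `scipy.linalg.qr` accepts `pivoting=True`).

```python
import numpy as np

def pivoted_qr_lowrank(B, k):
    """Rank-k approximation B ~ Q @ R from k steps of column-pivoted Gram-Schmidt.

    Returns Q (orthonormal columns) and R = Q.T @ B.
    """
    residual = np.array(B, dtype=float)        # working copy; columns get deflated
    m, n = residual.shape
    Q = np.zeros((m, k))
    for step in range(k):
        col_norms = np.linalg.norm(residual, axis=0)
        pivot = int(np.argmax(col_norms))      # bring the largest column "to the front"
        if col_norms[pivot] == 0.0:            # B has rank below k: stop early
            Q = Q[:, :step]
            break
        q = residual[:, pivot] / col_norms[pivot]
        Q[:, step] = q
        residual -= np.outer(q, q @ residual)  # remove the q-direction from every column
    return Q, Q.T @ B

rng = np.random.default_rng(0)
m, n, k = 50, 40, 5

# Same kind of hard case as for unpivoted QR: tiny leading columns.
B = rng.standard_normal((m, k)) @ rng.standard_normal((k, n))
B[:, :k] = 1e-10 * rng.standard_normal((m, k))

Q, R = pivoted_qr_lowrank(B, k)
pivoted_error = np.linalg.norm(B - Q @ R) / np.linalg.norm(B)
print(f"pivoted QR, rank {k}: relative error {pivoted_error:.2e}")
```

Because the pivoting rule skips over the tiny leading columns, the rank-$k$ approximation on this example should be accurate to roughly the size of the perturbation.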

The upshot is that most matrix factorizations you know and love can be used to compute good-quality low-rank approximations, possibly requiring extra tricks like row or column pivoting. But this simple summary, and the previous discussion, leaves open important questions: what do we mean by good-quality low-rank approximations? How good can a low-rank approximation be?

Best Low-rank Approximation

As we saw in the last section, one way to approximate a matrix by a lower rank matrix is by a truncated singular value decomposition. In fact, in some sense, this is the best way of approximating a matrix by one of lower rank. This fact is encapsulated in a theorem commonly referred to as the Eckart–Young theorem, though the essence of the result is originally due to Schmidt and the modern version of the result to Mirsky. (Footnote 10: A nice history of the Eckart–Young theorem is provided in the book Matrix Perturbation Theory by Stewart and Sun.)

But what do we mean by best approximation? One ingredient we need is a way of measuring how big the discrepancy between two matrices is. Let’s define a measure of the size of a matrix $B$, which we will call $B$’s norm and denote as $\|B\|$. If $B$ is a matrix and $\hat{B}$ is a low-rank approximation to it, then $\hat{B}$ is a good approximation to $B$ if the norm $\|B - \hat{B}\|$ is small. There might be many different ways of measuring the size of the error, but we have to insist on a couple of properties on our norm $\|\cdot\|$ for it to really define a sensible measure of size. For instance, if the norm of a matrix $B$ is $\alpha$, then the norm of $2B$ should be $2\alpha$. The properties we require a norm to have are listed on the Wikipedia page for norms.

We shall also insist on one more property for our norm: the norm should be unitarily invariant. (Footnote 11: Note that every unitarily invariant norm is a special type of vector norm, called a symmetric gauge function, evaluated on the singular values of the matrix.) What this means is the norm of a matrix $B$ remains the same if it is multiplied on the left or right by an orthogonal matrix. This property is reasonable since multiplication by orthogonal matrices geometrically represents a rotation or reflection (footnote 12: this is not true in dimensions higher than 2, but it gives the right intuition that orthogonal matrices preserve distances), which preserves distances between points, so it makes sense that we should demand that the size of a matrix as measured by our norm does not change by such multiplications.

Two important and popular matrix norms satisfy the unitarily invariant property: the Frobenius norm $\|B\|_{\rm F} = \sqrt{\sum_{ij} |B_{ij}|^2}$ and the spectral (or operator 2-) norm $\|B\|_{\rm op}$, which measures the largest singular value. (Footnote 13: Both the Frobenius and spectral norms are examples of an important subclass of unitarily invariant norms called Schatten norms. Another example of a Schatten norm, important in matrix completion, is the nuclear norm, the sum of the singular values.)
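Unitary invariance is easy to check numerically. The sketch below, which assumes NumPy and uses an arbitrary random test matrix, multiplies a matrix on the left and right by random orthogonal matrices and compares the Frobenius, spectral, and nuclear norms before and after. For contrast, it also prints the largest entry in absolute value, which is a norm but is not unitarily invariant.

```python
import numpy as np

rng = np.random.default_rng(1)
B = rng.standard_normal((6, 4))

# Random orthogonal matrices, obtained as Q factors of Gaussian matrices.
U, _ = np.linalg.qr(rng.standard_normal((6, 6)))
V, _ = np.linalg.qr(rng.standard_normal((4, 4)))
rotated = U @ B @ V

norms_before = {}
norms_after = {}
for name, order in [("Frobenius", "fro"), ("spectral", 2), ("nuclear", "nuc")]:
    norms_before[name] = np.linalg.norm(B, order)
    norms_after[name] = np.linalg.norm(rotated, order)
    print(f"{name:9s}: {norms_before[name]:.12f} -> {norms_after[name]:.12f}")

# The maximum entrywise absolute value generally changes under rotation.
print(f"max entry: {np.abs(B).max():.12f} -> {np.abs(rotated).max():.12f}")
```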

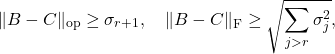

With this preliminary out of the way, the Eckart–Young theorem states that the singular value decomposition of $B$ truncated to rank $k$ is the closest to $B$ of all rank-$k$ matrices $C$ when distances are measured using any unitarily invariant norm $\|\cdot\|$. If we let $B_k$ denote the $k$-truncated singular value decomposition of $B$, then the Eckart–Young theorem states that

(5) $$\|B - B_k\| \le \|B - C\| \quad \text{for every rank-}k\text{ matrix } C.$$

Less precisely, the $k$-truncated singular value decomposition is the best rank-$k$ approximation to a matrix.

Let’s unpack the Eckart–Young theorem using the spectral and Frobenius norms. In this context, a brief calculation and the Eckart–Young theorem prove that for any rank-$k$ matrix $C$, we have

(6) $$\|B - C\|_{\rm op} \ge \sigma_{k+1} \quad \text{and} \quad \|B - C\|_{\rm F} \ge \sqrt{\sum_{j > k} \sigma_j^2},$$

where $\sigma_1 \ge \sigma_2 \ge \cdots$ are the singular values of $B$, with equality attained by $C = B_k$. This bound is quite intuitive. When we measure the error in the spectral norm, the error in low-rank approximation is “small” when each singular value we zero out is “small”. When we measure error in the Frobenius norm, the error in low-rank approximation is “small” when all of the singular values we zero out are “small” in aggregate when squared and added together.
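These error formulas can be checked directly. The following NumPy sketch, on an arbitrary random matrix chosen purely for illustration, compares the errors of the truncated singular value decomposition with the singular values that were zeroed out, and then compares against one other rank-$k$ competitor (a projection onto a random $k$-dimensional subspace, an arbitrary choice made here).

```python
import numpy as np

rng = np.random.default_rng(2)
m, n, k = 30, 20, 5
B = rng.standard_normal((m, n))

# Singular values come back sorted in decreasing order.
U, s, Vt = np.linalg.svd(B, full_matrices=False)
B_k = (U[:, :k] * s[:k]) @ Vt[:k, :]

spec_err = np.linalg.norm(B - B_k, 2)
frob_err = np.linalg.norm(B - B_k, "fro")
spec_pred = s[k]                          # sigma_{k+1} with 0-based indexing
frob_pred = np.sqrt(np.sum(s[k:] ** 2))   # root of the sum of discarded sigma_j^2
print(f"spectral:  {spec_err:.6f} (predicted {spec_pred:.6f})")
print(f"Frobenius: {frob_err:.6f} (predicted {frob_pred:.6f})")

# A competing matrix of rank at most k: project B onto a random k-dimensional subspace.
W, _ = np.linalg.qr(rng.standard_normal((m, k)))
C = W @ (W.T @ B)
print(f"competitor spectral error:  {np.linalg.norm(B - C, 2):.6f}")
print(f"competitor Frobenius error: {np.linalg.norm(B - C, 'fro'):.6f}")
```

The competitor's errors should come out no smaller than those of the truncated singular value decomposition, as the theorem requires.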

The Eckart–Young theorem shows that possessing a good low-rank approximation is equivalent to the singular values rapidly decaying. (Footnote 14: At least when measured in unitarily invariant norms. A surprising result shows that even the identity matrix, whose singular values are all equal to one, has good low-rank approximations in the maximum entrywise absolute value norm; see, e.g., Theorem 1.0 in this article.) If a matrix does not have nice singular value decay, no good low-rank approximation exists, computed by the $k$-truncated SVD or otherwise.

Why Are So Many Matrices (Approximately) Low-rank?

As we’ve seen, we can perform computations with low-rank matrices represented using rank factorizations much faster than general matrices. But all of this would be a moot point if low-rank matrices rarely occurred in practice. But in fact precisely the opposite is true: Approximately low-rank matrices occur all the time in practice.

Sometimes, exact low-rank matrices appear for algebraic reasons. For instance, when we perform one step of Gaussian elimination to compute an $LU$ factorization, the lower right portion of the eliminated matrix, the so-called Schur complement, is a rank-one update to the original matrix. In such cases, a rank-$k$ matrix might appear in a computation when one performs $k$ steps of some algebraic process: The appearance of low-rank matrices in such cases is unsurprising.
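As a small illustration of this algebraic mechanism, the sketch below performs Gaussian elimination without pivoting in NumPy and checks the rank of the difference between the trailing block of the original matrix and the Schur complement. The helper name `eliminate`, the test matrix, and the rank tolerance `1e-10` are assumptions made for this example.

```python
import numpy as np

def eliminate(A, steps):
    """Run `steps` steps of Gaussian elimination (no pivoting) and
    return the trailing Schur complement."""
    S = np.array(A, dtype=float)
    for _ in range(steps):
        pivot, row, col = S[0, 0], S[0, 1:], S[1:, 0]
        S = S[1:, 1:] - np.outer(col, row) / pivot   # rank-one update per step
    return S

rng = np.random.default_rng(3)
n, k = 8, 3
# Shift the diagonal so that the pivots stay safely away from zero.
A = rng.standard_normal((n, n)) + n * np.eye(n)

update_one = A[1:, 1:] - eliminate(A, 1)
update_k = A[k:, k:] - eliminate(A, k)
rank_one_step = np.linalg.matrix_rank(update_one, tol=1e-10)
rank_k_steps = np.linalg.matrix_rank(update_k, tol=1e-10)
print(f"rank of the update after 1 step:  {rank_one_step}")
print(f"rank of the update after {k} steps: {rank_k_steps}")
```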

However, often, matrices appearing in applications are (approximately) low-rank for analytic reasons instead. Consider the weather example from the start again. One might reasonably model the temperature on Earth as a smooth function ![]() of position

of position ![]() and time

and time ![]() . If we then let

. If we then let ![]() denote the position on Earth of station

denote the position on Earth of station ![]() and

and ![]() the time representing the

the time representing the ![]() th day of a given year, then the entries of the

th day of a given year, then the entries of the ![]() matrix are given by

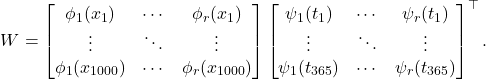

matrix are given by ![]() . As discussed in my article on smoothness and degree of approximation, a smooth function function of one variable can be excellently approximated by, say, a polynomial of low degree. Analogously, a smooth function depending on two arguments, such as our function

. As discussed in my article on smoothness and degree of approximation, a smooth function function of one variable can be excellently approximated by, say, a polynomial of low degree. Analogously, a smooth function depending on two arguments, such as our function ![]() , can be excellently be approximated by a separable expansion of rank

, can be excellently be approximated by a separable expansion of rank ![]() :

:

(7) $$f(x,t) \approx \sum_{\ell=1}^k g_\ell(x)\, h_\ell(t).$$

Similar to functions of a single variable, the degree to which a function $f$ can be approximated by a separable function of small rank depends on the degree of smoothness of the function $f$. Assuming the function $f$ is quite smooth, $f$ can be approximated by a separable expansion of small rank $k$. This leads immediately to a low-rank approximation to the matrix $B$ given by the rank factorization

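(8) $$B \approx LR^\top, \quad \text{where } L_{i\ell} = g_\ell(x_i) \text{ and } R_{j\ell} = h_\ell(t_j).$$

Here $g_\ell$ and $h_\ell$ denote the one-variable factors of the separable expansion (7).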

Thus, in the context of our weather example, we see that the data matrix can be expected to be low-rank under the reasonable-sounding assumption that the temperature depends smoothly on space and time.

What does this mean in general? Let’s speak informally. Suppose that the $ij$th entries of a matrix $B$ are samples $f(x_i,y_j)$ from a smooth function $f$ for points $x_i$ and $y_j$. Then we can expect that $B$ will be approximately low-rank. From a computational point of view, we don’t need to know a separable expansion for the function $f$ or even the form of the function $f$ itself: If the smooth function $f$ exists and $B$ is sampled from it, then $B$ is approximately low-rank and we can find a low-rank approximation for $B$ using the truncated singular value decomposition.

(Footnote 15: Note here an important subtlety. A more technically precise version of what we’ve stated here is that: if $f(x,y)$ depending on inputs $x$ and $y$ is sufficiently smooth for $(x,y)$ in the product of compact regions $\Omega_x$ and $\Omega_y$, then an $m\times n$ matrix $B_{ij} = f(x_i,y_j)$ with $x_i \in \Omega_x$ and $y_j \in \Omega_y$ will be low-rank in the sense that it can be approximated to accuracy $\epsilon$ by a rank-$k$ matrix where $k$ grows slowly as $m$ and $n$ increase and $\epsilon$ decreases. Note that, phrased this way, the low-rank property of $B$ is asymptotic in the size $m$ and $n$ and the accuracy $\epsilon$. If $f$ is not smooth on the entirety of the domain $\Omega_x \times \Omega_y$ or the size of the domains $\Omega_x$ and $\Omega_y$ grow with $m$ and $n$, these asymptotic results may no longer hold. And if $m$ and $n$ are small enough or the requested accuracy is stringent enough (that is, $1/\epsilon$ is large enough), $B$ may not be well approximated by a matrix of small rank. Only when there are enough rows and columns will meaningful savings from low-rank approximation be possible.)
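The informal claim can be tested on a toy example. In the NumPy sketch below, the smooth function `f`, the sample points, the tolerance `eps`, and the helper `numerical_rank` are all hypothetical choices made for illustration. The sketch samples `f` on grids of increasing size and counts how many singular values exceed `eps` times the largest one, which is the rank needed by the truncated singular value decomposition to reach relative spectral-norm accuracy `eps`. A Gaussian random matrix, which is not sampled from a smooth function, is included for comparison.

```python
import numpy as np

def numerical_rank(B, eps):
    """Smallest k for which the k-truncated SVD has relative spectral error <= eps."""
    s = np.linalg.svd(B, compute_uv=False)   # sorted in decreasing order
    return int(np.sum(s > eps * s[0]))

def f(x, y):
    """A hypothetical smooth function of two variables."""
    return np.exp(-(x - y) ** 2)

rng = np.random.default_rng(4)
eps = 1e-8

smooth_ranks = {}
for m, n in [(50, 40), (200, 150), (800, 600)]:
    x = np.linspace(0.0, 1.0, m)             # sample points x_i in [0, 1]
    y = np.linspace(0.0, 1.0, n)             # sample points y_j in [0, 1]
    B = f(x[:, None], y[None, :])            # B[i, j] = f(x_i, y_j)
    smooth_ranks[(m, n)] = numerical_rank(B, eps)
    print(f"{m} x {n} samples of f: numerical rank {smooth_ranks[(m, n)]}")

random_rank = numerical_rank(rng.standard_normal((200, 150)), eps)
print(f"200 x 150 Gaussian matrix: numerical rank {random_rank}")
```

The numerical rank of the sampled matrices should stay small and nearly constant as the grids are refined, whereas the random matrix has full numerical rank.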

This “smooth function” explanation for the prevalence of low-rank matrices is the reason for the appearance of low-rank matrices in fast multipole method-type fast algorithms in computational physics and has been proposed as a general explanation for the prevalence of low-rank matrices in data science. (Footnote 16: The article proposing this considers piecewise analytic functions rather than smooth functions; the principle is more-or-less the same.)

(The low-rank structure of highly structured matrices like Hankel, Toeplitz, and Cauchy matrices, which appear in control theory applications, has a different explanation involving a certain Sylvester equation; see this lecture for a great explanation. Footnote 17: Computations with these matrices can often also be accelerated with other approaches than low-rank structure; see my post on the fast Fourier transform for a discussion of fast Toeplitz matrix-vector products.)

Upshot: A matrix is low-rank if it has many fewer linearly independent columns than columns. Such matrices can be efficiently represented using rank factorizations, which can be used to perform various computations rapidly. Many matrices appearing in applications which are not genuinely low-rank can be well-approximated by low-rank matrices; the best possible such approximation is given by the truncated singular value decomposition. The prevalence of low-rank matrices in diverse application areas can partially be explained by noting that matrices sampled from smooth functions are approximately low-rank.

I have been looking for motivations for why low-rank matrices are natural and wasn’t able to find any satisfactory answers. Thank you very much for your excellent tutorial!

Excellent tutorial!

Could you describe what phi is? I could not find a definition of it in the blog post. It seems perhaps it is related to location i, but then it would be subscripted. Perhaps it is just used to correct alignment between day 1 and peak of sinusoid.

Thanks for the question, Paul. You’re absolutely right: ϕ in equation (1) is a phase shift chosen to make the peaks of the sinusoid occur at the hottest (coldest) days of the year. I’ve edited the text to make this clear.

Amazing post! The examples and explanations are superb.

Superb blog post!