In this post, I want to discuss a beautiful and somewhat subtle matrix factorization known as the Vandermonde decomposition that appears frequently in signal processing and control theory. We’ll begin from the very basics, introducing the controls-and-signals context, how the Vandermonde decomposition comes about, and why it’s useful. By the end, I’ll briefly share how we can push the Vandermonde decomposition beyond matrices to the realm of tensors, which will can allow us to separate mixed signals from multiple measurements. This tensorial generalization plays an important role in my paper ![]() -decompositions, sparse component analysis, and the blind separation of sums of exponentials, joint work with Nithin Govindajaran and Lieven De Lathauwer, which recently appeared in the SIAM Journal of Matrix Analysis and Applications.

-decompositions, sparse component analysis, and the blind separation of sums of exponentials, joint work with Nithin Govindajaran and Lieven De Lathauwer, which recently appeared in the SIAM Journal of Matrix Analysis and Applications.

Finding the Frequencies

Suppose I give you a short recording of a musical chord consisting of three notes. How could you determine which three notes they were? Mathematically, we can represent such a three-note chord as a combination of scaled and shifted cosine functions

(1) ![]()

We are interested in obtaining the (angular) frequencies ![]() ,

, ![]() , and

, and ![]() .

.

In the extreme limit, when we are given the values of the signal for all ![]() , both positive and negative, the frequencies are immediately given by taking a Fourier transform of the function

, both positive and negative, the frequencies are immediately given by taking a Fourier transform of the function ![]() . In practice, we only have access to the function

. In practice, we only have access to the function ![]() at certain times

at certain times ![]() which we assume are equally spaced

which we assume are equally spaced

![]()

Given the samples

![]()

we could try to identify ![]() ,

, ![]() , and

, and ![]() using a discrete Fourier transform.1The discrete Fourier transform can be computed very quickly using the fast Fourier transform, as I discussed in a previous post. Unfortunately, this generally requires a large number of samples to identify

using a discrete Fourier transform.1The discrete Fourier transform can be computed very quickly using the fast Fourier transform, as I discussed in a previous post. Unfortunately, this generally requires a large number of samples to identify ![]() ,

, ![]() , and

, and ![]() accurately. (The accuracy scales roughly like

accurately. (The accuracy scales roughly like ![]() , where

, where ![]() is the number of samples.) We are interested in finding a better way to identify the frequencies.

is the number of samples.) We are interested in finding a better way to identify the frequencies.

Now that we’ve moved from the function ![]() , defined for any real input

, defined for any real input ![]() , to a set of samples

, to a set of samples ![]() it will be helpful to rewrite our formula (1) for

it will be helpful to rewrite our formula (1) for ![]() in a different way. By Euler’s identity, the cosines can be rewritten as

in a different way. By Euler’s identity, the cosines can be rewritten as

![]()

As a consequence, we can rewrite one of the frequency components in (1) as

![]()

Here, ![]() and

and ![]() are complex coefficients

are complex coefficients ![]() and

and ![]() which contain the same information as the original parameters

which contain the same information as the original parameters ![]() and

and ![]() . Now notice that we are only interest in values

. Now notice that we are only interest in values ![]() which are multiples of the spacing

which are multiples of the spacing ![]() . Thus, our frequency component can be further rewritten as

. Thus, our frequency component can be further rewritten as

![]()

where ![]() and

and ![]() . Performing these reductions, our samples

. Performing these reductions, our samples ![]() take the form

take the form

(2) ![]()

We’ve now reformulated our frequency problems in identifying the parameters ![]() and

and ![]() in the relation (2) from a small number of measurements

in the relation (2) from a small number of measurements ![]() .

.

Frequency Finding as a Matrix Factorization

We will return to the algorithmic problem of identifying the parameters in the relation (2) from measurements in a little bit. First, we will see that (2) can actually be written as a matrix factorization. Understanding computations by matrix factorization has been an extremely successful paradigm in applied mathematics, and we will see in this post how viewing (2) as a matrix factorization can be very useful.

While it may seem odd at first,2As pointed out to me on math stack exchange, one reason forming the Hankel matrix is sensible is because it effectively augments the sequence of numbers ![]() into a sequence of vectors given by the columns of

into a sequence of vectors given by the columns of ![]() . This can reveal patterns in the sequence which are less obvious when it is represented as given just as numbers. For instance, any seven columns of

. This can reveal patterns in the sequence which are less obvious when it is represented as given just as numbers. For instance, any seven columns of ![]() are linearly dependent, a surprising fact since the columns of

are linearly dependent, a surprising fact since the columns of ![]() have length

have length ![]() which can be much larger than seven. In addition, as we will soon effectively exploit later, vectors in the nullspace of

which can be much larger than seven. In addition, as we will soon effectively exploit later, vectors in the nullspace of ![]() (or related Hankel matrices derived from the sequence) give recurrence relations obeyed by the sequence. This speaks to a general phenomenon where properties of sequence (say, arising from snapshots of a dynamical system) can sometimes become more clear by this procedure of delay embedding. it will be illuminating to repackage the measurements

(or related Hankel matrices derived from the sequence) give recurrence relations obeyed by the sequence. This speaks to a general phenomenon where properties of sequence (say, arising from snapshots of a dynamical system) can sometimes become more clear by this procedure of delay embedding. it will be illuminating to repackage the measurements ![]() as a matrix:

as a matrix:

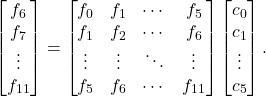

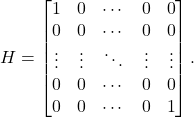

(3) ![Rendered by QuickLaTeX.com \[H = \begin{bmatrix} f_0 & f_1 & f_2 & \cdots & f_{(n-1)/2} \\f_1 & f_2 & f_3 & \cdots & f_{(n-1)/2+1} \\f_2 & f_3 & f_4 & \cdots & f_{(n-1)/2+2} \\\vdots & \vdots & \vdots & \ddots & \vdots \\f_{(n-1)/2} & f{(n-1)/2+1} & f{(n-1)/2+2} & \cdots & f_{n-1} \end{bmatrix}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-e58f400c13a096efb5c1682c52003056_l3.png)

Here, we have assumed ![]() is odd. The matrix

is odd. The matrix ![]() is known as the Hankel matrix associated with the sequence

is known as the Hankel matrix associated with the sequence ![]() . Observe that the entry in position

. Observe that the entry in position ![]() of

of ![]() depends only on the sum of the indices

depends only on the sum of the indices ![]() and

and ![]() ,

, ![]() . (We use a zero-indexing system to label the rows and columns of

. (We use a zero-indexing system to label the rows and columns of ![]() where, for instance, the first row of

where, for instance, the first row of ![]() is row

is row ![]() .)

.)

Let’s see how we can interpret the frequency decomposition (2) as a factorization of the Hankel matrix ![]() . We first write out

. We first write out ![]() using (2):

using (2):

(4) ![Rendered by QuickLaTeX.com \[H_{ij} = f_{i+j} = \sum_{k=0}^5 d_k z_k^{i+j} = \sum_{k=0}^5 d_k z_k^i \cdot z_k^j. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-c66cda6c8df0e635353009a6469c9d13_l3.png)

The power ![]() was just begging to be factorized as

was just begging to be factorized as ![]() , which we did. Equation (4) almost looks like the formula for the product of two matrices with entries

, which we did. Equation (4) almost looks like the formula for the product of two matrices with entries ![]() , so it makes sense to introduce the

, so it makes sense to introduce the ![]() matrix

matrix ![]() with entry

with entry ![]() . This is a so-called Vandermonde matrix associated with

. This is a so-called Vandermonde matrix associated with ![]() and has the form

and has the form

![Rendered by QuickLaTeX.com \[V = \begin{bmatrix}z_0^0 & z_0^1 & z_0^2 & \cdots & z_0^{(n-1)/2} \\z_1^0 & z_1^1 & z_1^2 & \cdots & z_1^{(n-1)/2} \\\vdots & \vdots & \vdots & \ddots & \vdots \\z_5^0 & z_5^1 & z_5^2 & \cdots & z_5^{(n-1)/2}\end{bmatrix}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-52248d150045d64416cf49a569abf156_l3.png)

If we also introduce the ![]() diagonal matrix

diagonal matrix ![]() , the formula (4) for

, the formula (4) for ![]() can be written as the matrix factorization3In the Vandermonde decomposition

can be written as the matrix factorization3In the Vandermonde decomposition ![]() , the factor

, the factor ![]() appears transposed even when

appears transposed even when ![]() is populated with complex numbers! This differs from the usual case in linear algebra where we use the conjugate transpose rather than the ordinary transpose when working with complex matrices. As a related issue, observe that if at least one of the measurements

is populated with complex numbers! This differs from the usual case in linear algebra where we use the conjugate transpose rather than the ordinary transpose when working with complex matrices. As a related issue, observe that if at least one of the measurements ![]() is a (non-real) complex number, the Hankel matrix

is a (non-real) complex number, the Hankel matrix ![]() is symmetric but not Hermitian.

is symmetric but not Hermitian.

(5) ![]()

This is the Vandermonde decomposition of the Hankel matrix ![]() , a factorization of

, a factorization of ![]() as a product of the transpose of a Vandermonde matrix, a diagonal matrix, and that same Vandermonde matrix.

as a product of the transpose of a Vandermonde matrix, a diagonal matrix, and that same Vandermonde matrix.

The Vandermonde decomposition immediately tells us all the information ![]() and

and ![]() describing our sampled recording

describing our sampled recording ![]() via (2). Thus, the problem of determining

via (2). Thus, the problem of determining ![]() and

and ![]() is equivalent to finding the Vandermonde decomposition (5) of the Hankel matrix

is equivalent to finding the Vandermonde decomposition (5) of the Hankel matrix ![]() .

.

Computing the Vandermonde Decomposition: Prony’s Method

Computing the Vandermonde decomposition accurately can be a surprisingly hard task, particularly if the measurements ![]() are corrupted by even a small amount of measurement error. In view of this, I want to present a very classical way of computing this decomposition (dating back to 1795!) known as Prony’s method. This method is conceptually simple and will be a vehicle to continue exploring frequency finding and its connection with Hankel matrices. It remains in use, though it’s accuracy may be significantly worse compared to other methods.

are corrupted by even a small amount of measurement error. In view of this, I want to present a very classical way of computing this decomposition (dating back to 1795!) known as Prony’s method. This method is conceptually simple and will be a vehicle to continue exploring frequency finding and its connection with Hankel matrices. It remains in use, though it’s accuracy may be significantly worse compared to other methods.

As a first step to deriving Prony’s method, let’s reformulate the frequency finding problem in a different way. Sums of cosines like the ones in our expression (1) for the function ![]() often appear as the solution to a (linear) ordinary differential equation (ODE). This means that one way we could find the frequencies comprising

often appear as the solution to a (linear) ordinary differential equation (ODE). This means that one way we could find the frequencies comprising ![]() would be to find a differential equation which

would be to find a differential equation which ![]() satisfies. Together with the initial condition

satisfies. Together with the initial condition ![]() , determining all the frequencies would be very straightforward.

, determining all the frequencies would be very straightforward.

Since we only have access to samples ![]() of

of ![]() at regular time intervals, we will instead look for the “discrete-time” analog of a linear ODE, a linear recurrence relation. This is an expression of the form

at regular time intervals, we will instead look for the “discrete-time” analog of a linear ODE, a linear recurrence relation. This is an expression of the form

(6) ![]()

In our case, we’ll have ![]() because there are six terms in the formula (2) for

because there are six terms in the formula (2) for ![]() . Together with initial conditions

. Together with initial conditions ![]() , such a recurrence will allow us to determine the parameters

, such a recurrence will allow us to determine the parameters ![]() and

and ![]() in our formula (2) for our sampled recordings

in our formula (2) for our sampled recordings ![]() and hence also allow us to compute the Vandermonde decomposition (5).

and hence also allow us to compute the Vandermonde decomposition (5).

Observe that the recurrence (6) is a linear equation in the variables ![]() . A very good rule of thumb in applied mathematics is to always write down linear equations in matrix–vector notation in see how it looks. Doing this, we obtain

. A very good rule of thumb in applied mathematics is to always write down linear equations in matrix–vector notation in see how it looks. Doing this, we obtain

(7) ![Rendered by QuickLaTeX.com \[\begin{bmatrix} f_6 \\ f_7 \\ \vdots \\ f_{n-1} \end{bmatrix} = \underbrace{\begin{bmatrix} f_0 & f_1 & f_2 & f_3 & f_4 & f_5 \\ f_1 & f_2 & f_3 & f_4 & f_5 & f_6 \\ \vdots & \vdots & \vdots & \vdots & \vdots & \vdots \\ f_{n-7} & f_{n-6} & f_{n-5} & f_{n-4} & f_{n-3} & f_{n-2} \end{bmatrix}}_{=F}\begin{bmatrix} c_0 \\ c_1 \\ \vdots \\ c_5 \end{bmatrix}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-7d7dc3e345c47a62fa233007d8d0db69_l3.png)

Observe that the matrix on the right-hand side of this equation is also a Hankel matrix (like ![]() in (3)) formed from the samples

in (3)) formed from the samples ![]() . Call this Hankel matrix

. Call this Hankel matrix ![]() . Unlike

. Unlike ![]() in (3),

in (3), ![]() is rectangular. If

is rectangular. If ![]() is much larger than

is much larger than ![]() ,

, ![]() will be tall, possessing many more rows than columns. We assume

will be tall, possessing many more rows than columns. We assume ![]() going forward.4

going forward.4![]() would also be fine for our purposes, but we assume

would also be fine for our purposes, but we assume ![]() to illustrate this highly typical case.

to illustrate this highly typical case.

Let’s write (7) a little more compactly as

(8) ![]()

where we’ve introduced ![]() for the vector on the left-hand side of (7) and collected the recurrence coefficients

for the vector on the left-hand side of (7) and collected the recurrence coefficients ![]() into a vector

into a vector ![]() . For a typical system of linear equations like (8), we would predict the system to have no solution

. For a typical system of linear equations like (8), we would predict the system to have no solution ![]() : Because

: Because ![]() has more rows than columns (if

has more rows than columns (if ![]() ), the system equations (8) has more equations than unknowns. Fortunately, we are not in the typical case. Despite the fact that we have more equations than unknowns, the linear equations (8) have a unique solution

), the system equations (8) has more equations than unknowns. Fortunately, we are not in the typical case. Despite the fact that we have more equations than unknowns, the linear equations (8) have a unique solution ![]() .5This solution can be computed by solving the

.5This solution can be computed by solving the ![]() system of linear equations

system of linear equations  In particular, the matrix on the right-hand side of this equation is guaranteed to be nonsingular under our assumptions. Using the Vandermonde decomposition, can you see why? The existence of a unique solution is a consequence of the fact that the samples

In particular, the matrix on the right-hand side of this equation is guaranteed to be nonsingular under our assumptions. Using the Vandermonde decomposition, can you see why? The existence of a unique solution is a consequence of the fact that the samples ![]() satisfy the formula (2). As a fun exercise, you might want to verify the existence of a unique

satisfy the formula (2). As a fun exercise, you might want to verify the existence of a unique ![]() satisfying (8)!

satisfying (8)!

As a quick aside, if the measurements ![]() are corrupted by small measurement errors, then the equations (8) will usually not possess a solution. In this case, it would be appropriate to find the least squares solution to equation (8) as a way of mitigating these errors.

are corrupted by small measurement errors, then the equations (8) will usually not possess a solution. In this case, it would be appropriate to find the least squares solution to equation (8) as a way of mitigating these errors.

Hurrah! We’ve found the coefficients ![]() providing a recurrence relation (6) for our measurements

providing a recurrence relation (6) for our measurements ![]() . All that is left is to find the parameters

. All that is left is to find the parameters ![]() and

and ![]() in our signal formula (2) and the Vandermonde decomposition (5). Fortunately, this is just a standard computation for linear recurrence relations, nicely paralleling the solution of (homogenous) linear ODEs by means of the so-called “characteristic equation”. I’ll go through fairly quickly since this material is well-explained elsewhere on the internet (like Wikipedia). Let’s guess that our recurrence (6) has a solution of the form

in our signal formula (2) and the Vandermonde decomposition (5). Fortunately, this is just a standard computation for linear recurrence relations, nicely paralleling the solution of (homogenous) linear ODEs by means of the so-called “characteristic equation”. I’ll go through fairly quickly since this material is well-explained elsewhere on the internet (like Wikipedia). Let’s guess that our recurrence (6) has a solution of the form ![]() ; we seek to find all complex numbers

; we seek to find all complex numbers ![]() for which this is a bonafide solution. Plugging this solution into the formula (6) for

for which this is a bonafide solution. Plugging this solution into the formula (6) for ![]() gives

gives

(9) ![]()

This is the so-called characteristic equation for the recurrence (6). As a single-variable polynomial equation of degree six, it has six complex solutions ![]() . These numbers

. These numbers ![]() are precisely those numbers which appear in the sequence formula (2) and the Vandermonde decomposition (5).

are precisely those numbers which appear in the sequence formula (2) and the Vandermonde decomposition (5).

Finally, we need to compute the coefficients ![]() . But this is easy. Observe that the formula (2) provides the following system of linear equations for

. But this is easy. Observe that the formula (2) provides the following system of linear equations for ![]() :

:

(10) ![Rendered by QuickLaTeX.com \[\begin{bmatrix}f_0 \\ f_1 \\ \vdots \\ f_{n-1}\end{bmatrix} = \begin{bmatrix}1 & 1 & \cdots & 1 \\ z_0 & z_1 & \cdots & z_5 \\ \vdots & \vdots & \ddots & \vdots \\ z_0^{n-1} & z_1^{n-1} & \cdots & z_5^{n-1}\end{bmatrix} \begin{bmatrix}d_0 \\ d_1 \\ \vdots \\ d_{n-1}.\end{bmatrix}. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-4b18fe5a64350989e4534f4b08ef4c23_l3.png)

Again, this system of equations will have a unique solution if the measurements ![]() are uncorrupted by errors (and can be solved in the least squares sense if corrupted). This gives

are uncorrupted by errors (and can be solved in the least squares sense if corrupted). This gives ![]() , completing our goal of computing the parameters in the formula (2) or, equivalently, finding the Vandermonde decomposition (5).

, completing our goal of computing the parameters in the formula (2) or, equivalently, finding the Vandermonde decomposition (5).

We have accomplished our goal of computing the Vandermonde decomposition. The approach by which we did so is known as Prony’s method, as mentioned in the introduction to this section. As suggested, this method may not always give high-accuracy results. There are two obvious culprits that jump out about why this is the case. Prony’s method requires solving for the roots of the polynomial equation (9) expressed “in the monomial basis” and solving a system of linear equations (10) with a (transposed) Vandermonde matrix. Both of these problems can be notoriously ill-conditioned and thus challenging to solve accurately and may require the measurements ![]() to be done to very high accuracy. Notwithstanding this, Prony’s method does useful results in some cases and forms the basis for potentially more accurate methods, such as those involving generalized eigenvalue problems.

to be done to very high accuracy. Notwithstanding this, Prony’s method does useful results in some cases and forms the basis for potentially more accurate methods, such as those involving generalized eigenvalue problems.

Separating Signals: Extending the Vandermonde Decomposition to Tensors

In our discussion of the frequency identification problem, the Vandermonde decomposition (5) has effectively been an equivalent way of showing the samples ![]() are a combination of exponentials

are a combination of exponentials ![]() . So far, the benefits of the matrix factorization perspective have yet to really reveal themselves.

. So far, the benefits of the matrix factorization perspective have yet to really reveal themselves.

So what are the benefits of the Vandermonde decompostions? A couple of nice observations related to the Vandermonde decomposition and the “Hankelization” of the signals ![]() have already been lurking in the background. For instance, the rank of the Hankel matrix

have already been lurking in the background. For instance, the rank of the Hankel matrix ![]() is the number of frequency components

is the number of frequency components ![]() needed to describe the samples and the representation of the samples as a mixture of exponentials is uniquely determined only if the matrix

needed to describe the samples and the representation of the samples as a mixture of exponentials is uniquely determined only if the matrix ![]() does not have full rank; I have a little more to say about this at the very end. There are also benefits to certain computational problems; one can use Vandermonde decompositions to compute super high accuracy singular value decompositions of Hankel matrices.

does not have full rank; I have a little more to say about this at the very end. There are also benefits to certain computational problems; one can use Vandermonde decompositions to compute super high accuracy singular value decompositions of Hankel matrices.

The power of the Vandermonde decomposition really starts to shine when we go beyond the basic frequency finding problem we discussed by introducing more signals. Suppose now there are three short recordings ![]() ,

, ![]() , and

, and ![]() . (Here, the superscript denotes an index rather than differentiation.) Each signal is a weighted mixture of three sources

. (Here, the superscript denotes an index rather than differentiation.) Each signal is a weighted mixture of three sources ![]() ,

, ![]() , and

, and ![]() , each of which plays a musical chord of three notes (thus representable as a sum of cosines as in (1)). One can think of the sources of being produced three different musical instruments at different places in a room and the recordings

, each of which plays a musical chord of three notes (thus representable as a sum of cosines as in (1)). One can think of the sources of being produced three different musical instruments at different places in a room and the recordings ![]() ,

, ![]() , and

, and ![]() being taken from different microphones in the room.6This scenario of instruments and microphones ignores the finite propagation speed of sound, which also would introduce time delays in the sources in the recorded signals. We effectively treat the speed of sound as being instantaneous. Our goal is now not just to identify the musical notes in the recordings but also to identify how to assign those notes to reconstruct the source signals

being taken from different microphones in the room.6This scenario of instruments and microphones ignores the finite propagation speed of sound, which also would introduce time delays in the sources in the recorded signals. We effectively treat the speed of sound as being instantaneous. Our goal is now not just to identify the musical notes in the recordings but also to identify how to assign those notes to reconstruct the source signals ![]() ,

, ![]() , and

, and ![]() .

.

Taking inspiration from earlier, we record samples ![]() for each recording

for each recording ![]() and form each collection of samples into a Hankel matrix

and form each collection of samples into a Hankel matrix

![Rendered by QuickLaTeX.com \[H^{(\ell)} = \begin{bmatrix} f_0^{(\ell)} & f_1^{(\ell)} & f_2^{(\ell)} & \cdots & f_{(n-1)/2}^{(\ell)} \\f_1^{(\ell)} & f_2^{(\ell)} & f_3^{(\ell)} & \cdots & f_{(n-1)/2+1}^{(\ell)} \\f_2^{(\ell)} & f_3^{(\ell)} & f_4^{(\ell)} & \cdots & f_{(n-1)/2+2}^{(\ell)} \\\vdots & \vdots & \vdots & \ddots & \vdots \\f_{(n-1)/2}^{(\ell)} & f_{(n-1)/2+1}^{(\ell)} & f_{(n-1)/2+2}^{(\ell)} & \cdots & f_{n-1}^{(\ell)} \end{bmatrix}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-426cddcdbfa1b1bae2c3aaccd49f9974_l3.png)

Here comes the crazy part: Stack the Hankelized recordings ![]() ,

, ![]() , and

, and ![]() as slices of a tensor

as slices of a tensor ![]() . A tensor, in this context, just means a multidimensional array of numbers. Just as a vector is a one-dimensional array and a matrix is a two-dimensional array, a tensor could have any number of dimensions. In our case, we need just three. If we use a MATLAB-esque indexing notation,

. A tensor, in this context, just means a multidimensional array of numbers. Just as a vector is a one-dimensional array and a matrix is a two-dimensional array, a tensor could have any number of dimensions. In our case, we need just three. If we use a MATLAB-esque indexing notation, ![]() is a

is a ![]() array given by

array given by

![]()

The remarkable thing is that the source signals can be determined (under appropriate conditions) by computing a special kind of Vandermonde decomposition of the tensor ![]() ! (Specifically, the required decomposition is a Vandermonde-structured

! (Specifically, the required decomposition is a Vandermonde-structured ![]() -block term decomposition of the tensor

-block term decomposition of the tensor ![]() .) Even more cool, this decomposition can be computed using general-purpose software like Tensorlab.

.) Even more cool, this decomposition can be computed using general-purpose software like Tensorlab.

If this sounds interesting, I would encourage you to check out my recently published paper ![]() -decompositions, sparse component analysis, and the blind separation of sums of exponentials, joint work with Nithin Govindajaran and Lieven De Lathauwer and recently published in the SIAM Journal on Matrix Analysis and Applications. In the paper, we explain what this

-decompositions, sparse component analysis, and the blind separation of sums of exponentials, joint work with Nithin Govindajaran and Lieven De Lathauwer and recently published in the SIAM Journal on Matrix Analysis and Applications. In the paper, we explain what this ![]() -decomposition is and how applying it to

-decomposition is and how applying it to ![]() can be used to separate mixtures of exponentials signals from the resulting Vandermonde structure, an idea originating in the work of De Lathauewer. A very important question for these signal separation problems is that of uniqueness. Given the three sampled recordings (comprising the tensor

can be used to separate mixtures of exponentials signals from the resulting Vandermonde structure, an idea originating in the work of De Lathauewer. A very important question for these signal separation problems is that of uniqueness. Given the three sampled recordings (comprising the tensor ![]() ), is there just one way of unscrambling the mixtures into different sources or multiple? If there are multiple, then we might have possibly computed the wrong one. If there is just a single unscrambling, though, then we’ve done our job and unmixed the scrambled signals. The uniqueness of these tensor decompositions is fairly complicated math, and we survey existing results and prove new ones in this paper.7One of our main technical contributions is a new notion of uniqueness of

), is there just one way of unscrambling the mixtures into different sources or multiple? If there are multiple, then we might have possibly computed the wrong one. If there is just a single unscrambling, though, then we’ve done our job and unmixed the scrambled signals. The uniqueness of these tensor decompositions is fairly complicated math, and we survey existing results and prove new ones in this paper.7One of our main technical contributions is a new notion of uniqueness of ![]() -decompositions which we believe is nicely adapted to the signal separation context. Specfically, we prove mathematized versions of the statement “if the source signals are sufficiently different from each others and the measurements of sufficiently high quality, then the signals can uniquely be separated”.

-decompositions which we believe is nicely adapted to the signal separation context. Specfically, we prove mathematized versions of the statement “if the source signals are sufficiently different from each others and the measurements of sufficiently high quality, then the signals can uniquely be separated”.

Conclusions, Loose Ends, and Extensions

The central idea that we’ve been discussing is how it can be useful to convert between a sequence of observations ![]() and a special matricization of this sequence into a Hankel matrix (either square, as in (3), or rectangular, as in (7)). By manipulating the Hankel matrix, say, by computing its Vandermonde decomposition (5), we learn something about the original signal, namely a representation of the form (2).

and a special matricization of this sequence into a Hankel matrix (either square, as in (3), or rectangular, as in (7)). By manipulating the Hankel matrix, say, by computing its Vandermonde decomposition (5), we learn something about the original signal, namely a representation of the form (2).

This is a powerful idea which appears implicitly or explicitly throughout various subfields of mathematics, engineering, and computation. As with many other useful ideas, this paradigm admits many natural generalizations and extensions. We saw one already in this post, where we extended the Vandermonde decomposition to the realm of tensors to solve signal separation problems. To end this post, I’ll place a few breadcrumbs at the beginning of a few of the trails of these generalizations for any curious to learn more, wrapping up a few loose ends on the way.

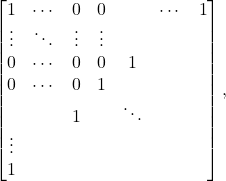

This matrix has rank-two but no rank-two confluent Vandermonde decomposition. The issue is that when extended to an infinite Hankel matrix

This matrix has rank-two but no rank-two confluent Vandermonde decomposition. The issue is that when extended to an infinite Hankel matrix  this (infinite!) matrix has a rank exceeding the size of the original Hankel matrix

this (infinite!) matrix has a rank exceeding the size of the original Hankel matrix ![Rendered by QuickLaTeX.com \[T = \begin{bmatrix} f_{(n-1)/2} & f_{(n-1)/2+1} & f_{(n-1)/2+2} & \cdots & f_{n-1} \\\\f_{(n-1)/2-1} & f_{(n-1)/2} & f_{(n-1)/2+1} & \cdots & f_{n-2} \\\\f_{(n-1)/2-2} & f_{(n-1)/2-1} & f_{(n-1)/2} & \cdots & f_{n-3} \\\\\vdots & \vdots & \vdots & \ddots & \vdots \\\\ f_0 & f_1 & f_2 & \cdots & f_{(n-1)/2} \end{bmatrix}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-d576130eb33a8d98958a967ae78ae988_l3.png)