Given the diversity of applications of mathematics, the field of applied mathematics lacks a universally accepted set of core concepts which most experts would agree all self-proclaimed applied mathematicians should know. Further, much mathematical writing is very careful, and many important ideas can be obscured by precisely worded theorems or buried several steps into a long proof.

In this series of blog posts, I hope to share my personal experience with some techniques in applied mathematics which I’ve seen pop up many times. My goal is to isolate a single particularly interesting idea and provide a simple explanation of how it works and why it can be useful. In doing this, I hope to collect my own thoughts on these topics and write an introduction to these ideas of the sort I wish I had when I was first learning this material.

Given my fondness for linear algebra, I felt an appropriate first topic for this series would be the Schur Complement. Given matrices $A$, $B$, $C$, and $D$ of sizes $n\times n$, $n\times m$, $m\times n$, and $m\times m$ with $A$ invertible, the Schur complement is defined to be the matrix $D - CA^{-1}B$.

The Schur complement naturally arises in block Gaussian elimination. In vanilla Gaussian elimination, one begins by using the $(1,1)$-entry of a matrix to “zero out” its column. Block Gaussian elimination extends this idea by using the $n\times n$ submatrix occupying the top-left portion of a matrix to “zero out” all of the first $n$ columns together. Formally, given the matrix $M = \begin{bmatrix} A & B \\ C & D \end{bmatrix}$, one can check by carrying out the multiplication that the following factorization holds:

(1) $$M = \begin{bmatrix} A & B \\ C & D \end{bmatrix} = \begin{bmatrix} I_n & 0_{n\times m} \\ CA^{-1} & I_m \end{bmatrix} \begin{bmatrix} A & B \\ 0_{m\times n} & D - CA^{-1}B \end{bmatrix}.$$

Here, we let $I_j$ denote an identity matrix of size $j\times j$ and $0_{j\times k}$ the $j\times k$ zero matrix. We use the notation of block (or partitioned) matrices where, in this case, an $(n+m)\times(n+m)$ matrix is written out as a $2\times 2$ “block” matrix whose entries themselves are matrices of the appropriate size, so that all matrices occurring in one block row (or column) have the same number of rows (or columns). Two block matrices which are blocked in a compatible way can be multiplied just like two regular matrices can be multiplied, taking care to respect the noncommutativity of matrix multiplication.
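As a quick sanity check, the factorization in Eq. (1) can be tested numerically. The following is a minimal sketch using NumPy; the sizes, the random seed, and the diagonal shift that keeps $A$ well conditioned are arbitrary choices made for illustration, not part of the definition.

```python
import numpy as np

rng = np.random.default_rng(0)
n, m = 4, 3

# Random blocks; A is shifted by a multiple of the identity so that it is
# comfortably invertible in this example (an illustrative choice).
A = rng.standard_normal((n, n)) + 2 * n * np.eye(n)
B = rng.standard_normal((n, m))
C = rng.standard_normal((m, n))
D = rng.standard_normal((m, m))

M = np.block([[A, B], [C, D]])

# C A^{-1}, computed by solving with A^T instead of forming A^{-1} explicitly.
CAinv = np.linalg.solve(A.T, C.T).T
S = D - CAinv @ B  # the Schur complement D - C A^{-1} B

# The two factors on the right-hand side of Eq. (1).
L = np.block([[np.eye(n), np.zeros((n, m))], [CAinv, np.eye(m)]])
U = np.block([[A, B], [np.zeros((m, n)), S]])

factorization_error = np.linalg.norm(M - L @ U)
print(factorization_error)  # should be on the order of rounding error
```

Note that `np.block` assembles the matrix in exactly the block-row, block-column layout used in the text, which makes it a convenient way to experiment with partitioned matrices.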

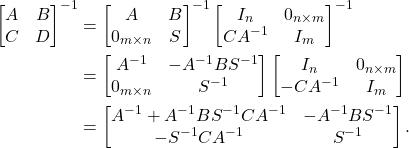

The Schur complement also arises naturally in the expression for the inverse of $M$. One can verify that for a block triangular matrix $\begin{bmatrix} X & Y \\ 0 & Z \end{bmatrix}$ with $X$ and $Z$ invertible, we have the inverse formula

(2) $$\begin{bmatrix} X & Y \\ 0 & Z \end{bmatrix}^{-1} = \begin{bmatrix} X^{-1} & -X^{-1}YZ^{-1} \\ 0 & Z^{-1} \end{bmatrix}.$$

(This can be verified by multiplying the block triangular matrix by the proposed formula for its inverse and verifying that one obtains the identity matrix.) A similar formula holds for block lower triangular matrices. From here, we can deduce a formula for the inverse of $M$. Let $S = D - CA^{-1}B$ be the Schur complement. Then

(3) $$M^{-1} = \begin{bmatrix} A & B \\ 0 & S \end{bmatrix}^{-1} \begin{bmatrix} I & 0 \\ CA^{-1} & I \end{bmatrix}^{-1} = \begin{bmatrix} A^{-1} & -A^{-1}BS^{-1} \\ 0 & S^{-1} \end{bmatrix} \begin{bmatrix} I & 0 \\ -CA^{-1} & I \end{bmatrix} = \begin{bmatrix} A^{-1} + A^{-1}BS^{-1}CA^{-1} & -A^{-1}BS^{-1} \\ -S^{-1}CA^{-1} & S^{-1} \end{bmatrix}.$$

This remarkable formula gives the inverse of $M$ in terms of $A^{-1}$, $S^{-1}$, $B$, and $C$. In particular, the $(2,2)$-block entry of $M^{-1}$ is simply the inverse of the Schur complement.
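The block inverse formula of Eq. (3) can also be checked numerically. This is only a sketch on random test matrices (the sizes, seed, and diagonal shifts are my own illustrative assumptions); explicit inverses are formed here purely to mirror the formula, not because that is how one should compute in practice.

```python
import numpy as np

rng = np.random.default_rng(1)
n, m = 4, 3

# Illustrative random blocks, shifted to stay well away from singularity.
A = rng.standard_normal((n, n)) + 2 * n * np.eye(n)
B = rng.standard_normal((n, m))
C = rng.standard_normal((m, n))
D = rng.standard_normal((m, m)) + 2 * m * np.eye(m)

M = np.block([[A, B], [C, D]])

Ainv = np.linalg.inv(A)
S = D - C @ Ainv @ B       # Schur complement
Sinv = np.linalg.inv(S)

# Right-hand side of Eq. (3), assembled block by block.
Minv_formula = np.block([
    [Ainv + Ainv @ B @ Sinv @ C @ Ainv, -Ainv @ B @ Sinv],
    [-Sinv @ C @ Ainv,                  Sinv],
])

Minv = np.linalg.inv(M)
formula_error = np.linalg.norm(Minv - Minv_formula)

# The (2,2) block of M^{-1} is the inverse of the Schur complement.
block_error = np.linalg.norm(Minv[n:, n:] - Sinv)
print(formula_error, block_error)
```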

Here, we have seen that if one starts with a large matrix and performs block Gaussian elimination, one ends up with a smaller matrix called the Schur complement whose inverse appears in the inverse of the original matrix. Very often, however, it benefits us to run this trick in reverse: we begin with a small matrix, which we recognize to be the Schur complement of a larger matrix. In general, dealing with a larger matrix is more difficult than a smaller one, but very often this larger matrix will have special properties which allow us to more efficiently compute the inverse of the original matrix.

One beautiful application of this idea is the Sherman-Morrison-Woodbury matrix identity. Suppose we want to find the inverse of the matrix $A - BD^{-1}C$, where $D$ is now also assumed invertible. Notice that this is the Schur complement of the matrix $\begin{bmatrix} D & C \\ B & A \end{bmatrix}$, which is the same as $M$ after reordering. (Specifically, switch the first $n$ rows with the last $m$ rows and do the same with the columns. This defines a permutation matrix $P$ such that $P^\top M P = \begin{bmatrix} D & C \\ B & A \end{bmatrix}$. Alternately, and perhaps more cleanly, one may define two Schur complements of the block matrix $M$: one by “eliminating $A$”, $D - CA^{-1}B$, and the other by “eliminating $D$”, $A - BD^{-1}C$.) Following the calculation in Eq. (3), just like the inverse of the Schur complement $D - CA^{-1}B$ appears in the $(2,2)$ entry of $M^{-1}$, the inverse of the alternate Schur complement $A - BD^{-1}C$ can be shown to appear in the $(1,1)$ entry of $M^{-1}$. Thus, comparing with Eq. (3), we deduce the Sherman-Morrison-Woodbury matrix identity:

(4) $$(A - BD^{-1}C)^{-1} = A^{-1} + A^{-1}B\,(D - CA^{-1}B)^{-1}\,CA^{-1}.$$
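A numerical spot check of the Sherman-Morrison-Woodbury identity in Eq. (4) is sketched below. The matrices are random and shifted toward the identity purely so that every inverse in the formula exists and is well conditioned; those choices, like the sizes and seed, are assumptions for the demonstration.

```python
import numpy as np

rng = np.random.default_rng(2)
n, m = 6, 2  # a large block A and a small block D

A = rng.standard_normal((n, n)) + 2 * n * np.eye(n)
B = rng.standard_normal((n, m))
C = rng.standard_normal((m, n))
D = rng.standard_normal((m, m)) + 4 * np.eye(m)

Ainv = np.linalg.inv(A)
Dinv = np.linalg.inv(D)

# Left-hand side of Eq. (4): invert the n x n matrix A - B D^{-1} C directly.
lhs = np.linalg.inv(A - B @ Dinv @ C)

# Right-hand side: besides A^{-1}, only the small m x m matrix
# D - C A^{-1} B needs to be inverted.
rhs = Ainv + Ainv @ B @ np.linalg.inv(D - C @ Ainv @ B) @ C @ Ainv

smw_error = np.linalg.norm(lhs - rhs)
print(smw_error)
```

The practical appeal is visible in the shapes: once $A^{-1}$ (or a factorization of $A$) is available, the only new inverse is of size $m\times m$.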

To see how this formula can be useful in practice, suppose that we have a fast way of solving the system of linear equations $Ax = b$. Perhaps $A$ is a simple matrix like a diagonal matrix or we have already pre-computed an $LU$ factorization for $A$. Consider the problem of solving the rank-one updated problem $(A + uv^\top)x = b$. Using the Sherman-Morrison-Woodbury identity with $B = u$, $C = v^\top$, and $D = -1$, we have that

(5) $$x = (A + uv^\top)^{-1}b = A^{-1}b - A^{-1}u\,(1 + v^\top A^{-1}u)^{-1}\,v^\top A^{-1}b.$$

Careful observation of this formula shows how we can compute $x$ (solving $(A + uv^\top)x = b$) by only solving two linear systems: $Ax_1 = b$ for $x_1$ and $Ax_2 = u$ for $x_2$. (Further economies are possible if one has already computed $x_1$, which may be the case in many applications.)
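The two-solve recipe can be written as a short routine. In this sketch, `solve_rank_one_update` and its `solve_A` argument are hypothetical names of my own: `solve_A` stands in for whatever fast solver is available for $A$ (here $A$ is taken to be diagonal, so a solve is an elementwise division). The vectors are scaled so that $1 + v^\top A^{-1}u$ stays away from zero in the example.

```python
import numpy as np

def solve_rank_one_update(solve_A, u, v, b):
    """Solve (A + u v^T) x = b, given solve_A(rhs) that returns A^{-1} rhs."""
    x1 = solve_A(b)  # first solve:  A x1 = b
    x2 = solve_A(u)  # second solve: A x2 = u
    # Eq. (5): x = x1 - x2 * (v^T x1) / (1 + v^T x2)
    return x1 - x2 * (v @ x1) / (1.0 + v @ x2)

rng = np.random.default_rng(3)
n = 5
d = rng.uniform(1.0, 2.0, size=n)   # A = diag(d) in this example
u = 0.3 * rng.standard_normal(n)
v = 0.3 * rng.standard_normal(n)
b = rng.standard_normal(n)

# For a diagonal A, "solving with A" is just elementwise division by d.
x = solve_rank_one_update(lambda rhs: rhs / d, u, v, b)

# Check against the explicitly formed updated matrix.
residual = np.linalg.norm((np.diag(d) + np.outer(u, v)) @ x - b)
print(residual)
```

Passing the solver as a function keeps the routine agnostic about how $A$ is handled, so the same code would work with a stored factorization in place of the division.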

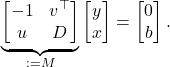

Here’s another variant of the same idea. Suppose we want to solve the linear system of equations $(D + uv^\top)x = b$ where $D$ is a diagonal matrix. Then we can immediately write down the lifted system of linear equations

(6) $$\begin{bmatrix} D & u \\ v^\top & -1 \end{bmatrix} \begin{bmatrix} x \\ y \end{bmatrix} = \begin{bmatrix} b \\ 0 \end{bmatrix}.$$

One can easily see that $D + uv^\top$ is the Schur complement of the matrix $\begin{bmatrix} D & u \\ v^\top & -1 \end{bmatrix}$ (with respect to the $(2,2)$ block). This system of linear equations is sparse in the sense that most of its entries are zero and can be efficiently solved by sparse Gaussian elimination, for which there exists high quality software. Easy generalizations of this idea can be used to effectively solve many “sparse + low-rank” problems.
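To make the lifted system of Eq. (6) concrete, here is a small sketch that builds it and checks that its solution solves the original diagonal-plus-rank-one problem. For brevity it uses a dense NumPy solve on a tiny example, which is an assumption of this demonstration; for a genuinely large problem one would store the lifted matrix in a sparse format and hand it to a sparse direct solver (for instance `scipy.sparse.linalg.spsolve`).

```python
import numpy as np

rng = np.random.default_rng(4)
n = 5
d = rng.uniform(1.0, 2.0, size=n)   # diagonal of D
u = 0.3 * rng.standard_normal(n)
v = 0.3 * rng.standard_normal(n)
b = rng.standard_normal(n)

# Lifted (n+1) x (n+1) system of Eq. (6): [[D, u], [v^T, -1]] [x; y] = [b; 0].
# Only the diagonal, the last column, and the last row are nonzero.
K = np.block([
    [np.diag(d),  u[:, None]],
    [v[None, :], -np.ones((1, 1))],
])
rhs = np.concatenate([b, [0.0]])

sol = np.linalg.solve(K, rhs)
x, y = sol[:n], sol[n]

# x solves the original problem, and the auxiliary unknown is y = v^T x.
lifted_residual = np.linalg.norm((np.diag(d) + np.outer(u, v)) @ x - b)
print(lifted_residual, abs(y - v @ x))
```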

Another example of the power of the Schur complement is in least-squares problems. Consider the problem of minimizing $\|Ax - b\|$, where $A$ is a matrix with full column rank and $\|\cdot\|$ is the Euclidean norm of a vector, $\|z\|^2 = z^\top z$. It is well known that the solution $x$ satisfies the normal equations $A^\top A x = A^\top b$. However, if the matrix $A$ is even moderately ill-conditioned, the matrix $A^\top A$ will be much more ill-conditioned (the condition number will be squared), leading to a loss of accuracy. It is for this reason that it is preferable to solve the least-squares problem with $QR$ factorization. However, if $QR$ factorization isn’t available, we can use the Schur complement trick instead. Notice that $A^\top A$ is the Schur complement of the matrix $\begin{bmatrix} -I & A \\ A^\top & 0 \end{bmatrix}$. Thus, we can solve the normal equations by instead solving the (potentially, see footnote) much better-conditioned system

(7) $$\begin{bmatrix} -I & A \\ A^\top & 0 \end{bmatrix} \begin{bmatrix} r \\ x \end{bmatrix} = \begin{bmatrix} b \\ 0 \end{bmatrix}.$$

Footnote: More precisely, one should scale the identity in the $(1,1)$ block of this system to be on the order of the size of the entries in $A$. The conditioning is sensitive to the scaling. If one selects a scale $s$ to be proportional to the smallest singular value $\sigma_{\min}$ of $A$ and constructs $M_s = \begin{bmatrix} -sI & A \\ A^\top & 0 \end{bmatrix}$, then one can show that the two-norm condition number of $M_s$ is no more than twice that of $A$. If one picks $s$ to be roughly equal to the largest singular value $\sigma_{\max}$, then the two-norm condition number of $M_s$ is roughly the square of the condition number of $A$. The accuracy of this approach may be less sensitive to the scaling parameter than this condition number analysis suggests.

In addition to (often) being much better-conditioned, this system is also highly interpretable. Multiplying out the first block row gives the equation $-r + Ax = b$, which simplifies to $r = Ax - b$. The unknown $r$ is nothing but the least-squares residual. The second block row gives $A^\top r = 0$, which encodes the condition that the residual is orthogonal to the range of the matrix $A$. Thus, by lifting the normal equations to a large system of equations by means of the Schur complement trick, one derives an interpretable way of solving the least-squares problem by solving a linear system of equations, no $QR$ factorization or ill-conditioned normal equations needed.
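The augmented system of Eq. (7) is easy to try out. The sketch below solves it for a small random least-squares problem and compares against NumPy's built-in `np.linalg.lstsq`. It uses the unscaled identity block exactly as written in Eq. (7); the problem sizes and seed are arbitrary, and the scaling discussed in the footnote is deliberately left out to keep the example short.

```python
import numpy as np

rng = np.random.default_rng(5)
n_rows, n_cols = 8, 3   # tall matrix, full column rank with probability one
A = rng.standard_normal((n_rows, n_cols))
b = rng.standard_normal(n_rows)

# Augmented system of Eq. (7): [[-I, A], [A^T, 0]] [r; x] = [b; 0].
K = np.block([
    [-np.eye(n_rows), A],
    [A.T,             np.zeros((n_cols, n_cols))],
])
rhs = np.concatenate([b, np.zeros(n_cols)])

sol = np.linalg.solve(K, rhs)
r, x = sol[:n_rows], sol[n_rows:]

# Reference solution from NumPy's least-squares routine.
x_ref = np.linalg.lstsq(A, b, rcond=None)[0]

print(np.linalg.norm(x - x_ref))        # agreement with the reference solution
print(np.linalg.norm(r - (A @ x - b)))  # r is the least-squares residual
print(np.linalg.norm(A.T @ r))          # residual is orthogonal to range(A)
```

The three printed quantities correspond to the interpretation above: the solution matches the least-squares solution, the first block row recovers the residual, and the second block row enforces orthogonality.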

The Schur complement trick continues to have use in areas of more contemporary interest. For example, the Schur complement trick plays a central role in the theory of sequentially semiseparable matrices which is a precursor to many recent developments in rank-structured linear solvers. I have used the Schur complement trick myself several times in my work on graph-induced rank-structures.

Upshot: The Schur complement appears naturally when one does (block) Gaussian elimination on a matrix. One can also run this process in reverse: if one recognizes a matrix expression (involving a product of matrices potentially added to another matrix) as being a Schur complement of a larger matrix, one can often get considerable dividends by writing this larger matrix down. Examples include a proof of the Sherman-Morrison-Woodbury matrix identity (see Eqs. (3-4)), techniques for solving a low-rank update of a linear system of equations (see Eqs. (5-6)), and a stable way of solving least-squares problems without the need to use $QR$ factorization (see Eq. (7)).

Additional resource: My classmate Chris Yeh has a great introduction to the Schur complements focusing more on positive semidefinite and rank-deficient matrices.

Edits: This blog post was edited to clarify the conditioning of the augmented linear system Eq. (7) and to include a reference to Chris’ post on Schur complements.

I am enjoying these blog posts a lot! Is there any chance there is a small typo in D - BA^{-1}C -> D - CA^{-1}B?

Ack! You’re totally right. Hopefully fixed now. Many thanks!

Hi,

Do you have a reference for the statement that the matrix in equation 7 is better conditioned? Ideally I want to find something with a detailed proof discussing also this scaling of the identity matrix.

Thanks for bringing this to my attention. The post has now been corrected to fix some imprecise and incorrect language. See p. 260 of Åke Björck’s Iterative refinement of linear least squares solutions I for a derivation of the conditioning and how it depends on the scaling of the identity matrix (https://idp.springer.com/authorize/casa?redirect_uri=https://link.springer.com/article/10.1007/BF01939321&casa_token=-Um4RmwM3SMAAAAA:7JYsXD0VD-idOxuodYtb44CGRhmm-MSffq3tAjUFtVGBbltnYFKuuAshjrhjv-tyZFQ5kp9KtAj93Nf5u1U)

Stumbled across this as I prepare for my qualifying exams and this was such a great explanation! Thank you!