Welcome to a new series for this blog, Low-Rank Approximation Toolbox. As I discussed in a previous post, many matrices we encounter in applications are well-approximated by a matrix with a small rank. Efficiently computing low-rank approximations has been a major area of research, with applications in everything from classical problems in computational physics and signal processing to trendy topics like data science. In this series, I want to explore some broadly useful algorithms and theoretical techniques in the field of low-rank approximation.

I want to begin this series by talking about one of the fundamental types of low-rank approximation, the Nyström approximation of a ![]() (real symmetric or complex Hermitian) positive semidefinite (psd) matrix

(real symmetric or complex Hermitian) positive semidefinite (psd) matrix ![]() . Given an arbitrary

. Given an arbitrary ![]() “test matrix”

“test matrix” ![]() , the Nyström approximation is defined to be

, the Nyström approximation is defined to be

(1) ![]()

This formula is sensible whenever ![]() is invertible; if

is invertible; if ![]() is not invertible, then the inverse

is not invertible, then the inverse ![]() should be replaced by the Moore–Penrose pseudoinverse

should be replaced by the Moore–Penrose pseudoinverse ![]() . For simplicity, I will assume that

. For simplicity, I will assume that ![]() is invertible in this post, though everything we discuss will continue to work if this assumption is dropped. I use

is invertible in this post, though everything we discuss will continue to work if this assumption is dropped. I use ![]() to denote the conjugate transpose of a matrix, which agrees with the ordinary transpose

to denote the conjugate transpose of a matrix, which agrees with the ordinary transpose ![]() for real matrices. I will use the word self-adjoint to refer to a matrix which satisfies

for real matrices. I will use the word self-adjoint to refer to a matrix which satisfies ![]() .

.

The Nyström approximation (1) answers the question

What is the “best” rank-

approximation to the psd matrx

provided only with the matrix–matrix product

, where

is a known

matrix (

)?

Indeed, if we let ![]() , we observe that the Nyström approximation can be written entirely using

, we observe that the Nyström approximation can be written entirely using ![]() and

and ![]() :

:

![]()

This is central advantage of the Nyström approximation: to compute it, the only access to the matrix ![]() I need is the ability to multiply the matrices

I need is the ability to multiply the matrices ![]() and

and ![]() . In particular, I only need a single pass over the entries of

. In particular, I only need a single pass over the entries of ![]() to compute the Nyström approximation. This allows the Nyström approximation to be used in settings when other low-rank approximations wouldn’t work, such as when

to compute the Nyström approximation. This allows the Nyström approximation to be used in settings when other low-rank approximations wouldn’t work, such as when ![]() is streamed to me as a sum of matrices that must be processed as they arrive and then discarded.

is streamed to me as a sum of matrices that must be processed as they arrive and then discarded.

Choosing the Test Matrix

Every choice of ![]() test matrix

test matrix ![]() defines a rank-

defines a rank-![]() Nyström approximation1Actually,

Nyström approximation1Actually, ![]() is only rank at most

is only rank at most ![]() . For this post, we will use rank-

. For this post, we will use rank-![]() to mean “rank at most

to mean “rank at most ![]() “.

“. ![]() by (1). Unfortunately, the Nyström approximation won’t be a good low-rank approximation for every choice of

by (1). Unfortunately, the Nyström approximation won’t be a good low-rank approximation for every choice of ![]() . For an example of what can go wrong, if we pick

. For an example of what can go wrong, if we pick ![]() to have columns selected from the eigenvectors of

to have columns selected from the eigenvectors of ![]() with small eigenvalues, the approximation

with small eigenvalues, the approximation ![]() will be quite poor.

will be quite poor.

The very best choice of ![]() would be the

would be the ![]() eigenvectors associated with the largest eigenvalues. Unfortunately, computing the eigenvectors to high accuracy is computationally costly. Fortunately, we can get decent low-rank approximations out of much simpler

eigenvectors associated with the largest eigenvalues. Unfortunately, computing the eigenvectors to high accuracy is computationally costly. Fortunately, we can get decent low-rank approximations out of much simpler ![]() ‘s:

‘s:

- Random: Perhaps surprisingly, we get a fairly low-rank approximation out of just choosing

to be a random matrix, say, populated with statistically independent standard normal random entries. Intuitively, a random matrix is likely to have columns with meaningful overlap with the large-eigenvalue eigenvectors of

to be a random matrix, say, populated with statistically independent standard normal random entries. Intuitively, a random matrix is likely to have columns with meaningful overlap with the large-eigenvalue eigenvectors of  (and indeed with any

(and indeed with any  fixed orthonormal vectors). One can also pick more exotic kinds of random matrices, which can have computational benefits.

fixed orthonormal vectors). One can also pick more exotic kinds of random matrices, which can have computational benefits. - Random then improve: The more similar the test matrix

is to the large-eigenvalue eigenvectors of

is to the large-eigenvalue eigenvectors of  , the better the low-rank approximation will be. Therefore, it makes sense to use the power method (usually called subspace iteration in this context) to improve a random initial test matrix

, the better the low-rank approximation will be. Therefore, it makes sense to use the power method (usually called subspace iteration in this context) to improve a random initial test matrix  to be closer to the eigenvectors of

to be closer to the eigenvectors of  .2Even better than subspace iteration is block Krylov iteration. See section 11.6 of the following survey for details.

.2Even better than subspace iteration is block Krylov iteration. See section 11.6 of the following survey for details. - Column selection: If

consists of columns

consists of columns  of the identity matrix, then

of the identity matrix, then  just consists of columns

just consists of columns  of

of  : In MATLAB notation,

: In MATLAB notation,

This is highly appealing as it allows us to approximate the matrix![Rendered by QuickLaTeX.com \[A(:,\{i_1,\ldots,i_k\}) = A\Omega \quad \text{for}\quad \Omega = I(:,\{i_1,i_2,\ldots,i_k\}).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-49181e8e724ecddcd4646eb90f3bc35e_l3.png)

by only reading a small fraction of its entries (provided

by only reading a small fraction of its entries (provided  )! Producing a good low-rank approximation requires selecting the right column indices

)! Producing a good low-rank approximation requires selecting the right column indices  (usually under the constraint of reading a small number of entries from

(usually under the constraint of reading a small number of entries from  ). In my research with Yifan Chen, Joel A. Tropp, and Robert J. Webber, I’ve argued that the most well-rounded algorithm for this task is a randomly pivoted partial Cholesky decomposition.

). In my research with Yifan Chen, Joel A. Tropp, and Robert J. Webber, I’ve argued that the most well-rounded algorithm for this task is a randomly pivoted partial Cholesky decomposition.

The Projection Formula

Now that we’ve discussed the choice of test matrix, we shall explore the quality of the Nyström approximation as measured by the size of the residual ![]() . As a first step, we shall show that the residual is psd. This means that

. As a first step, we shall show that the residual is psd. This means that ![]() is an underapproximation to

is an underapproximation to ![]() .

.

The positive semidefiniteness of the residual follows from the following projection formula for the Nyström approximation:

![]()

Here, ![]() denotes the the orthogonal projection onto the column space of the matrix

denotes the the orthogonal projection onto the column space of the matrix ![]() . To deduce the projection formula, we break down

. To deduce the projection formula, we break down ![]() as

as ![]() in (1):

in (1):

![]()

The fact that the paranthesized quantity is ![]() can be verified in a variety of ways, such as by QR factorization.3Let

can be verified in a variety of ways, such as by QR factorization.3Let ![]() , where

, where ![]() has orthonormal columns and

has orthonormal columns and ![]() is square and upper triangular. The orthogonal projection is

is square and upper triangular. The orthogonal projection is ![]() . The parenthesized expression is

. The parenthesized expression is ![]() .

.

With the projection formula in hand, we easily obtain the following expression for the residual:

![]()

To show that this residual is psd, we make use of the conjugation rule.

Conjugation rule: For a matrix

and a self-adjoint matrix

, if

is psd then

is psd. If

is invertible, then the converse holds: if

is psd, then

is psd.

The matrix ![]() is an orthogonal projection and therefore psd. Thus, by the conjugation rule, the residual of the is Nyström approximation is psd:

is an orthogonal projection and therefore psd. Thus, by the conjugation rule, the residual of the is Nyström approximation is psd:

![]()

Optimality of the Nyström Approximation

There’s a question we’ve been putting off that can’t be deferred any longer:

Is the Nyström approximation actually a good low-rank approximation?

As we discussed earlier, the answer to this question depends on the test matrix ![]() . Different choices for

. Different choices for ![]() give different approximation errors. See the following papers for Nyström approximation error bounds with different choices of

give different approximation errors. See the following papers for Nyström approximation error bounds with different choices of ![]() . While the Nyström approximation can be better or worse depending on the choice of

. While the Nyström approximation can be better or worse depending on the choice of ![]() , what is true about Nyström approximation for every choice of

, what is true about Nyström approximation for every choice of ![]() is that:

is that:

The Nyström approximation ![]() is the best possible rank-

is the best possible rank-![]() approximation to

approximation to ![]() given the information

given the information ![]() .

.

In precise terms, I mean the following:

Theorem: Out of all self-adjoint matrices

spanned by the columns of

with a psd residual

, the Nyström approximation has the smallest error as measured by either the spectral or Frobenius norm (or indeed any unitarily invariant norm, see below).

Let’s break this statement down a bit. This result states that the Nyström approximation is the best approximation ![]() to

to ![]() under three conditions:

under three conditions:

is self-adjoint.

is self-adjoint. is spanned by the columns of

is spanned by the columns of  .

.

I find these first two requirements to be natural. Since ![]() is self-adjoint, it makes sense to require our approximation

is self-adjoint, it makes sense to require our approximation ![]() to be as well. The stipulation that

to be as well. The stipulation that ![]() is spanned by the columns

is spanned by the columns ![]() seems like a very natural requirement given we want to think of approximations which only use the information

seems like a very natural requirement given we want to think of approximations which only use the information ![]() . Additionally, requirement 2 ensures that

. Additionally, requirement 2 ensures that ![]() has rank at most

has rank at most ![]() , so we are really only considering low-rank approximations to

, so we are really only considering low-rank approximations to ![]() .

.

The last requirement is less natural:

- The residual

is psd.

is psd.

This is not an obvious requirement to impose on our approximation. Indeed, it was a nontrivial calculation using the projection formula to show that the Nyström approximation itself satisfies this requirement! Nevertheless, this third stipulation is required to make the theorem true. The Nyström approximation (1) is the best “underapproximation” to the matrix ![]() to in the span of

to in the span of ![]() .

.

Intermezzo: Unitarily Invariant Norms and the Psd Order

To prove our theorem about the optimality of the Nyström approximation, we shall need two ideas from matrix theory: unitarily invariant norms and the psd order. We shall briefly describe each in turn.

A norm ![]() defined on the set of

defined on the set of ![]() matrices is said to be unitarily invariant if the norm of a matrix does not change upon left- or right-multiplication by a unitary matrix:

matrices is said to be unitarily invariant if the norm of a matrix does not change upon left- or right-multiplication by a unitary matrix:

![]()

Recall that a unitary matrix ![]() (called a real orthogonal matrix if

(called a real orthogonal matrix if ![]() is real-valued) is one that obeys

is real-valued) is one that obeys ![]() . Unitary matrices preserve the Euclidean lengths of vectors, which makes the class of unitarily invariant norms highly natural. Important examples include the spectral, Frobenius, and nuclear matrix norms:

. Unitary matrices preserve the Euclidean lengths of vectors, which makes the class of unitarily invariant norms highly natural. Important examples include the spectral, Frobenius, and nuclear matrix norms:

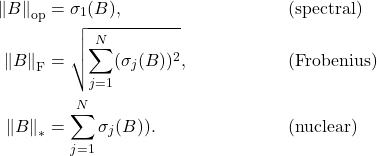

The unitarily invariant norm of a matrix ![]() depends entirely on its singular values

depends entirely on its singular values ![]() . For instance, the spectral, Frobenius, and nuclear norms take the forms

. For instance, the spectral, Frobenius, and nuclear norms take the forms

In addition to being entirely determined by the singular values, unitarily invariant norms are non-decreasing functions of the singular values: If the ![]() th singular value of

th singular value of ![]() is larger than the

is larger than the ![]() th singular value of

th singular value of ![]() for

for ![]() , then

, then ![]() for every unitarily invariant norm

for every unitarily invariant norm ![]() . For more on unitarily invariant norms, see this short and information-packed blog post from Nick Higham.

. For more on unitarily invariant norms, see this short and information-packed blog post from Nick Higham.

Our second ingredient is the psd order (also known as the Loewner order). A self-adjoint matrix ![]() is larger than a self-adjoint matrix

is larger than a self-adjoint matrix ![]() according to the psd order, written

according to the psd order, written ![]() , if the difference

, if the difference ![]() is psd. As a consequence,

is psd. As a consequence, ![]() if and only if

if and only if ![]() is psd, where

is psd, where ![]() here denotes the zero matrix of the same size as

here denotes the zero matrix of the same size as ![]() . Using the psd order, the positive semidefiniteness of the Nyström residual can be written as

. Using the psd order, the positive semidefiniteness of the Nyström residual can be written as ![]() .

.

If ![]() and

and ![]() are both psd matrices and

are both psd matrices and ![]() is larger than

is larger than ![]() in the psd order,

in the psd order, ![]() , it seems natural to expect that

, it seems natural to expect that ![]() is larger than

is larger than ![]() in norm. Indeed, this intuitive statement is true, at least when one restricts oneself to unitarily invariant norms.

in norm. Indeed, this intuitive statement is true, at least when one restricts oneself to unitarily invariant norms.

Psd order and norms. If

, then

for every unitarily invariant norm

.

This fact is a consequence of the following observations:

- If

, then the eigenvalues of

, then the eigenvalues of  are larger than

are larger than  in the sense that the

in the sense that the  th largest eigenvalue of

th largest eigenvalue of  is larger than the

is larger than the  th largest eigenvalue of

th largest eigenvalue of  .

. - The singular values of a psd matrix are its eigenvalues.

- Unitarily invariant norms are non-decreasing functions of the singular values.

Optimality of the Nyström Approximation: Proof

In this section, we’ll prove our theorem showing the Nyström approximation is the best low-rank approximation satisfying properties 1, 2, and 3. To this end, let ![]() be any matrix satisfying properties 1, 2, and 3. Because of properties 1 (self-adjointness) and 2 (spanned by columns of

be any matrix satisfying properties 1, 2, and 3. Because of properties 1 (self-adjointness) and 2 (spanned by columns of ![]() ),

), ![]() can be written in the form

can be written in the form

![]()

where ![]() is a self-adjoint matrix. To make this more similar to the projection formula, we can factor

is a self-adjoint matrix. To make this more similar to the projection formula, we can factor ![]() on both sides to obtain

on both sides to obtain

![]()

To make this more comparable to the projection formula, we can reparametrize by introducing a matrix ![]() with orthonormal columns with the same column space as

with orthonormal columns with the same column space as ![]() . Under this parametrization,

. Under this parametrization, ![]() takes the form

takes the form

![]()

The residual of this approximation is

(2) ![]()

We now make use of the of conjugation rule again. To simplify things, we make the assumption that ![]() is invertible. (As an exercise, see if you can adapt this argument to the case when this assumption doesn’t hold!) Since

is invertible. (As an exercise, see if you can adapt this argument to the case when this assumption doesn’t hold!) Since ![]() is psd (property 3), the conjugation rule tells us that

is psd (property 3), the conjugation rule tells us that

![]()

What does this observation tell us about ![]() ? We can apply the conjugation rule again to conclude

? We can apply the conjugation rule again to conclude

![]()

(Notice that ![]() since

since ![]() has orthonormal columns.)

has orthonormal columns.)

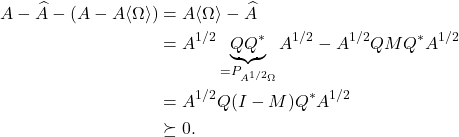

We are now in a place to show that ![]() . Indeed,

. Indeed,

The second line is the projection formula together with the observation that ![]() and the last line is the conjugation rule combined with the fact that

and the last line is the conjugation rule combined with the fact that ![]() is psd. Thus, having shown

is psd. Thus, having shown

![]()

we conclude

![]()

5 thoughts on “Low-Rank Approximation Toolbox: Nyström Approximation”