I’m excited to share that my paper Kernel quadrature with randomly pivoted Cholesky, joint with Elvira Moreno, has been accepted to NeurIPS 2023 as a spotlight.

Today, I want to share with you a little about the kernel quadrature problem. To avoid this post getting too long, I’m going to write this post assuming familiarity with the concepts of reproducing kernel Hilbert spaces and Gaussian processes.

Integration and Quadrature

Integration is one of the most widely used operations in mathematics and its applications. As such, it is a basic problem of wide interest to develop numerical methods for evaluating integrals.

In this post, we will consider a quite general integration problem. Let ![]() be a domain and let

be a domain and let ![]() be a (finite Borel) measure on

be a (finite Borel) measure on ![]() . We consider the task of evaluating

. We consider the task of evaluating

![]()

To evaluate, we will design a quadrature approximation to the integral ![]() :

:

![]()

Smoothness and Reproducing Kernel Hilbert Spaces

As is frequently the case in computational mathematics, the accuracy we can expect for this integration problem depends on the smoothness of the integrand ![]() . The more smooth

. The more smooth ![]() is, the more accurately we can expect to compute

is, the more accurately we can expect to compute ![]() for a given budget of computational effort.

for a given budget of computational effort.

In this post, will measure smoothness using the reproducing kernel Hilbert space (RKHS) formalism. Let ![]() be an RKHS with norm

be an RKHS with norm ![]() . We can interpret the norm as assigning a roughness

. We can interpret the norm as assigning a roughness ![]() to each function

to each function ![]() . If

. If ![]() is large, then

is large, then ![]() is rough; if

is rough; if ![]() is small, then

is small, then ![]() is smooth.

is smooth.

Associated to the RKHS ![]() is the titular reproducing kernel

is the titular reproducing kernel ![]() . The kernel is a bivariate function

. The kernel is a bivariate function ![]() . It is related to the RKHS inner product

. It is related to the RKHS inner product ![]() by the reproducing property

by the reproducing property

![]()

The Ideal Weights

To design a quadrature rule, we have to set the nodes ![]() and weights

and weights ![]() . Let’s first assume that the nodes

. Let’s first assume that the nodes ![]() are fixed, and talk about how to pick the weights

are fixed, and talk about how to pick the weights ![]() .

.

There’s one choice of weights ![]() that we’ll called the ideal weights. There (at least) are five equivalent ways of characterizing the ideal weights. We’ll present all of them. As an exercise, you can try and convince yourself that these characterizations are equivalent, giving rise to the same weights.

that we’ll called the ideal weights. There (at least) are five equivalent ways of characterizing the ideal weights. We’ll present all of them. As an exercise, you can try and convince yourself that these characterizations are equivalent, giving rise to the same weights.

Interpretation 1: Exactness

A standard way of designing quadrature rules is to make them exact (i.e., error-free) for some class of functions. For instance, many classical quadrature rules are exact for polynomials of degree up to ![]() .

.

For kernel quadrature, it makes sense to design the quadrature rule to be exact for the kernel function at the selected nodes. That is, we require

![]()

![Rendered by QuickLaTeX.com \[\sum_{j=1}^n k(s_i,s_j)w^\star_j = \int_\Omega k(s_i,x) g(x)\,\mathrm{d}\mu(x) \quad \text{for }i=1,2,\ldots,n.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-1a5ca36966f56dae9d994e208f9ef036_l3.png)

Interpretation 2: Interpolate and Integrate

Here’s another very classical way of designing a quadrature rule. First, interpolate the function values ![]() at the nodes, obtaining an interpolant

at the nodes, obtaining an interpolant ![]() . Then, obtain an approximation to the integral by integrating the interpolant:

. Then, obtain an approximation to the integral by integrating the interpolant:

![]()

In our context, the appropriate interpolation method is kernel interpolation.1Kernel interpolation is also called Gaussian process regression or kriging though (confusingly) these terms can also refer to slightly different methods. It is the regularization-free limit of kernel ridge regression. The kernel interpolant is defined to be the minimum-norm function

![]()

![]()

![]()

Interpretation 3: Minimizing the Worst-Case Error

Define the worst-case error of weights ![]() and nodes

and nodes ![]() to be

to be

![]()

Having defined the worst-case error, the ideal weights are precisely the weights that minimize this quantity

![]()

Interpretation 4: Minimizing the Mean-Square Error

The next two interpretations of the ideal weights will adopt a probabilistic framing. A Gaussian process is a random function ![]() such that

such that ![]() ’s values at any collection of points are (jointly) Gaussian random variables. We write

’s values at any collection of points are (jointly) Gaussian random variables. We write ![]() for a mean-zero Gaussian process with covariance function

for a mean-zero Gaussian process with covariance function ![]() :

:

![]()

Define the mean-square quadrature error of weights

![]()

Pleasantly, the mean-square error is equal ro the square of the worst-case error

![]()

![]()

Interpretation 5: Conditional Expectation

For our last interpretation, again consider a Gaussian process ![]() . The integral of this random function

. The integral of this random function ![]() is a random variable. To numerically integrate a function

is a random variable. To numerically integrate a function ![]() , compute the expectation of

, compute the expectation of ![]() conditional on

conditional on ![]() agreeing with

agreeing with ![]() at the quadrature nodes:

at the quadrature nodes:

![]()

Conclusion

We’ve just seen five sensible ways of defining the ideal weights for quadrature in a general reproducing kernel Hilbert space. Remarkably, all five lead to exactly the same choice of weights. To me, these five equivalent characterizations give me more confidence that the ideal weights really are the “right” or “natural” choice for kernel quadrature.

That said, there are other reasonable requirements that we might want to impose on the weights. For instance, if ![]() is a probability measure and

is a probability measure and ![]() , it is reasonable to add an additional constraint that the weights

, it is reasonable to add an additional constraint that the weights ![]() lie in the probability simplex

lie in the probability simplex

![Rendered by QuickLaTeX.com \[w\in\Delta\coloneqq \left\{ p\in\real^n_+ : \sum_{i=1}^n p_i = 1\right\}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-55c593b2593abc9ea8c18d4f8edbccd3_l3.png)

What About the Nodes?

We’ve spent a lot of time talking about how to pick the quadrature weights, but how should we pick the nodes ![]() ? To pick the nodes, it seems sensible to try and minimize the worst-case error

? To pick the nodes, it seems sensible to try and minimize the worst-case error ![]() with the ideal weights

with the ideal weights ![]() . For this purpose, we can use the following formula:

. For this purpose, we can use the following formula:

![]()

![]()

I find the appearance of the Nyström approximation in this context to be surprising and delightful. Previously on this blog, we’ve seen (column) Nyström approximation in the context of matrix low-rank approximation. Now, a continuum analog of the matrix Nyström approximation has appeared in the error formula for numerical integration.

The appearance of the Nyström approximation in the kernel quadrature error ![]() also suggests a strategy for picking the nodes.

also suggests a strategy for picking the nodes.

Node selection strategy. We should pick the nodes

to make the Nyström approximation

as accurate as possible.

The closer ![]() is to

is to ![]() , the smaller the function

, the smaller the function ![]() is and, thus, the smaller the error

is and, thus, the smaller the error

![]()

Indeed, all three of these algorithms—randomly pivoted Cholesky, ridge leverage score sampling, and determinantal point process sampling—have been studied for kernel quadrature. The first of these algorithms, randomly pivoted Cholesky, is the subject of our paper. We show that this simple, adaptive sampling method produces excellent nodes for kernel quadrature. Intuitively, randomly pivoted Cholesky is effective because it is repulsive: After having picked nodes ![]() , it has a high probability of placing the next node

, it has a high probability of placing the next node ![]() far from the previously selected nodes.

far from the previously selected nodes.

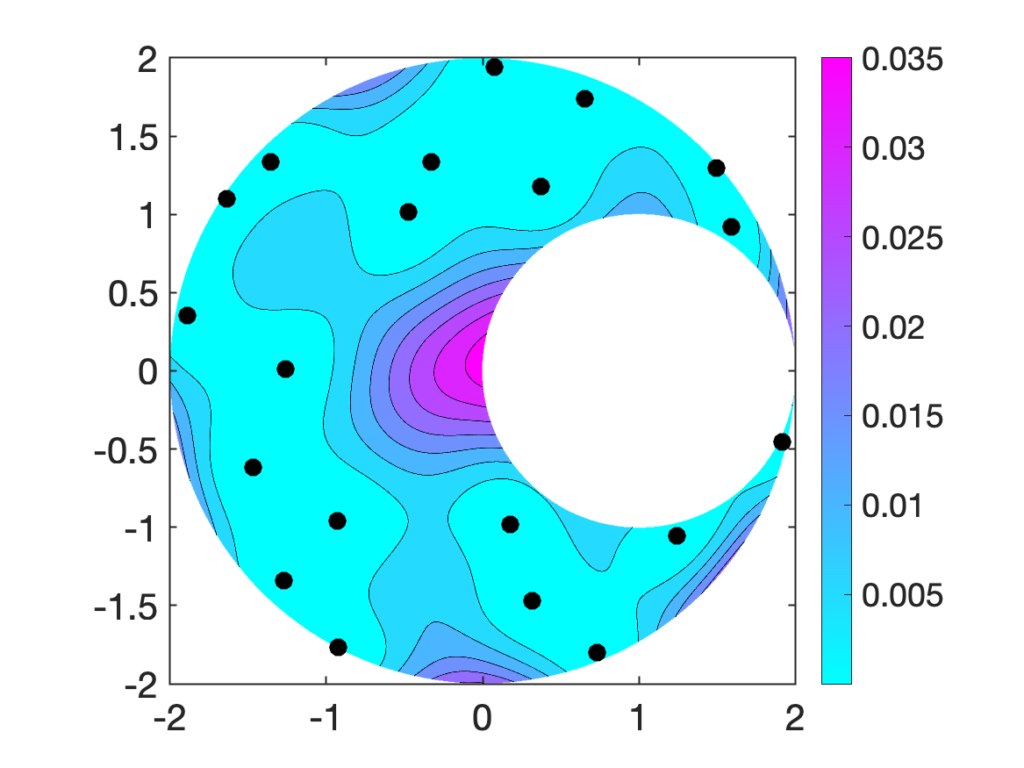

The following image shows 20 nodes selected by randomly pivoted Cholesky in a crescent-shaped region. The cyan–pink shading denotes the probability distribution for picking the next node. We see that the center of the crescent does not have any nodes, and thus is most likely to receive a node during the next round of sampling.

In our paper, we demonstrate—empirically and theoretically—that randomly pivoted Cholesky produces excellent nodes for quadrature. We also discuss efficient rejection sampling algorithms for sampling nodes with the randomly pivoted Cholesky distribution. Check out the paper for details!

One thought on “Five Interpretations of Kernel Quadrature”