My first experience with the numerical solution of partial differential equations (PDEs) was with finite difference methods. I found finite difference methods to be somewhat fiddly: it is quite an exercise in patience to, for example, work out the appropriate fifth-order finite difference approximation to a second-order differential operator on an irregularly spaced grid, and even more of a pain to prove that the scheme is convergent. I found that I liked the finite element method a lot better[^1] as there was a unifying underlying functional analytic theory, Galerkin approximation, which showed how, in a sense, the finite element method computed the best possible approximate solution to the PDE among a family of potential solutions. However, I came to feel later that Galerkin approximation was, in a sense, the more fundamental concept, with the finite element method being one particular instantiation (with spectral methods, boundary element methods, and the conjugate gradient method being others). In this post, I hope to give a general introduction to Galerkin approximation as computing the best possible approximate solution to a problem within a certain finite-dimensional space of possibilities.

[^1]: Finite element methods certainly have their own fiddly-nesses (as anyone who has worked with a serious finite element code can no doubt attest).

Systems of Linear Equations

Let us begin with a linear algebraic example, which is unburdened by some of the technicalities of partial differential equations. Suppose we want to solve a very large system of linear equations $Ax = b$, where the matrix $A$ is symmetric and positive definite (SPD). Suppose that $A$ is $N \times N$, where $N$ is so large that we don’t even want to store all $N$ components of the solution $x$ on our computer. What can we possibly do?

One solution is to consider only solutions $\hat{x}$ lying in a subspace $S$ of the set of all possible solutions $\mathbb{R}^N$. If this subspace has a basis $s_1, s_2, \ldots, s_n$, then the solution $\hat{x}$ can be represented as $\hat{x} = c_1 s_1 + c_2 s_2 + \cdots + c_n s_n$ and one only has to store the $n$ numbers $c_1, c_2, \ldots, c_n$. In general, $x$ will not belong to the subspace $S$ and we must settle for an approximate solution $\hat{x} \in S$.

The next step is to convert the system of linear equations $Ax = b$ into a form which is more amenable to approximate solution on a subspace $S$. Note that the equation $Ax = b$ encodes $N$ different linear equations $a_i^\top x = b_i$, where $a_i^\top$ is the $i$th row of $A$ and $b_i$ is the $i$th element of $b$. Note that the $i$th equation is equivalent to the condition $e_i^\top A x = e_i^\top b$, where $e_i$ is the vector with zeros in all entries except for the $i$th entry, which is a one. More generally, by multiplying the equation $Ax = b$ by an arbitrary test row vector $y^\top$, we get $y^\top A x = y^\top b$ for all $y \in \mathbb{R}^N$. We refer to this as a variational formulation of the linear system of equations $Ax = b$. In fact, one can easily show that the variational problem is equivalent to the system of linear equations:

$$
Ax = b \quad \Longleftrightarrow \quad y^\top A x = y^\top b \quad \text{for all } y \in \mathbb{R}^N. \tag{1}
$$

Since we are seeking an approximate solution from the subspace $S$, it is only natural that we also restrict our test vectors $y$ to lie in the subspace $S$. Thus, we seek an approximate solution $\hat{x} \in S$ to the system of equations $Ax = b$ as the solution of the variational problem

$$
y^\top A \hat{x} = y^\top b \quad \text{for all } y \in S. \tag{2}
$$
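As a concrete illustration of Eq. (2), here is a minimal NumPy sketch. It assumes (our choice for illustration) that $S$ is given as the column span of an $N \times n$ matrix `Q`; writing $\hat{x} = Qc$ and testing only against the columns of `Q` turns Eq. (2) into the small $n \times n$ system $(Q^\top A Q)c = Q^\top b$. The function name `galerkin_solve` and the random test data are hypothetical. A genuinely huge problem would apply $A$ to the basis vectors without ever storing it; a small dense $A$ is used here only so the example runs.

```python
import numpy as np

def galerkin_solve(A, b, Q):
    """Galerkin approximation to A x = b from the subspace S spanned by the columns of Q.

    Writes x_hat = Q c, tests Eq. (2) against the columns of Q, and solves the
    resulting n-by-n system (Q^T A Q) c = Q^T b.
    """
    A_small = Q.T @ A @ Q   # n-by-n; SPD when A is SPD and Q has independent columns
    b_small = Q.T @ b
    c = np.linalg.solve(A_small, b_small)
    return Q @ c, c

# Made-up test data: a random SPD matrix and a random 5-dimensional subspace S.
rng = np.random.default_rng(0)
N, n = 200, 5
G = rng.standard_normal((N, N))
A = G @ G.T + N * np.eye(N)          # symmetric positive definite
b = rng.standard_normal(N)
Q = rng.standard_normal((N, n))      # columns form a basis for S (with probability one)

x_hat, c = galerkin_solve(A, b, Q)

# Eq. (2) must hold for every y in S; by linearity it is enough to test the columns of Q.
residual_in_S = Q.T @ (A @ x_hat - b)
```

Only the $n$ coefficients `c` (together with the basis) describe $\hat{x}$, which is the storage saving the text is after.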

One can relatively easily show this problem possesses a unique solution $\hat{x}$.[^2] In what sense is $\hat{x}$ a good approximate solution for $Ax = b$? To answer this question, we need to introduce a special way of measuring the error of an approximate solution to $Ax = b$. We define the $A$-inner product of vectors $x$ and $y$ to be $\langle x, y \rangle_A = y^\top A x$ and the associated $A$-norm $\|x\|_A = \sqrt{\langle x, x \rangle_A} = \sqrt{x^\top A x}$.[^3] All of the properties satisfied by the familiar Euclidean inner product and norm carry over to the new $A$-inner product and norm (e.g., the Pythagorean theorem). Indeed, for those familiar, one can show $\langle \cdot, \cdot \rangle_A$ satisfies all the axioms for an inner product space.

[^2]: Here is a linear algebraic proof. As we shall see below, the same conclusion will also follow from the general Lax-Milgram theorem. Let $Q$ be an $N \times n$ matrix whose columns form a basis for $S$. Then every $y \in S$ can be written as $y = Qz$ for some $z \in \mathbb{R}^n$. Thus, writing $\hat{x} = Q\hat{c}$, we have that $z^\top Q^\top A Q \hat{c} = z^\top Q^\top b$ for every $z \in \mathbb{R}^n$. But this is just a variational formulation of the equation $(Q^\top A Q)\hat{c} = Q^\top b$. The matrix $Q^\top A Q$ is SPD since $z^\top Q^\top A Q z = (Qz)^\top A (Qz) > 0$ for $z \neq 0$, because $Qz \neq 0$ (the columns of $Q$ are linearly independent) and $A$ is SPD. Thus $(Q^\top A Q)\hat{c} = Q^\top b$ has a unique solution $\hat{c}$, and $\hat{x} = Q\hat{c}$ is the unique solution to the variational problem Eq. (2).

[^3]: Note that the $A$-norm can be seen as a weighted Euclidean norm, where the components of the vector $x$ in the directions of the eigenvectors of $A$ are scaled by the square roots of their corresponding eigenvalues. Concretely, if $x = \alpha_1 v_1 + \cdots + \alpha_N v_N$, where $v_1, \ldots, v_N$ are orthonormal eigenvectors of $A$ with eigenvalues $\lambda_1, \ldots, \lambda_N$ ($A v_i = \lambda_i v_i$), then we have $\|x\|_A = \sqrt{\lambda_1 \alpha_1^2 + \cdots + \lambda_N \alpha_N^2}$.
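The eigenvalue description of the $A$-norm is easy to check numerically. The sketch below (a made-up random SPD matrix; helper names `a_inner` and `a_norm` are our own) computes $\|x\|_A = \sqrt{x^\top A x}$ directly and again from the coordinates $\alpha_i$ of $x$ in an orthonormal eigenbasis of $A$, as $\sqrt{\lambda_1 \alpha_1^2 + \cdots + \lambda_N \alpha_N^2}$.

```python
import numpy as np

def a_inner(x, y, A):
    """A-inner product <x, y>_A = y^T A x."""
    return y @ A @ x

def a_norm(x, A):
    """A-norm ||x||_A = sqrt(x^T A x); requires A to be SPD."""
    return np.sqrt(a_inner(x, x, A))

rng = np.random.default_rng(1)
N = 50
G = rng.standard_normal((N, N))
A = G @ G.T + np.eye(N)              # made-up SPD test matrix
x = rng.standard_normal(N)
y = rng.standard_normal(N)

# Orthonormal eigenvectors v_i (columns of V) and eigenvalues lam_i of A.
lam, V = np.linalg.eigh(A)
alpha = V.T @ x                      # coordinates of x: x = sum_i alpha_i v_i
norm_direct = a_norm(x, A)
norm_eig = np.sqrt(np.sum(lam * alpha**2))   # sqrt(lam_1 alpha_1^2 + ... + lam_N alpha_N^2)
```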

We shall now show that the error $x - \hat{x}$ between $x$ and its Galerkin approximation $\hat{x}$ is $A$-orthogonal to the space $S$ in the sense that $\langle x - \hat{x}, y \rangle_A = 0$ for all $y \in S$. This follows from the straightforward calculation, for $y \in S$,

$$
\langle x - \hat{x}, y \rangle_A = y^\top A (x - \hat{x}) = y^\top A x - y^\top A \hat{x} = y^\top b - y^\top b = 0, \tag{3}
$$

where $y^\top A x = y^\top b$ since $x$ solves the variational problem Eq. (1) and $y^\top A \hat{x} = y^\top b$ since $\hat{x}$ solves the variational problem Eq. (2).
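Eq. (3) can be observed numerically. In this sketch (random made-up data, with $N$ small enough that the exact solution $x$ can be computed for comparison), the error $x - \hat{x}$ is far from zero, yet it is $A$-orthogonal to every vector in $S$.

```python
import numpy as np

rng = np.random.default_rng(2)
N, n = 100, 4
G = rng.standard_normal((N, N))
A = G @ G.T + N * np.eye(N)       # made-up SPD test matrix
b = rng.standard_normal(N)
Q = rng.standard_normal((N, n))   # basis for the subspace S

x = np.linalg.solve(A, b)                          # exact solution (affordable only because N is small)
x_hat = Q @ np.linalg.solve(Q.T @ A @ Q, Q.T @ b)  # Galerkin approximation from S
error = x - x_hat

# <x - x_hat, y>_A = y^T A (x - x_hat) for an arbitrary y in S; should be ~0 up to rounding.
y = Q @ rng.standard_normal(n)
a_orth = y @ A @ error
```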

The fact that the error $x - \hat{x}$ is $A$-orthogonal to $S$ can be used to show that $\hat{x}$ is, in a sense, the best approximate solution to $Ax = b$ in the subspace $S$. First note that, for any approximate solution $\tilde{x} \in S$ to $Ax = b$, the vector $\hat{x} - \tilde{x}$ lies in $S$ and is therefore $A$-orthogonal to $x - \hat{x}$. Thus, by the Pythagorean theorem,

$$
\|x - \tilde{x}\|_A^2 = \|x - \hat{x}\|_A^2 + \|\hat{x} - \tilde{x}\|_A^2 \ge \|x - \hat{x}\|_A^2. \tag{4}
$$

Consequently, the Galerkin approximation $\hat{x}$ is the best approximate solution to $Ax = b$ in the subspace $S$ with respect to the $A$-norm: $\|x - \hat{x}\|_A \le \|x - \tilde{x}\|_A$ for every $\tilde{x} \in S$. Thus, if one picks a subspace $S$ in which the solution $x$ almost lies,[^4] then $\hat{x}$ will be a good approximate solution to $Ax = b$, irrespective of the size of the subspace $S$.

[^4]: In the sense that $\min_{\tilde{x} \in S} \|x - \tilde{x}\|_A$ is small.
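Eq. (4) can be tested against many competitors at once. This sketch (made-up random data) draws 1000 random candidates $\tilde{x} \in S$, records the smallest amount by which any of them beats $\hat{x}$ in the $A$-norm (it should never be negative), and measures how closely the Pythagorean identity holds.

```python
import numpy as np

def a_norm(v, A):
    """A-norm ||v||_A = sqrt(v^T A v)."""
    return np.sqrt(v @ A @ v)

# Made-up test data: random SPD matrix, right-hand side, and 4-dimensional subspace S.
rng = np.random.default_rng(3)
N, n = 100, 4
G = rng.standard_normal((N, N))
A = G @ G.T + N * np.eye(N)
b = rng.standard_normal(N)
Q = rng.standard_normal((N, n))        # basis for S

x = np.linalg.solve(A, b)
x_hat = Q @ np.linalg.solve(Q.T @ A @ Q, Q.T @ b)
err_hat = a_norm(x - x_hat, A)

smallest_gap = np.inf                  # min over candidates of ||x - x_tilde||_A - ||x - x_hat||_A
largest_pythag_mismatch = 0.0          # largest relative violation of Eq. (4)
for _ in range(1000):
    x_tilde = Q @ rng.standard_normal(n)       # some other approximate solution in S
    err_tilde = a_norm(x - x_tilde, A)
    smallest_gap = min(smallest_gap, err_tilde - err_hat)
    pythag = err_hat**2 + a_norm(x_hat - x_tilde, A) ** 2
    largest_pythag_mismatch = max(largest_pythag_mismatch, abs(err_tilde**2 - pythag) / err_tilde**2)
```

Note that the comparison is in the $A$-norm; in the ordinary Euclidean norm $\hat{x}$ need not be the closest point of $S$ to $x$.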

Variational Formulations of Differential Equations

As I hope I’ve conveyed in the previous section, Galerkin approximation is not a technique that only works for finite element methods or even just PDEs. However, differential and integral equations are among the most important applications of Galerkin approximation, since the space of all possible solutions to a differential or integral equation is infinite-dimensional: approximation in a finite-dimensional space is absolutely critical. In this section, I want to give a brief introduction to how one can develop variational formulations of differential equations amenable to Galerkin approximation. For simplicity of presentation, I shall focus on a one-dimensional problem which is described by an ordinary differential equation (ODE) boundary value problem. All of this generalizes wholesale to partial differential equations in multiple dimensions, though there are some additional technical and notational difficulties (some of which I will address in footnotes). Variational formulation of differential equations is a topic with important technical subtleties which I will end up brushing past. Rigorous references are Chapters 5 and 6 of Evans’ Partial Differential Equations or Chapters 0-2 of Brenner and Scott’s The Mathematical Theory of Finite Element Methods.

As our model problem for which we seek a variational formulation, we will focus on the one-dimensional Poisson equation, which appears in the study of electrostatics, gravitation, diffusion, heat flow, and fluid mechanics. The unknown $u$ is a real-valued function on an interval which we take to be $[0, 1]$.[^5] We assume Dirichlet boundary conditions that $u$ is equal to zero on the boundary $\{0, 1\}$.[^6] Poisson’s equation then reads[^7]

$$
-u''(x) = f(x) \quad \text{for } 0 < x < 1, \qquad u(0) = u(1) = 0. \tag{5}
$$

[^5]: In higher dimensions, one can consider an arbitrary domain $\Omega \subseteq \mathbb{R}^d$ with, for example, a Lipschitz boundary.

[^6]: In higher dimensions, one has $u = 0$ on the boundary $\partial\Omega$ of the region $\Omega$.

[^7]: In higher dimensions, Poisson’s equation reads $-\nabla^2 u = f$ on $\Omega$ and $u = 0$ on $\partial\Omega$, where $\nabla^2 = \partial^2/\partial x_1^2 + \cdots + \partial^2/\partial x_d^2$ is the Laplacian operator.

We wish to develop a variational formulation of this differential equation, similar to how we developed a variational formulation of the linear system of equations in the previous section. To develop our variational formulation, we take inspiration from physics. If $u(x)$ represents, say, the temperature at a point $x$, we are never able to measure $u(x)$ exactly. Rather, we can measure the temperature in a region around $x$ with a thermometer. No matter how carefully we engineer our thermometer, our thermometer tip will have some volume occupying a region $R$ in space. The temperature $T$ measured by our thermometer will be the average temperature in the region $R$ or, more generally, a weighted average $T = \int_0^1 u(x) v(x) \, dx$, where $v$ is a weighting function which is zero outside the region $R$. Now let’s use our thermometer to “measure” our differential equation:

$$
-\int_0^1 u''(x) v(x) \, dx = \int_0^1 f(x) v(x) \, dx. \tag{6}
$$

This integral expression is some kind of variational formulation of our differential equation, as it is an equation involving the solution $u$ to our differential equation which must hold for every averaging function $v$. (The precise meaning of “every” will be forthcoming.) It will benefit us greatly to make this expression more “symmetric” with respect to $u$ and $v$. To do this, we shall integrate by parts:[^8]

$$
-\int_0^1 u''(x) v(x) \, dx = \int_0^1 u'(x) v'(x) \, dx - u'(1) v(1) + u'(0) v(0). \tag{7}
$$

[^8]: Integrating by parts is harder in higher dimensions. My personal advice for integrating by parts in higher dimensions is to remember that integration by parts is ultimately a result of the product rule. As such, to integrate by parts, we first write an expression involving our integrand using the product rule of some differential operator and then integrate both sides. In this case, notice that $\nabla \cdot (v \nabla u) = \nabla u \cdot \nabla v + v \nabla^2 u$. Rearranging and integrating, we see that $-\int_\Omega v \nabla^2 u \, dx = \int_\Omega \nabla u \cdot \nabla v \, dx - \int_\Omega \nabla \cdot (v \nabla u) \, dx$. We then apply the divergence theorem to the last term to get $-\int_\Omega v \nabla^2 u \, dx = \int_\Omega \nabla u \cdot \nabla v \, dx - \int_{\partial\Omega} v \, (\nabla u \cdot n) \, dS$, where $n$ represents an outward facing unit normal to the boundary $\partial\Omega$ and $dS$ represents integration on the surface $\partial\Omega$. If $v$ is zero on $\partial\Omega$, we conclude $-\int_\Omega v \nabla^2 u \, dx = \int_\Omega \nabla u \cdot \nabla v \, dx$ for all nice functions $v$ on $\Omega$ satisfying $v = 0$ on $\partial\Omega$.

In particular, if $v$ is zero on the boundary $\{0, 1\}$, then the last two terms vanish and we’re left with the variational equation

$$
\int_0^1 u'(x) v'(x) \, dx = \int_0^1 f(x) v(x) \, dx. \tag{8}
$$
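As a sanity check on Eqs. (7) and (8), here is a short NumPy computation on an illustrative example of our choosing: $u(x) = \sin(\pi x)$, which solves Eq. (5) with $f(x) = \pi^2 \sin(\pi x)$. Integrals are approximated with Gauss-Legendre quadrature from `numpy.polynomial.legendre.leggauss`. For the test function $v(x) = x(1 - x)$, which vanishes at $0$ and $1$, both sides of Eq. (8) agree (both equal $4/\pi$). For $v = 1$, which does not vanish at the endpoints, Eq. (8) fails, but Eq. (7) still holds once the boundary terms are included.

```python
import numpy as np

# Gauss-Legendre nodes and weights on [-1, 1], mapped to [0, 1].
xg, wg = np.polynomial.legendre.leggauss(30)
x = (xg + 1.0) / 2.0
w = wg / 2.0

def integrate(values):
    """Approximate int_0^1 g(x) dx from samples of g at the quadrature nodes x."""
    return np.sum(w * values)

# Illustrative problem: u(x) = sin(pi x) solves Eq. (5) with f(x) = pi^2 sin(pi x).
def u_prime(t):
    return np.pi * np.cos(np.pi * t)

def f(t):
    return np.pi**2 * np.sin(np.pi * t)

# A test function that vanishes at 0 and 1: v(x) = x (1 - x), v'(x) = 1 - 2x.
lhs8 = integrate(u_prime(x) * (1.0 - 2.0 * x))   # int u' v' dx
rhs8 = integrate(f(x) * x * (1.0 - x))           # int f v dx

# A test function that does NOT vanish at 0 and 1: v(x) = 1, v'(x) = 0.
lhs_v1 = integrate(u_prime(x) * 0.0)             # int u' v' dx = 0
rhs_v1 = integrate(f(x) * 1.0)                   # int f v dx = 2 pi
boundary_terms = -u_prime(1.0) * 1.0 + u_prime(0.0) * 1.0   # -u'(1) v(1) + u'(0) v(0)
```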

Compare the variational formulation of the Poisson equation Eq. (8) to the variational formulation of the system of linear equations $Ax = b$ in Eq. (1). The solution vector $x$ in the differential equation context is a function $u$ satisfying the boundary condition of $u$ being zero on the boundary $\{0, 1\}$. The right-hand side $b$ is replaced by a function $f$ on the interval $[0, 1]$. The test vector $y$ is replaced by a test function $v$ on the interval $[0, 1]$. The matrix product expression $y^\top A x$ is replaced by the integral $\int_0^1 u'(x) v'(x) \, dx$. The product $y^\top b$ is replaced by the integral $\int_0^1 f(x) v(x) \, dx$. As we shall soon see, there is a unifying theory which treats both of these contexts simultaneously.

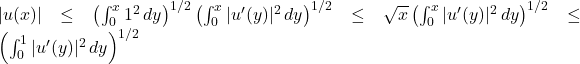

Before this unifying theory, we must address the question of which functions $v$ we will consider in our variational formulation. One can show that all of the calculations we did in this section hold if $v$ is a continuously differentiable function on $[0, 1]$ which is zero at the endpoints $0$ and $1$ and $u$ is a twice continuously differentiable function on $[0, 1]$. Because of technical functional analytic considerations, we shall actually want to expand the class of functions in our variational formulation to even more functions $v$. Specifically, we shall consider all functions $v$ which (A) are square-integrable ($\int_0^1 v(x)^2 \, dx$ is finite), (B) possess a square-integrable derivative[^9] $v'$ ($\int_0^1 v'(x)^2 \, dx$ is finite), and (C) are zero on the boundary. We refer to this class of functions as the Sobolev space $H^1_0([0, 1])$.[^10]

[^9]: More specifically, we only insist that $v$ possess a square-integrable weak derivative.

[^10]: The class of functions satisfying (A) and (B) but not necessarily (C) is the Sobolev space $H^1([0, 1])$. For an arbitrary function in $H^1([0, 1])$, the existence of a well-defined restriction to the boundary points $0$ and $1$ is actually nontrivial to show, requiring showing the existence of a trace operator. Chapter 5 of Evans’ Partial Differential Equations is a good introduction to Sobolev spaces. The Sobolev spaces $H^1([0, 1])$ and $H^1_0([0, 1])$ naturally extend to spaces $H^1(\Omega)$ and $H^1_0(\Omega)$ for an arbitrary domain $\Omega \subseteq \mathbb{R}^d$ with a nice boundary.

Now this is where things get really strange. Note that it is possible for a function $u$ to satisfy the variational formulation Eq. (8) but for $u$ not to satisfy the Poisson equation Eq. (5). A simple example is when $f$ possesses a discontinuity (say, for example, a step discontinuity where $f$ is constant up to some point $x_0 \in (0, 1)$ and then jumps to a different constant value). Then no twice continuously differentiable $u$ will satisfy Eq. (5) at every point in $(0, 1)$ (its second derivative would be continuous, while $f$ jumps), and yet a solution $u$ to the variational problem Eq. (8) exists! The variational formulation actually allows us to give a reasonable definition of “solving the differential equation” when a classical solution to $-u'' = f$ does not exist. Our only requirement for the variational problem is that $f$, itself, belongs to the space $L^2([0, 1])$ of square-integrable functions. A solution to the variational problem Eq. (8) is called a weak solution to the differential equation Eq. (5) because, as we have argued, a weak solution to Eq. (8) need not always solve Eq. (5).[^11]

[^11]: One can show that any classical solution to Eq. (5) solves Eq. (8). Given certain conditions on $f$, one can go the other way, showing that weak solutions are indeed bona fide classical solutions. This is the subject of regularity theory.
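The step-forcing example can be made concrete. Take, purely for illustration, $f(x) = 0$ for $x < 1/2$ and $f(x) = 1$ for $x \ge 1/2$ (values chosen here, not taken from the text). Solving $-u'' = f$ on each piece and requiring $u$ and $u'$ to match at $x = 1/2$ gives $u(x) = x/8$ for $x < 1/2$ and $u(x) = x/8 - (x - 1/2)^2/2$ for $x \ge 1/2$. This $u$ is continuously differentiable but $u''$ jumps at $1/2$, so it is not a classical solution; the sketch checks that it nonetheless satisfies Eq. (8) for the test functions $\sin(k\pi x)$, $k = 1, \ldots, 5$.

```python
import numpy as np

def gauss_on(a, b, m=40):
    """Gauss-Legendre nodes and weights for integrating over [a, b]."""
    xg, wg = np.polynomial.legendre.leggauss(m)
    return a + (xg + 1.0) * (b - a) / 2.0, wg * (b - a) / 2.0

def integrate_split(g):
    """Approximate int_0^1 g(x) dx, splitting at x = 1/2 where f jumps."""
    total = 0.0
    for a, b in [(0.0, 0.5), (0.5, 1.0)]:
        x, w = gauss_on(a, b)
        total += np.sum(w * g(x))
    return total

def f(x):
    """Illustrative step forcing: 0 for x < 1/2, 1 for x >= 1/2."""
    return np.where(x >= 0.5, 1.0, 0.0)

def u(x):
    """Weak solution: x/8 for x < 1/2, x/8 - (x - 1/2)^2 / 2 for x >= 1/2."""
    return np.where(x < 0.5, x / 8.0, x / 8.0 - (x - 0.5) ** 2 / 2.0)

def u_prime(x):
    """u'(x): continuous, but its derivative u'' jumps from 0 to -1 at x = 1/2."""
    return np.where(x < 0.5, 1.0 / 8.0, 1.0 / 8.0 - (x - 0.5))

# Check Eq. (8) with test functions v_k(x) = sin(k pi x), which vanish at 0 and 1.
mismatches = []
for k in range(1, 6):
    lhs = integrate_split(lambda x: u_prime(x) * k * np.pi * np.cos(k * np.pi * x))
    rhs = integrate_split(lambda x: f(x) * np.sin(k * np.pi * x))
    mismatches.append(abs(lhs - rhs))
```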

The Lax-Milgram Theorem

Let us now build up an abstract language which allows us to use Galerkin approximation both for linear systems of equations and PDEs (as well as other contexts). If one compares the expressions $y^\top A x$ from the linear systems context and $\int_0^1 u'(x) v'(x) \, dx$ from the differential equation context, one recognizes that both these expressions are so-called bilinear forms: they depend on two arguments ($x$ and $y$ or $u$ and $v$) and are linear in each argument independently if the other one is fixed. For example, if one defines $a(u, v) = \int_0^1 u'(x) v'(x) \, dx$, one has $a(\alpha u_1 + \beta u_2, v) = \alpha\, a(u_1, v) + \beta\, a(u_2, v)$. Similarly, if one defines $a(x, y) = y^\top A x$, then $a(x, \alpha y_1 + \beta y_2) = \alpha\, a(x, y_1) + \beta\, a(x, y_2)$.

Implicitly swimming in the background is some space of vectors or functions which this bilinear form $a$ is defined upon. In the linear system of equations context, this space is the space $\mathbb{R}^N$ of $N$-dimensional vectors, and in the differential equation context, this space is $H^1_0([0, 1])$ as defined in the previous section.[^12] Call this space $V$. We shall assume that $V$ is a special type of linear space called a Hilbert space: an inner product space (with inner product $\langle \cdot, \cdot \rangle_V$) where every Cauchy sequence converges to an element in $V$ (in the inner product-induced norm).[^13] The Cauchy sequence convergence property, also known as metric completeness, is important because we shall often deal with a sequence of elements $u_1, u_2, u_3, \ldots \in V$ whose convergence to a vector $u \in V$ we will need to establish. (Think of $u_1, u_2, u_3, \ldots$ as a sequence of Galerkin approximations to a solution $u$.)

[^12]: The connection between vectors and functions is even more natural if one considers a function $u$ as a vector of infinite length, with one entry $u(x)$ for each real number $x \in [0, 1]$.

[^13]: Note that every inner product space has a unique completion to a Hilbert space. For example, if one considers the space $C_c^\infty((0, 1))$ of smooth functions which are zero near the boundary of $[0, 1]$ with the inner product $\langle u, v \rangle_{H^1} = \int_0^1 \left( u(x) v(x) + u'(x) v'(x) \right) dx$, the completion is $H^1_0([0, 1])$. A natural extension to higher dimensions holds.

With these formalities, an abstract variational problem takes the form

$$
\text{Find } u \in V \text{ such that } a(u, v) = F(v) \quad \text{for all } v \in V, \tag{9}
$$

where $a$ is a bilinear form on $V$ and $F$ is a linear form on $V$ (a linear map $F : V \to \mathbb{R}$). There is a beautiful and general theorem called the Lax-Milgram theorem which establishes existence and uniqueness of solutions to a problem like Eq. (9).

Theorem (Lax-Milgram): Let ![]() and

and ![]() satisfy the following properties:

satisfy the following properties:

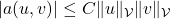

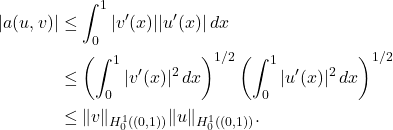

- (Boundedness of

) There exists a constant

) There exists a constant  such that every

such that every  ,

,  .

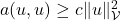

. - (Coercivity) There exists a positive constant

such that

such that  for every

for every  .

. - (Boundedness of

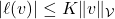

) There exists a constant

) There exists a constant  such that

such that  for every

for every  .

.

Then the variational problem Eq. (9) possesses a unique solution.

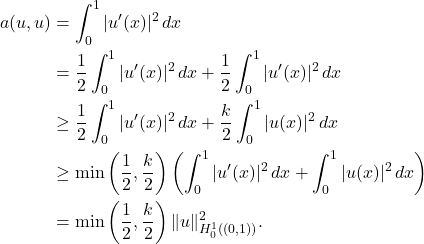

For our cases, $a$ will also be symmetric: $a(u, v) = a(v, u)$ for all $u, v \in V$. While the Lax-Milgram theorem holds without symmetry, let us continue our discussion with this additional symmetry assumption. Note that, taken together, properties (1-2) say that the $a$-inner product, defined as $\langle u, v \rangle_a = a(u, v)$, is comparable to the standard inner product $\langle u, v \rangle_V$ of $V$: the quantity $a(u, u)$ is never more than a constant factor bigger or smaller than $\langle u, u \rangle_V = \|u\|_V^2$.[^14]

[^14]: That is, the $a$-norm $\|u\|_a = \sqrt{a(u, u)}$ and the $V$-norm are equivalent in the sense that $\sqrt{c}\, \|u\|_V \le \|u\|_a \le \sqrt{C}\, \|u\|_V$ for every $u \in V$, where $C$ and $c$ are the constants from properties (1) and (2). So the norms $\|\cdot\|_a$ and $\|\cdot\|_V$ define the same topology.
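For the linear-systems example, with $V = \mathbb{R}^N$, the Euclidean inner product, and $a(x, y) = y^\top A x$ for an SPD matrix $A$, the constants in the boundedness and coercivity properties can be written down explicitly: one may take $C = \lambda_{\max}(A)$ and $c = \lambda_{\min}(A)$. The sketch below (made-up SPD matrix) checks both inequalities on random vectors; the ratio $a(x, x)/\|x\|^2 = \|x\|_A^2/\|x\|^2$ landing in $[c, C]$ is exactly the norm equivalence.

```python
import numpy as np

rng = np.random.default_rng(4)
N = 40
G = rng.standard_normal((N, N))
A = G @ G.T + 0.5 * np.eye(N)          # made-up SPD matrix

lam = np.linalg.eigvalsh(A)            # eigenvalues in ascending order
c_coercive, C_bound = lam[0], lam[-1]  # candidate constants c and C

bound_ratios, coercive_ratios = [], []
for _ in range(1000):
    x, y = rng.standard_normal(N), rng.standard_normal(N)
    # Boundedness: |a(x, y)| / (||x|| ||y||) should never exceed C.
    bound_ratios.append(abs(y @ A @ x) / (np.linalg.norm(x) * np.linalg.norm(y)))
    # Coercivity: a(x, x) / ||x||^2 = ||x||_A^2 / ||x||^2 should never drop below c
    # (and never exceed C, which gives the norm equivalence).
    coercive_ratios.append((x @ A @ x) / np.linalg.norm(x) ** 2)
```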

Let us now see how the Lax-Milgram theorem can apply to our two examples. Readers who want a more “big picture” perspective can comfortably skip to the next section. For those who want to see Lax-Milgram in action, see the discussion below.

General Galerkin Approximation

With our general theory set up, Galerkin approximation for a general variational problem is the same as it was for a system of linear equations. First, we pick an approximation space $S$ which is a subspace of $V$. We then have the Galerkin variational problem

$$
\text{Find } \hat{u} \in S \text{ such that } a(\hat{u}, v) = F(v) \quad \text{for all } v \in S. \tag{13}
$$

Provided $a$ and $F$ satisfy the conditions of the Lax-Milgram theorem, there is a unique solution $\hat{u}$ to the problem Eq. (13) (the three properties are inherited by $S$, which, being finite-dimensional, is itself a Hilbert space). Moreover, the special property of Galerkin approximation holds: the error $u - \hat{u}$ is $a$-orthogonal to the subspace $S$. Consequently, $\hat{u}$ is the best approximate solution to the variational problem Eq. (9) in the $a$-norm. To see the $a$-orthogonality, we have that, for any $v \in S$,

$$
\langle u - \hat{u}, v \rangle_a = a(u - \hat{u}, v) = a(u, v) - a(\hat{u}, v) = F(v) - F(v) = 0, \tag{14}
$$

where we use the variational equation Eq. (9) for $u$ and Eq. (13) for $\hat{u}$. Note the similarities with Eq. (3). Thus, using the Pythagorean theorem for the $a$-norm, for any other approximate solution $\tilde{u} \in S$, we have[^22]

$$
\|u - \tilde{u}\|_a^2 = \|u - \hat{u}\|_a^2 + \|\hat{u} - \tilde{u}\|_a^2 \ge \|u - \hat{u}\|_a^2. \tag{15}
$$

Put simply, $\hat{u}$ is the best approximation to $u$ in the $a$-norm.[^23]

[^22]: Compare Eq. (4).

[^23]: Using the fact that the norms $\|\cdot\|_a$ and $\|\cdot\|_V$ are equivalent by virtue of properties (1-2), one can also show that $\hat{u}$ is within a constant factor $\sqrt{C/c}$ of the best approximation in the norm $\|\cdot\|_V$, that is, $\|u - \hat{u}\|_V \le \sqrt{C/c}\, \min_{\tilde{u} \in S} \|u - \tilde{u}\|_V$. This is known as Céa’s Lemma.
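Céa’s Lemma can be illustrated in the linear-systems setting, taking $V = \mathbb{R}^N$ with the Euclidean norm and $a(x, y) = y^\top A x$, for which one may take $C = \lambda_{\max}(A)$ and $c = \lambda_{\min}(A)$. The Galerkin approximation is the best approximation in the $A$-norm but generally not in the Euclidean norm. This sketch (made-up SPD matrix and subspace) compares it with the Euclidean best approximation, computed by orthogonal projection onto $S$, and checks that the ratio of the two errors lies between $1$ and $\sqrt{C/c}$.

```python
import numpy as np

rng = np.random.default_rng(6)
N, n = 80, 5
G = rng.standard_normal((N, N))
A = G @ G.T + np.eye(N)                            # made-up SPD matrix
b = rng.standard_normal(N)
Q, _ = np.linalg.qr(rng.standard_normal((N, n)))   # orthonormal basis for S

x = np.linalg.solve(A, b)
x_gal = Q @ np.linalg.solve(Q.T @ A @ Q, Q.T @ b)  # Galerkin: best in the A-norm
x_proj = Q @ (Q.T @ x)                             # orthogonal projection: best in the Euclidean norm

lam = np.linalg.eigvalsh(A)
cea_factor = np.sqrt(lam[-1] / lam[0])             # sqrt(C / c)

err_gal = np.linalg.norm(x - x_gal)
err_best = np.linalg.norm(x - x_proj)
ratio = err_gal / err_best                         # expected: 1 <= ratio <= sqrt(C / c)
```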

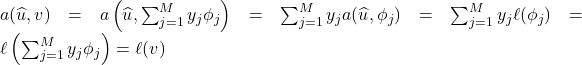

Galerkin approximation is powerful because it allows us to approximate an infinite-dimensional problem by a finite-dimensional one. If we let ![]() be a basis for the space

be a basis for the space ![]() , then the approximate solution

, then the approximate solution ![]() can be represented as

can be represented as ![]() . Since

. Since ![]() form a basis of

form a basis of ![]() , to check that the Galerkin variational problem Eq. (13) holds for all

, to check that the Galerkin variational problem Eq. (13) holds for all ![]() it is sufficient to check that it holds for

it is sufficient to check that it holds for ![]() .24For an arbitrary

.24For an arbitrary ![]() can be written as

can be written as ![]() , so

, so  . Thus, plugging in

. Thus, plugging in ![]() and

and ![]() into Eq. (13), we get (using bilinearity of

into Eq. (13), we get (using bilinearity of ![]() )

)

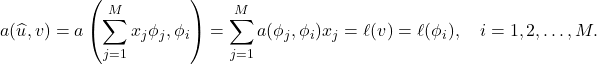

(16)

If we define ![]() and

and ![]() , then this gives us a matrix equation

, then this gives us a matrix equation ![]() for the unknowns

for the unknowns ![]() parametrizing

parametrizing ![]() . Thus, we can compute our Galerkin approximation by solving a linear system of equations.

. Thus, we can compute our Galerkin approximation by solving a linear system of equations.
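Here is a minimal sketch of Eq. (16) for the Poisson problem Eq. (5). As an illustrative choice, $S$ is the space of continuous piecewise-linear functions on a uniform mesh of $n$ elements of width $h = 1/n$, spanned by the interior “hat” functions $\phi_i$ that equal $1$ at node $x_i$ and $0$ at every other node. For $a(u, v) = \int_0^1 u'v' \, dx$, the matrix $A_{ij} = a(\phi_j, \phi_i)$ is tridiagonal with $2/h$ on the diagonal and $-1/h$ next to it; the load vector $b_i = F(\phi_i) = \int_0^1 f \phi_i \, dx$ is computed element by element with Gauss-Legendre quadrature. The test problem $f(x) = \pi^2 \sin(\pi x)$, $u(x) = \sin(\pi x)$ is made up. Because the basis consists of hat functions, $c_i$ is simply the value of $\hat{u}$ at node $x_i$ (for this 1D problem those nodal values come out accurate to rounding error, a known special feature of piecewise-linear elements in one dimension). The last lines check Eq. (15) against perturbed candidates $\tilde{u} \in S$, using $\|\hat{u} - \tilde{u}\|_a^2 = d^\top A d$ when $\tilde{u}$ has coefficients $c + d$.

```python
import numpy as np

n = 16                                    # number of elements (illustrative choice)
h = 1.0 / n
nodes = np.linspace(0.0, 1.0, n + 1)
m = n - 1                                 # interior hat functions phi_1, ..., phi_{n-1}
xg, wg = np.polynomial.legendre.leggauss(6)   # quadrature rule on [-1, 1]

def f(x):                                 # forcing with exact solution u = sin(pi x)
    return np.pi**2 * np.sin(np.pi * x)

def du(x):                                # u'(x) for the exact solution
    return np.pi * np.cos(np.pi * x)

def element_quadrature(e):
    """Quadrature nodes and weights on element e = [nodes[e], nodes[e + 1]]."""
    return nodes[e] + (xg + 1.0) * h / 2.0, wg * h / 2.0

# A_ij = a(phi_j, phi_i) = int phi_j' phi_i' dx  (phi_i' = +1/h or -1/h on its two elements)
A = (2.0 * np.eye(m) - np.eye(m, k=1) - np.eye(m, k=-1)) / h

# b_i = F(phi_i) = int f phi_i dx, accumulated element by element
b = np.zeros(m)
for e in range(n):
    x, w = element_quadrature(e)
    if e >= 1:                            # node e is interior: descending half of its hat
        b[e - 1] += np.sum(w * f(x) * (nodes[e + 1] - x) / h)
    if e + 1 <= m:                        # node e + 1 is interior: ascending half of its hat
        b[e] += np.sum(w * f(x) * (x - nodes[e]) / h)

c = np.linalg.solve(A, b)                 # c_i = u_hat(x_i) for hat functions
nodal_error = np.max(np.abs(c - np.sin(np.pi * nodes[1:-1])))

def energy_error(interior_vals):
    """||u - w||_a = sqrt(int (u' - w')^2 dx) for the piecewise-linear w with these nodal values."""
    vals = np.concatenate(([0.0], interior_vals, [0.0]))
    total = 0.0
    for e in range(n):
        x, w = element_quadrature(e)
        slope = (vals[e + 1] - vals[e]) / h      # w' is constant on each element
        total += np.sum(w * (du(x) - slope) ** 2)
    return np.sqrt(total)

# Eq. (15): any other u_tilde in S (coefficients c + d) is at least as far from u in the a-norm.
rng = np.random.default_rng(0)
err_hat = energy_error(c)
gaps, pythag_mismatch = [], []
for _ in range(100):
    d = 0.1 * rng.standard_normal(m)
    err_tilde = energy_error(c + d)
    gaps.append(err_tilde - err_hat)
    pythag_mismatch.append(abs(err_tilde**2 - err_hat**2 - d @ A @ d))
```

The dense `np.eye` construction keeps the sketch short; a real finite element code would store the tridiagonal matrix in a sparse format.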

We’ve covered a lot of ground, so let’s summarize. Galerkin approximation is a technique which allows us to approximately solve a large- or infinite-dimensional problem by searching for an approximate solution in a smaller finite-dimensional space $S$ of our choosing. This Galerkin approximation is the best approximate solution to our original problem in the $a$-norm. By choosing a basis $\phi_1, \ldots, \phi_n$ for our approximation space $S$, we reduce the problem of computing a Galerkin approximation to a linear system of equations.

Design of a Galerkin approximation scheme for a variational problem thus boils down to choosing the approximation space $\hat{V}$ and a basis $\phi_1, \ldots, \phi_n$. Picking $\hat{V}$ to be a space of piecewise polynomial functions (splines) gives the finite element method. Picking $\hat{V}$ to be a space spanned by a collection of trigonometric functions gives a Fourier spectral method. One can use a space spanned by wavelets as well. The Galerkin framework is extremely general: give it a subspace $\hat{V}$, and it will give you a linear system of equations whose solution is the best approximate solution in $\hat{V}$.
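To see the same machinery produce both a finite element method and a Fourier spectral method, the sketch below assumes the model problem $-u'' = g$ on $(0, 1)$ with $u(0) = u(1) = 0$, whose standard variational form uses $a(u, v) = \int_0^1 u' v' \, dx$ and $f(v) = \int_0^1 g v \, dx$. Only the basis changes: piecewise linear "hat" functions on a uniform mesh, or the sines $\sin(k\pi x)$. Integrals are approximated with composite Gauss-Legendre quadrature built from `numpy.polynomial.legendre.leggauss`; the test solution $u(x) = x(1-x)e^x$ and all function names are illustrative choices.

```python
import numpy as np

def quad_rule(panels=200, pts=5):
    """Composite Gauss-Legendre nodes and weights on [0, 1]."""
    t, w = np.polynomial.legendre.leggauss(pts)       # rule on [-1, 1]
    edges = np.linspace(0.0, 1.0, panels + 1)
    left, h = edges[:-1, None], np.diff(edges)[:, None]
    return (left + 0.5 * h * (t + 1)).ravel(), (0.5 * h * w).ravel()

X, W = quad_rule()

def galerkin_poisson(basis, dbasis, g):
    """Galerkin approximation for -u'' = g, u(0) = u(1) = 0 (assumed model problem).

    basis, dbasis -- lists of vectorized callables phi_j(x) and phi_j'(x).
    Returns the Galerkin matrix A and a callable u_hat.
    """
    P = np.array([phi(X) for phi in basis])     # P[j, q] = phi_j(x_q)
    D = np.array([dphi(X) for dphi in dbasis])  # D[j, q] = phi_j'(x_q)
    A = (D * W) @ D.T                           # A_ij = int phi_i' phi_j' dx
    b = (P * W) @ g(X)                          # b_i  = int g phi_i dx
    c = np.linalg.solve(A, b)
    return A, lambda x: sum(cj * phi(x) for cj, phi in zip(c, basis))

def hat_basis(m):
    """Piecewise linear hat functions on a uniform mesh with m interior nodes."""
    h = 1.0 / (m + 1)
    nodes = h * np.arange(1, m + 1)
    phis = [lambda x, xj=xj: np.maximum(0.0, 1.0 - np.abs(x - xj) / h) for xj in nodes]
    dphis = [lambda x, xj=xj: np.where(np.abs(x - xj) < h, -np.sign(x - xj) / h, 0.0)
             for xj in nodes]
    return phis, dphis

def sine_basis(m):
    """The first m sine modes sin(k pi x), which vanish at x = 0 and x = 1."""
    ks = range(1, m + 1)
    phis = [lambda x, k=k: np.sin(k * np.pi * x) for k in ks]
    dphis = [lambda x, k=k: k * np.pi * np.cos(k * np.pi * x) for k in ks]
    return phis, dphis

u_exact = lambda x: x * (1 - x) * np.exp(x)
g = lambda x: x * (x + 3) * np.exp(x)           # g = -u_exact''

xs = np.linspace(0.0, 1.0, 201)
for name, make_basis in [("finite elements", hat_basis), ("sines", sine_basis)]:
    A, u_hat = galerkin_poisson(*make_basis(19), g)
    print(name, "max error:", np.max(np.abs(u_hat(xs) - u_exact(xs))))
```

Only `make_basis` differs between the two runs; assembly and solve are identical. For this particular operator the sines are eigenfunctions, so the sine-basis matrix $A$ comes out diagonal, which is special to $-u''$ with these boundary conditions.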

Two design considerations factor into the choice of space $\hat{V}$ and basis $\phi_1, \ldots, \phi_n$. First, one wants to pick a space $\hat{V}$ in which the solution $u$ almost lies. This is the rationale behind spectral methods. Smooth functions are very well-approximated by short truncated Fourier expansions, so, if the solution $u$ is smooth, spectral methods will converge very quickly. Finite element methods, which often use low-order piecewise polynomial functions, converge much more slowly to a smooth $u$.

The second design consideration is the ease of solving the system $Ac = b$ resulting from the Galerkin approximation. If the basis functions $\phi_1, \ldots, \phi_n$ are local, in the sense that most pairs of basis functions $\phi_i$ and $\phi_j$ are never simultaneously nonzero at any point $x$ (more formally, $\phi_i$ and $\phi_j$ have disjoint supports for most $i$ and $j$), the system $Ac = b$ will be sparse and thus usually much easier to solve. Traditional spectral methods usually result in harder-to-solve dense linear systems of equations.[25: There are clever ways of making spectral methods which lead to sparse matrices. Conversely, if one uses high-order piecewise polynomials in a finite element approximation, one can get convergence properties similar to a spectral method. These are called spectral element methods.] It should be noted that both spectral and finite element methods lead to ill-conditioned matrices $A$, making integral equation-based approaches preferable if one needs high accuracy.[26: For example, only one researcher using a finite element method was able to meet Trefethen’s challenge to solve the Poisson equation to eight digits of accuracy on an L-shaped domain (see Section 6 of this paper). Getting that solution required using a finite element method of order 15!] Integral equations, themselves, are often solved using Galerkin approximation, leading to so-called boundary element methods.
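The sparsity and conditioning remarks can be checked with a small experiment. The sketch assumes a variable-coefficient model problem $-(k u')' = g$ on $(0, 1)$ with zero boundary values and $k(x) = e^x$, so that $a(u, v) = \int_0^1 k\, u' v' \, dx$; the coefficient is chosen only so that the sine basis no longer diagonalizes the operator. It counts the nonzero entries of $A$ for hat functions versus sines, then prints the condition number of the finite element matrix as the mesh is refined. Function names and the nonzero threshold `rel_tol` are illustrative.

```python
import numpy as np

k = np.exp                                   # assumed coefficient k(x) = e^x

def quad_rule(panels=256, pts=5):
    """Composite Gauss-Legendre nodes and weights on [0, 1]."""
    t, w = np.polynomial.legendre.leggauss(pts)
    edges = np.linspace(0.0, 1.0, panels + 1)
    left, h = edges[:-1, None], np.diff(edges)[:, None]
    return (left + 0.5 * h * (t + 1)).ravel(), (0.5 * h * w).ravel()

X, W = quad_rule()

def stiffness(dbasis):
    """A_ij = int k phi_i' phi_j' dx, computed by quadrature."""
    D = np.array([dphi(X) for dphi in dbasis])
    return (D * (k(X) * W)) @ D.T

def hat_derivs(m):
    """Derivatives of hat functions on a uniform mesh with m interior nodes."""
    h = 1.0 / (m + 1)
    return [lambda x, xj=xj: np.where(np.abs(x - xj) < h, -np.sign(x - xj) / h, 0.0)
            for xj in h * np.arange(1, m + 1)]

def sine_derivs(m):
    """Derivatives of sin(j pi x), j = 1..m."""
    return [lambda x, j=j: j * np.pi * np.cos(j * np.pi * x) for j in range(1, m + 1)]

def nnz(A, rel_tol=1e-10):
    """Count entries that are not negligible relative to the largest one."""
    return int(np.sum(np.abs(A) > rel_tol * np.abs(A).max()))

m = 15
print("hat functions:", nnz(stiffness(hat_derivs(m))), "of", m * m, "entries nonzero")
print("sines:        ", nnz(stiffness(sine_derivs(m))), "of", m * m, "entries nonzero")

# Condition number of the finite element matrix as the mesh size h is halved
for m in (15, 31, 63):
    print("m =", m, " cond(A) =", np.linalg.cond(stiffness(hat_derivs(m))))
```

Hat functions whose supports do not overlap contribute exact zeros, so the finite element matrix is tridiagonal with $3m - 2$ nonzeros, while every pair of sine modes interacts through $k(x)$. The finite element condition number should grow roughly fourfold each time $h$ is halved, consistent with the usual $O(h^{-2})$ growth for second-order problems.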

Upshot: Galerkin approximation is a powerful and extremely flexible methodology for approximately solving large- or infinite-dimensional problems by finding the best approximate solution in a smaller finite-dimensional subspace. To use a Galerkin approximation, one must convert the problem to a variational formulation and pick a basis for the approximation space. After doing this, computing the Galerkin approximation reduces to solving a system of linear equations whose dimension equals the dimension of the approximation space.


The “big idea” described here, which is indeed big, really goes back to Rayleigh. Ritz extended it to what we would recognize today as the (Bubnov) Galerkin method. Finite element interpolation on triangles dates to Courant (a student of Hilbert’s) in the 1920s, or perhaps teens.

There is an excellent article by Martin Gander about the history, https://www.unige.ch/~gander/Preprints/Ritz.pdf, which shows that Ritz created this method in his thesis.

Nice writeup! Seems like this might be a typo?

a(x,x) = x^\top Ax \ge \lambda_{\min} = x^\top x

The typo should be corrected now. Thanks for catching this!

The Golomb-Weinberger principle gives another (seemingly less well-known) perspective on “best approximation”. A related approach is the Nystrom method. Comparing these might be instructive.