In this post, I want to discuss a very beautiful piece of mathematics I stumbled upon recently. As a warning, this post will be more mathematical than most, but I will still try and sand off the roughest mathematical edges. This post is adapted from a much more comprehensive post by Paata Ivanishvili. My goal is to distill the main idea to its essence, deferring the stochastic calculus until it cannot be avoided.

Jensen’s inequality is one of the most important results in probability.

Jensen’s inequality. Let

be a (real) random variable and

a convex function such that both

and

are defined. Then

.

Here is the standard proof. A convex function has supporting lines. That is, at a point ![]() , there exists a slope

, there exists a slope ![]() such that

such that ![]() for all

for all ![]() . Invoke this result at

. Invoke this result at ![]() and

and ![]() and take expectations to conclude that

and take expectations to conclude that

![]()

In this post, I will outline a proof of Jensen’s inequality which is much longer and more complicated. Why do this? This more difficult proof illustrates an incredible powerful technique for proving inequalities, interpolation. The interpolation method can be used to prove a number of difficult and useful inequalities in probability theory and beyond. As an example, at the end of this post, we will see the Gaussian Jensen inequality, a striking generalization of Jensen’s inequality with many applications.

The idea of interpolation is as follows: Suppose I wish to prove ![]() for two numbers

for two numbers ![]() and

and ![]() . This may hard to do directly. With the interpolation method, I first construct a family of numbers

. This may hard to do directly. With the interpolation method, I first construct a family of numbers ![]() ,

, ![]() , such that

, such that ![]() and

and ![]() and show that

and show that ![]() is (weakly) increasing in

is (weakly) increasing in ![]() . This is typically accomplished by showing the derivative is nonnegative:

. This is typically accomplished by showing the derivative is nonnegative:

![]()

To prove Jensen’s inequality by interpolation, we shall begin with a special case. As often in probability, the simplest case is that of a Gaussian random variable.

Jensen’s inequality for a Gaussian. Let

be a standard Gaussian random variable (i.e., mean-zero and variance

) and let

be a thrice-differentiable convex function satisfying a certain technical condition.1Specifically, we assume the regularity condition

for some

for any Gaussian random variable

. Then

Note that the conclusion is exactly Jensen’s inequality, as we have assumed ![]() is mean-zero.

is mean-zero.

The difficulty with any proof by interpolation is to come up with the “right” ![]() . For us, the “right” answer will take the form

. For us, the “right” answer will take the form

![]()

where ![]() starts with no randomness and

starts with no randomness and ![]() is our standard Gaussian. To interpolate between these extremes, we increase the variance linearly from

is our standard Gaussian. To interpolate between these extremes, we increase the variance linearly from ![]() to

to ![]() . Thus, we define

. Thus, we define

![]()

Here, and throughout, ![]() denotes a Gaussian random variable with zero mean and variance

denotes a Gaussian random variable with zero mean and variance ![]() .

.

Let’s compute the derivative of ![]() . To do this, let

. To do this, let ![]() denote a small parameter which we will later send to zero. For us, the key fact will be that a

denote a small parameter which we will later send to zero. For us, the key fact will be that a ![]() can be realized as a sum of independent

can be realized as a sum of independent ![]() and

and ![]() random variables. Therefore, we write

random variables. Therefore, we write

![]()

We now evaluate ![]() by using Taylor’s formula

by using Taylor’s formula

(1) ![]()

where ![]() lies between

lies between ![]() and

and ![]() . Now, take expectations,

. Now, take expectations,

![Rendered by QuickLaTeX.com \[\mathbb{E}[ f(X_t+\Delta)]=\mathbb{E}[f(X_t)] + \mathbb{E}[f'(X_t)\Delta] + \frac{1}{2} \mathbb{E}[f''(X_t)] \mathbb{E}[\Delta^2] + \underbrace{\frac{1}{6} \mathbb{E}[f'''(\xi) \Delta^3]}_{:=\mathrm{Rem}(\delta)}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-ccacfa2622a31446e66624a9a8458dc4_l3.png)

The random variable ![]() has mean zero and variance

has mean zero and variance ![]() so this gives

so this gives

![]()

As we show below, the remainder term ![]() vanishes as

vanishes as ![]() . Thus, we can rearrange this expression to compute the derivative:

. Thus, we can rearrange this expression to compute the derivative:

![]()

The second derivative of a convex function is nonnegative: ![]() for every

for every ![]() . Therefore,

. Therefore,

![]()

Jensen’s inequality is proven! In fact, we’ve proven the stronger version of Jensen’s inequality:

![]()

This strengthened version can yield improvements. For instance, if ![]() is

is ![]() -smooth

-smooth

![]()

then we have

![]()

This inequality isn’t too hard to prove directly, but it does show that we’ve obtained something more than the simple proof of Jensen’s inequality.

What’s Really Going On Here?

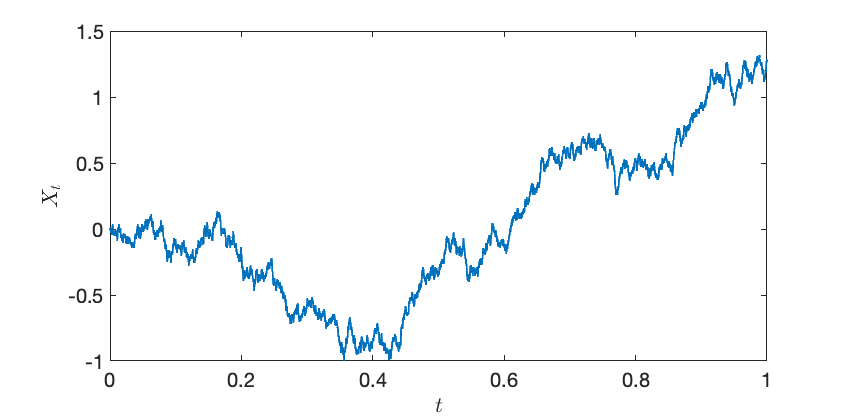

In our proof, we use a family of random variables ![]() , defined for each

, defined for each ![]() . Rather than treating these quantities as independent, we can think of them as a collective, comprising a random function

. Rather than treating these quantities as independent, we can think of them as a collective, comprising a random function ![]() known as a Brownian motion.

known as a Brownian motion.

The Brownian motion is a very natural way of interpolating between a constant ![]() and a Gaussian with mean

and a Gaussian with mean ![]() .2The Ornstein–Uhlenbeck process is another natural way of interpolating between a random variable and a Gaussian.

.2The Ornstein–Uhlenbeck process is another natural way of interpolating between a random variable and a Gaussian.

There is an entire subject known as stochastic calculus which allows us to perform computations with Brownian motion and other random processes. The rules of stochastic calculus can seem bizarre at first. For a function ![]() of a real number

of a real number ![]() , we often write

, we often write

![]()

For a function ![]() of a Brownian motion, the analog is Itô’s formula

of a Brownian motion, the analog is Itô’s formula

![]()

While this might seem odd at first, this formula may seem more sensible if we compare with (1) above. The idea, very roughly, is that for an increment of the Brownian motion ![]() over a time interval

over a time interval ![]() ,

, ![]() is a random variable with mean

is a random variable with mean ![]() , so we cannot drop the second term in the Taylor series, even up to first order in

, so we cannot drop the second term in the Taylor series, even up to first order in ![]() . Fully diving into the subtleties of stochastic calculus is far beyond the scope of this short post. Hopefully, the rest of this post, which outlines some extensions of our proof of Jensen’s inequality that require more stochastic calculus, will serve as an enticement to learn more about this beautiful subject.

. Fully diving into the subtleties of stochastic calculus is far beyond the scope of this short post. Hopefully, the rest of this post, which outlines some extensions of our proof of Jensen’s inequality that require more stochastic calculus, will serve as an enticement to learn more about this beautiful subject.

Proving Jensen by Interpolation

For the rest of this post, we will be less careful with mathematical technicalities. We can use the same idea that we used to prove Jensen’s inequality for a Gaussian random variable to prove Jensen’s inequality for any random variable ![]() :

:

![]()

Here is the idea of the proof.

First, realize that we can write any random variable ![]() as a function of a standard Gaussian random variable

as a function of a standard Gaussian random variable ![]() . Indeed, letting

. Indeed, letting ![]() and

and ![]() denote the cumulative distribution functions of

denote the cumulative distribution functions of ![]() and

and ![]() , one can show that

, one can show that

![]()

has the same distribution as ![]() .

.

Now, as before, we can interpolate between ![]() and

and ![]() using a Brownian motion. As a first, idea, we might try

using a Brownian motion. As a first, idea, we might try

![]()

Unfortunately, this choice of ![]() does not work! Indeed,

does not work! Indeed, ![]() does not even equal to

does not even equal to ![]() ! Instead, we must define

! Instead, we must define

![]()

We define ![]() using the conditional expectation of the final value

using the conditional expectation of the final value ![]() conditional on the Brownian motion

conditional on the Brownian motion ![]() at an earlier time

at an earlier time ![]() . Using a bit of elbow grease and stochastic calculus, one can show that

. Using a bit of elbow grease and stochastic calculus, one can show that

![]()

This provides a proof of Jensen’s inequality in general by the method if interpolation.

Gaussian Jensen Inequality

Now, we’ve come to the real treat, the Gaussian Jensen inequality. In the last section, we saw the sketch of a proof of Jensen’s inequality using interpolation. While it is cool that this proof is possible, we learned anything new since we can prove Jensen’s inequality in other ways. The Gaussian Jensen inequality provides an application of this technique which is hard to prove other ways. This section, in particular, is cribbing quite heavily from Paata Ivanishvili‘s excellent post on the topic.

Here’s the big question:

If

are “somewhat dependent”, for which functions does the multivariate Jensen’s inequality

(

hold?)

Considering extreme cases, if ![]() are entirely dependent, then we would only expect (

are entirely dependent, then we would only expect (![]() ) to hold when

) to hold when ![]() is convex. But if

is convex. But if ![]() are independent, then we can apply Jensen’s inequality to each coordinate one at a time to deduce

are independent, then we can apply Jensen’s inequality to each coordinate one at a time to deduce

![]()

We would like a result which interpolates between extremes {fully dependent, fully convex} and {independent, separately convex}. The Gaussian Jensen inequality provides exactly this tool.

As in the previous section, we can generate arbitrary random variables ![]() as functions

as functions ![]() of Gaussian random variables

of Gaussian random variables ![]() . We will use the covariance matrix

. We will use the covariance matrix ![]() of the Gaussian random variables

of the Gaussian random variables ![]() as our measure of the dependence of the random variables

as our measure of the dependence of the random variables ![]() . With this preparation in place, we have the following result:

. With this preparation in place, we have the following result:

Gaussian Jensen inequality. The conclusion of Jensen’s inequality

(2)

holds for all test functionsif and only if

Here,

is the Hessian matrix at

and

denotes the entrywise product of matrices.

This is a beautiful result with striking consequences (see Ivanishvili‘s post). The proof is essentially the same as the proof as Jensen’s inequality by interpolation with a little additional bookkeeping.

Let us confirm this result respects our extreme cases. In the case where ![]() are equal (and variance one),

are equal (and variance one), ![]() is a matrix of all ones and

is a matrix of all ones and ![]() for all

for all ![]() . Thus, the Gaussian Jensen inequality states that (2) holds if and only if

. Thus, the Gaussian Jensen inequality states that (2) holds if and only if ![]() is positive semidefinite for every

is positive semidefinite for every ![]() , which occurs precisely when

, which occurs precisely when ![]() is convex.

is convex.

Next, suppose that ![]() are independent and variance one, then

are independent and variance one, then ![]() is the identity matrix and

is the identity matrix and

![]()

A diagonal matrix is positive semidefinite if and only if its entries are nonnegative. Thus, (2) holds if and only if each of ![]() ‘s diagonal second derivatives are nonnegative

‘s diagonal second derivatives are nonnegative ![]() : this is precisely the condition for

: this is precisely the condition for ![]() to be separately convex in each argument.

to be separately convex in each argument.

There’s much more to be said about the Gaussian Jensen inequality, and I encourage you to read Ivanishvili‘s post to see the proof and applications. What I find so compelling about this result—so compelling that I felt the need to write this post—is how interpolation and stochastic calculus can be used to prove inequalities which don’t feel like stochastic calculus problems. The Gaussian Jensen inequality is a statement about functions of dependent Gaussian random variables; there’s nothing dynamic happening. Yet, to prove this result, we inject dynamics into the problem, viewing the two sides of our inequality as endpoints of a random process connecting them. This is a such a beautiful idea that I couldn’t help but share it.

One thought on “The Hard Way to Prove Jensen’s Inequality”