I’m delighted to share that my first applied mathematics paper, Minimal Rank Completions for Overlapping Blocks, coauthored with Nithin Govindarajan and Shivkumar Chandrasekaran, has been accepted for publication in Linear Algebra and its Applications. (We also have a preprint on arXiv.) In this post, I wanted to share what our paper is about and share an alternate derivation which ended up being cut from the final paper.

Our paper is concerned with the following question: given a collection of matrices which overlap in a particular way, can we choose the region of simultaneous overlap so as to minimize the rank of each of the individual matrices? This is a multi-objective optimization problem, so a priori it is not clear it has a simultaneous solution. So it was of great surprise to us when we discovered that it does. In fact, we were able to find a tractable algorithm to find all solutions to this multi-objective problem.

Our enormous thanks go to the anonymous reviewer, who went above and beyond by discovering and sketching to us a dramatically shorter and more revealing proof1In particular, our original proof only produced some solutions to this problem, where the reviewer’s improved proof strategy allowed us to characterize all solutions. of our main theorem. We are truly indebted to this extraordinary reviewer for the significantly streamlined and more understandable presentation in the current iteration of our paper.

For the remainder of this post, I want to discuss minimal rank completions in a little more generality and present a solution to a special case of a minimal rank completion problem which can be tractably solved. I will present a solution to this problem we discovered which I have not seen elsewhere in the literature. I hope that this perspective will be complementary to existing solutions to this problem published in the literature including the summary of this result in our paper.

Minimal Rank Completion Problems

Minimal rank completions have achieved a lot of buzz in statistics and data science. Given a matrix with unknown entries, it is often very natural to impute the missing entries as those minimizing the rank of the matrix. This is justified by a kind of Occam’s razor argument: big data matrices are very often (approximately) low-rank, so when data is missing, we may assume it takes whatever values are necessary to minimize the rank.

Finding the rank-minimizing choice of missing entries is, in general, NP-hard. However, under certain assumptions, semidefinite programming relaxations can sometimes exactly recover the missing entries.

Alternately, if the missing entries belong to a special pattern, it may be possible to find the rank-minimizing choice of the unknown entries using linear algebraic tools like matrix factorizations. These approaches have significantly more limited domains of applicability than optimization-based approaches, but have advantages in that they

- can be more tractable as they involve matrix factorizations rather than solving optimization problems;

- work over arbitrary fields of numbers, such as finite fields appearing in computer science;

- can find all ways of choosing the entries to minimize the rank, in particular certifying or dis-certifying the uniqueness of the rank-minimizing choice; and

- are guaranteed to find the rank-minimizer, without any statistical assumptions.

These algebraic methods have their roots in systems theory, integral operators, and rank-structured matrices. The last of these was the application which motivated our interest in the subject.

Our paper concerns an overlapping variant of this problem, where we simultaneously minimize the ranks of several matrices by choosing the entries in the overlap carefully. This problem emerged to us naturally in the construction of certain matrix representations, and we hope it might prove useful in tackling other problems. As it turns out, the overlapping problem can be solved very much in the spirit of a much simpler problem, the block ![]() minimal rank completion problem, which we will spend most of the remainder of this post discussing.

minimal rank completion problem, which we will spend most of the remainder of this post discussing.

A Solution to the Block 2×2 Case

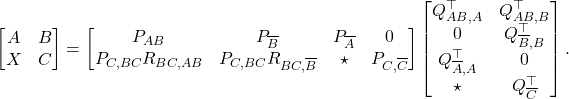

The block ![]() minimal rank completion problem is as follows: given a partially filled block matrix

minimal rank completion problem is as follows: given a partially filled block matrix

(1) ![]()

how can the “?” be filled in to minimize the rank of this matrix?

A generalized version of this problem was originally solved by Kaashoek and Woerdeman. An alternate derivation using matrix factorizations is given by Eidelman, Gohberg, and Haimovici, though they only find some of the solutions to this problem. We seek to characterize all ways of choosing the “?” so that the rank is minimize the rank.

Here, I present the solution to this problem which my coauthors and I originally discovered, which is different than the one we summarize in the final version of our paper.2This construction does appear in Section 4 of an initial preprint of ours on rank-structured matrices. This solution is in the spirit of the one by Eidelman, Gohberg, and Haimovici but does produce all solutions.

Let’s start by recalling some facts about linear algebra. Given an ![]() real3The analysis in this discussion holds over any field, but we shall use the real numbers for concreteness. matrix

real3The analysis in this discussion holds over any field, but we shall use the real numbers for concreteness. matrix ![]() , one can define its column space, which I will denote

, one can define its column space, which I will denote ![]() , to be the vector space consist of all linear combinations of the columns of

, to be the vector space consist of all linear combinations of the columns of ![]() . From this column space, or indeed any vector subspace of

. From this column space, or indeed any vector subspace of ![]() , one can extract a basis for this subspace, meaning every vector in the subspace can be uniquely decomposed as a linear combination of elements from the basis. In this discussion, we shall always arrange the basis vectors as columns of a basis matrix

, one can extract a basis for this subspace, meaning every vector in the subspace can be uniquely decomposed as a linear combination of elements from the basis. In this discussion, we shall always arrange the basis vectors as columns of a basis matrix ![]() . If we instead consider the row space

. If we instead consider the row space ![]() of

of ![]() , then we arrange the elements of a basis as rows of a matrix

, then we arrange the elements of a basis as rows of a matrix ![]() .

.

Before we solve the problem, let’s reason about the lowest possible rank we could possibly expect for the completed matrix. Since the rank of a matrix can be no smaller than the rank of any of its submatrices, clearly we must have that, for any assignment ![]() of the “?”,

of the “?”,

(2) ![]()

However, in general, the rank of the completed matrix must be higher than ![]() , as is exemplified when

, as is exemplified when ![]() and

and ![]() are both zero but

are both zero but ![]() is not. In addition to rank of

is not. In addition to rank of ![]() , we must also account for rows of

, we must also account for rows of ![]() which cannot be written as linear combinations of the rows above, no matter how

which cannot be written as linear combinations of the rows above, no matter how ![]() is chosen. With a little noodling, one can convince oneself that there are always at least

is chosen. With a little noodling, one can convince oneself that there are always at least ![]() such rows, leading to the bound

such rows, leading to the bound

(3) ![]()

We shall show that, by judicious choice of ![]() , Eq. (3) can always be achieved with equality, making

, Eq. (3) can always be achieved with equality, making ![]() the minimal rank for this completion problem.

the minimal rank for this completion problem.

Let us now find such a rank-minimizing ![]() . The construction begins by considering the column spaces

. The construction begins by considering the column spaces ![]() and

and ![]() of the matrices

of the matrices ![]() and

and ![]() . The intersection of these column spaces

. The intersection of these column spaces ![]() is again a vector subspace, and we choose a basis

is again a vector subspace, and we choose a basis ![]() for it. The subspace

for it. The subspace ![]() might have a smaller size than

might have a smaller size than ![]() . Therefore, to find a basis for

. Therefore, to find a basis for ![]() , we can extend the basis

, we can extend the basis ![]() by adding new columns

by adding new columns ![]() to it so that the enlarged matrix

to it so that the enlarged matrix ![]() forms a basis for

forms a basis for ![]() . Similarly, we can find new columns

. Similarly, we can find new columns ![]() to add to

to add to ![]() so that the matrix

so that the matrix ![]() forms a basis for

forms a basis for ![]() .

.

Now comes something subtle. Since ![]() forms a basis for

forms a basis for ![]() , every column in

, every column in ![]() can be written as a linear combination of columns in

can be written as a linear combination of columns in ![]() . In matrix language, this means there exists a matrix

. In matrix language, this means there exists a matrix ![]() such that

such that ![]() —in fact, this exact

—in fact, this exact ![]() forms a basis for

forms a basis for ![]() . Since

. Since ![]() is divided into two collections of columns, it is only natural to similarly (and conformally) divide

is divided into two collections of columns, it is only natural to similarly (and conformally) divide ![]() into two pieces as

into two pieces as ![]() . Thus, doing the same with

. Thus, doing the same with ![]() as we did with

as we did with ![]() , we obtain two matrix factorizations

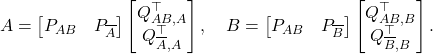

, we obtain two matrix factorizations

(4)

Now we do the same song and dance with the row spaces of ![]() and

and ![]() . Let’s go through the construction somewhat quickly. We start with a basis

. Let’s go through the construction somewhat quickly. We start with a basis ![]() for the intersection of

for the intersection of ![]() . We then extend these to bases

. We then extend these to bases ![]() and

and ![]() for

for ![]() and

and ![]() . From here, we derive the existence of matrix factorizations analogous to Eq. (4):

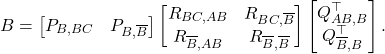

. From here, we derive the existence of matrix factorizations analogous to Eq. (4):

(5) ![]()

For the next part, we shall take advantage of a powerful fact: if I have a bases ![]() and

and ![]() for the row and column spaces of a matrix

for the row and column spaces of a matrix ![]() , there exists a nonsingular matrix

, there exists a nonsingular matrix ![]() for which

for which ![]() . Applying this fact to the bases

. Applying this fact to the bases ![]() and

and ![]() for

for ![]() ‘s column and row spaces, we get the existence of a matrix

‘s column and row spaces, we get the existence of a matrix ![]() such that

such that

(6)

We now have all ingredients to describe the solution. Rather than trying to “derive” the solution in a rigorous way, let us try and discover the solution in a non-rigorous way and justify our work later. We’re hoping to find assignments ![]() of the “?” such that

of the “?” such that ![]() achieves the minimum possible rank

achieves the minimum possible rank ![]() defined in Eq. (3). To do so, let’s try and find a rank factorization of the completed matrix and then infer what values

defined in Eq. (3). To do so, let’s try and find a rank factorization of the completed matrix and then infer what values ![]() will take. First off, let’s build a rank factorization for

will take. First off, let’s build a rank factorization for ![]() using the building blocks we’ve already built

using the building blocks we’ve already built

(7)

Now we want to extend this to a rank factorization for the entire completed matrix. Let’s build up to this in stages, denoting by ![]() entries whose value we have yet to determine. For us to have a hope of representing the matrix

entries whose value we have yet to determine. For us to have a hope of representing the matrix ![]() , we’ll need to somehow add

, we’ll need to somehow add ![]() into our rank factorization. Doing exactly this, we get the partially specified rank factorization

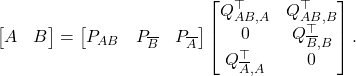

into our rank factorization. Doing exactly this, we get the partially specified rank factorization

(8)

Now, to fill in the second block row of the left factor, we recall that ![]() . From Eqs. (5) and (6), we deduce that

. From Eqs. (5) and (6), we deduce that ![]() . Thus, we can fill in more entries:

. Thus, we can fill in more entries:

(9)

With these entries filled in, the remaining ![]() ‘s can be chosen arbitrarily. Assigning names

‘s can be chosen arbitrarily. Assigning names ![]() and

and ![]() to these free variables, we conclude that

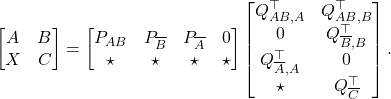

to these free variables, we conclude that

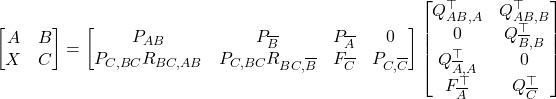

(10)

and

(11) ![]()

From all the analysis previous, we know that all ![]() ‘s of the form Eq. (11) solve the minimal rank completion problem, making the completed matrix achieve the minimal rank

‘s of the form Eq. (11) solve the minimal rank completion problem, making the completed matrix achieve the minimal rank ![]() defined in Eq. (3). With a little more elbow grease, one can also confirm that all such minimal completions

defined in Eq. (3). With a little more elbow grease, one can also confirm that all such minimal completions ![]() are of the form Eq. (11), proving that Eq. (11) indeed characterizes all solutions to the minimal rank completion problem. This completes the characterization and construction of the complete set of minimizers to the block

are of the form Eq. (11), proving that Eq. (11) indeed characterizes all solutions to the minimal rank completion problem. This completes the characterization and construction of the complete set of minimizers to the block ![]() minimal rank completion problem.

minimal rank completion problem.

The Overlapping Block Minimal Rank Completion Problem

If you found this post interesting, be sure to check out our paper (or here on arXiv) for an explanation of a different way of thinking about the solution of the block ![]() minimal rank completion problem and a solution to a more general “overlapping” variant. The treatment should be reasonably self-contained, and we hope the solution to this problem could prove a useful tool in tackling open problems in systems theory and the study of rank-structured matrices.

minimal rank completion problem and a solution to a more general “overlapping” variant. The treatment should be reasonably self-contained, and we hope the solution to this problem could prove a useful tool in tackling open problems in systems theory and the study of rank-structured matrices.